Artificial Intelligence

Making machines smarter for improved safety, efficiency and comfort.

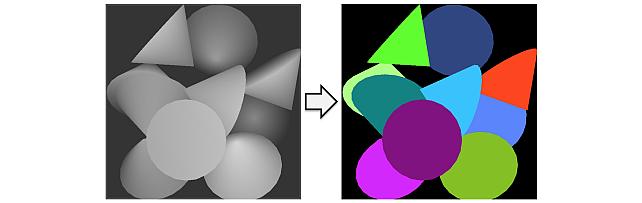

Our AI research encompasses advances in computer vision, speech and audio processing, as well as data analytics. Key research themes include improved perception based on machine learning techniques, learning control policies through model-based reinforcement learning, as well as cognition and reasoning based on learned semantic representations. We apply our work to a broad range of automotive and robotics applications, as well as building and home systems.


Researchers

- Jonathan Le Roux
- Toshiaki Koike-Akino
- Ye Wang
- Gordon Wichern
- Anoop Cherian
- Tim K. Marks
- Chiori Hori
- Michael J. Jones
- Kieran Parsons
- Jing Liu
- Suhas Lohit
- Daniel N. Nikovski
- Yoshiki Masuyama
- Matthew Brand
- Kuan-Chuan Peng
- Pu (Perry) Wang
- Moitreya Chatterjee
- Philip V. Orlik
- Siddarth Jain
- Hassan Mansour
- Petros T. Boufounos
- Radu Corcodel
- Pedro Miraldo
- William S. Yerazunis
- Arvind Raghunathan
- Yebin Wang
- Jianlin Guo
- Hongbo Sun
- Christoph Boeddeker
- Chungwei Lin
- Yanting Ma
- Saviz Mowlavi
- Bingnan Wang
- Stefano Di Cairano
- Lalit Manam
- Anthony Vetro
- Jinyun Zhang
- Vedang M. Deshpande
- Kaen Kogashi
- Christopher R. Laughman
- Dehong Liu
- Alexander Schperberg
- Kei Suzuki
- Abraham P. Vinod
- Kenji Inomata

Awards

AWARD: MERL team wins the Generative Data Augmentation of Room Acoustics (GenDARA) 2025 Challenge

Date: April 7, 2025

Awarded to: Christopher Ick, Gordon Wichern, Yoshiki Masuyama, François G. Germain, and Jonathan Le Roux

MERL Contacts: Jonathan Le Roux; Yoshiki Masuyama; Gordon Wichern

Research Areas: Artificial Intelligence, Machine Learning, Speech & Audio

Brief: MERL's Speech & Audio team ranked 1st out of 3 teams in the Generative Data Augmentation of Room Acoustics (GenDARA) 2025 Challenge, which focused on "generating room impulse responses (RIRs) to supplement a small set of measured examples and using the augmented data to train speaker distance estimation (SDE) models". The team was led by MERL intern Christopher Ick, and also included Gordon Wichern, Yoshiki Masuyama, François G. Germain, and Jonathan Le Roux.

The GenDARA Challenge was organized as part of the Generative Data Augmentation (GenDA) workshop at the 2025 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP 2025), and held on April 7, 2025 in Hyderabad, India. Yoshiki Masuyama presented the team's method, "Data Augmentation Using Neural Acoustic Fields With Retrieval-Augmented Pre-training".

The GenDARA challenge aims to promote the use of generative AI to synthesize RIRs from limited room data, as collecting or simulating RIR datasets at scale remains a significant challenge due to high costs and trade-offs between accuracy and computational efficiency. The challenge asked participants to first develop RIR generation systems capable of expanding a sparse set of labeled room impulse responses by generating RIRs at new source–receiver positions. They were then tasked with using this augmented dataset to train speaker distance estimation systems. Ranking was determined by the overall performance on the downstream SDE task.

MERL’s approach to the GenDARA challenge centered on a geometry-aware neural acoustic field model that was first pre-trained on a large external RIR dataset to learn generalizable mappings from 3D room geometry to room impulse responses. For each challenge room, the model was then adapted or fine-tuned using the small number of provided RIRs, enabling high-fidelity generation of RIRs at unseen source–receiver locations. These augmented RIR sets were subsequently used to train the SDE system, improving speaker distance estimation by providing richer and more diverse acoustic training data.
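As a rough illustration of the last step described above (turning generated RIRs into SDE training data), the sketch below convolves dry speech with an RIR and labels the result with the source–receiver distance. It is not the team's implementation: the neural acoustic field itself is not shown, and the function name `make_sde_example`, the toy RIR, and the positions are all hypothetical.

```python
import numpy as np

def make_sde_example(dry_speech, rir, source_pos, receiver_pos):
    """Build one (reverberant speech, distance) pair for SDE training.

    Assumption: `rir` would come from an RIR generator (for example a
    fine-tuned acoustic field model); here it is just an array.
    """
    # Reverberant speech is the dry signal convolved with the RIR,
    # truncated to the length of the dry signal.
    wet = np.convolve(dry_speech, rir)[: len(dry_speech)]
    # The regression target is the Euclidean source-receiver distance.
    diff = np.asarray(source_pos, dtype=float) - np.asarray(receiver_pos, dtype=float)
    return wet, float(np.linalg.norm(diff))

# Toy usage: a 3-tap "RIR" that delays by two samples and halves the amplitude.
dry = np.array([1.0, 2.0, 3.0, 0.0, 0.0])
toy_rir = np.array([0.0, 0.0, 0.5])
wet, distance = make_sde_example(dry, toy_rir, (0.0, 0.0, 0.0), (3.0, 4.0, 0.0))
```

In a real pipeline, each generated RIR at a new source–receiver position would yield many such pairs, one per dry utterance.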


AWARD: MERL Wins Awards at NeurIPS LLM Privacy Challenge

Date: December 15, 2024

Awarded to: Jing Liu, Ye Wang, Toshiaki Koike-Akino, Tsunato Nakai, Kento Oonishi, Takuya Higashi

MERL Contacts: Toshiaki Koike-Akino; Jing Liu; Ye Wang

Research Areas: Artificial Intelligence, Machine Learning, Information Security

Brief: The Mitsubishi Electric Privacy Enhancing Technologies (MEL-PETs) team, a collaboration of MERL and Mitsubishi Electric researchers, won awards at the NeurIPS 2024 Large Language Model (LLM) Privacy Challenge. In the Blue Team track of the challenge, we won the 3rd Place Award, and in the Red Team track, we won the Special Award for Practical Attack.


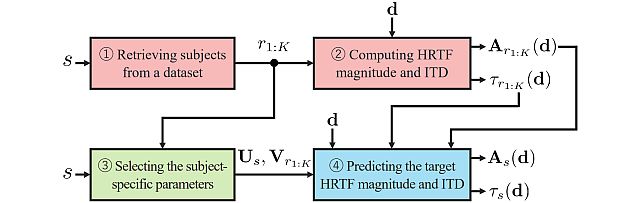

AWARD: MERL team wins the Listener Acoustic Personalisation (LAP) 2024 Challenge

Date: August 29, 2024

Awarded to: Yoshiki Masuyama, Gordon Wichern, François G. Germain, Christopher Ick, and Jonathan Le Roux

MERL Contacts: Jonathan Le Roux; Gordon Wichern; Yoshiki Masuyama

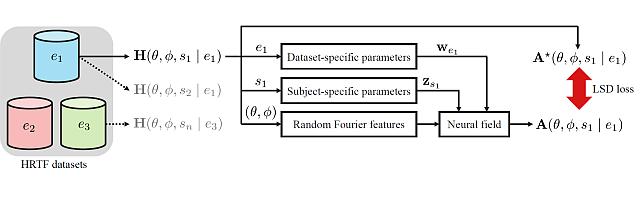

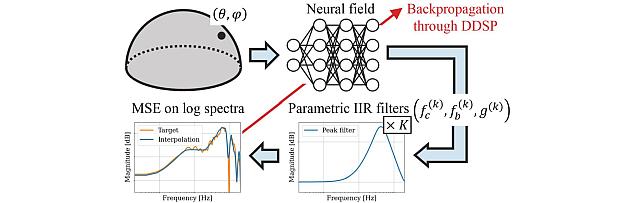

Research Areas: Artificial Intelligence, Machine Learning, Speech & Audio

Brief: MERL's Speech & Audio team ranked 1st out of 7 teams in Task 2 of the 1st SONICOM Listener Acoustic Personalisation (LAP) Challenge, which focused on "Spatial upsampling for obtaining a high-spatial-resolution HRTF from a very low number of directions". The team was led by Yoshiki Masuyama, and also included Gordon Wichern, François Germain, MERL intern Christopher Ick, and Jonathan Le Roux.

The LAP Challenge workshop and award ceremony were hosted by the 32nd European Signal Processing Conference (EUSIPCO 2024) on August 29, 2024 in Lyon, France. Yoshiki Masuyama presented the team's method, "Retrieval-Augmented Neural Field for HRTF Upsampling and Personalization", and received the award from Prof. Michele Geronazzo (University of Padova, IT, and Imperial College London, UK), Chair of the Challenge's Organizing Committee.

The LAP challenge aims to explore challenges in the field of personalized spatial audio, with the first edition focusing on the spatial upsampling and interpolation of head-related transfer functions (HRTFs). HRTFs with dense spatial grids are required for immersive audio experiences, but their recording is time-consuming. Although HRTF spatial upsampling has recently shown remarkable progress with approaches involving neural fields, HRTF estimation accuracy remains limited when upsampling from only a few measured directions, e.g., 3 or 5 measurements. The MERL team tackled this problem by proposing a retrieval-augmented neural field (RANF). RANF retrieves a subject whose HRTFs are close to those of the target subject at the measured directions from a library of subjects. The HRTF of the retrieved subject at the target direction is fed into the neural field in addition to the desired sound source direction. The team also developed a neural network architecture that can handle an arbitrary number of retrieved subjects, inspired by a multi-channel processing technique called transform-average-concatenate.
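The two ideas in the paragraph above, retrieving similar subjects and handling an arbitrary number of them, can be sketched as follows. This is a simplified illustration, not the RANF implementation: the mean-squared distance over magnitudes, the `tanh` nonlinearities, and the names `retrieve_subjects` and `tac` are assumptions made for the example.

```python
import numpy as np

def retrieve_subjects(target_meas, library_meas, k=1):
    """Return indices of the k library subjects closest to the target.

    target_meas:  (D, F) HRTF magnitudes at the D measured directions.
    library_meas: (S, D, F) the same directions for S library subjects.
    Assumption: closeness is mean squared difference; the source only
    says the retrieved HRTFs are "close" at the measured directions.
    """
    dist = np.mean((library_meas - target_meas[None]) ** 2, axis=(1, 2))
    return np.argsort(dist)[:k]

def tac(features, w_transform, w_average, w_concat):
    """Transform-average-concatenate over K retrieved subjects.

    features: (K, C). The same weights are shared across subjects, so K
    can vary and the output is equivariant to subject ordering.
    """
    h = np.tanh(features @ w_transform)                 # transform each subject: (K, H)
    avg = np.tanh(h.mean(axis=0) @ w_average)           # average across subjects: (H,)
    cat = np.concatenate([h, np.broadcast_to(avg, h.shape)], axis=1)  # (K, 2H)
    return np.tanh(cat @ w_concat)                      # per-subject output: (K, C_out)

# Toy usage with random data standing in for HRTFs and learned weights.
rng = np.random.default_rng(0)
library = rng.standard_normal((4, 3, 8))    # 4 subjects, 3 directions, 8 bins
target = library[2] + 0.01                  # nearly identical to subject 2
nearest = retrieve_subjects(target, library, k=2)

feats = rng.standard_normal((3, 5))         # features for 3 retrieved subjects
w1 = rng.standard_normal((5, 6))
w2 = rng.standard_normal((6, 6))
w3 = rng.standard_normal((12, 4))
out = tac(feats, w1, w2, w3)
```

Because the averaging step pools over the subject axis, the same weights apply whether one or many subjects are retrieved.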


News & Events

NEWS: MERL Presents 7 Papers and 2 Workshops at CVPR 2026

Date: June 3, 2026 - June 7, 2026

Where: Colorado Convention Center, Denver, Colorado

MERL Contacts: Moitreya Chatterjee; Anoop Cherian; Kaen Kogashi; Suhas Lohit; Lalit Manam; Tim K. Marks; Pedro Miraldo; Kuan-Chuan Peng

Research Areas: Artificial Intelligence, Computer Vision, Machine Learning

Brief: MERL researchers are proud to present 7 papers, including two highlight papers (top 3.6% of submissions), and 2 workshops at CVPR 2026. CVPR, taking place from June 3-7 in Denver, CO, USA, is a premier international conference in computer vision.

Papers with MERL Authors:

1. Point4Cast: Streaming Dynamic Scene Reconstruction and Forecasting by Xinhang Liu, Pedro Miraldo, Suhas Lohit, Huaizu Jiang, Naoko Sawada, Yu-Wing Tai, Chi-Keung Tang, and Moitreya Chatterjee (Highlight Paper)

2. Parallel Rigidity Matters for Bundle Adjustment by Lalit Manam and Venu Govindu (Highlight Paper)

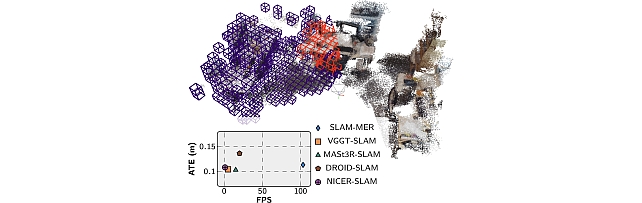

3. Revisiting Monocular SLAM with Spatio-Temporal Scene Modeling by Valter Piedade, Lalit Manam, Masashi Yamazaki, and Pedro Miraldo

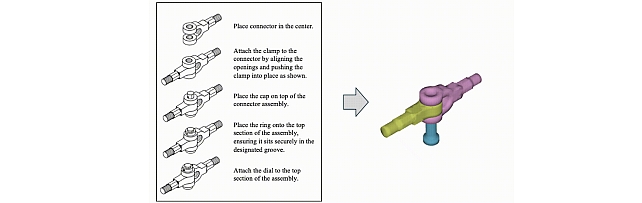

4. AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects by Danrui Li, Jiahao Zhang, Bernhard Egger, Moitreya Chatterjee, Suhas Lohit, Tim K. Marks, and Anoop Cherian

5. LASER: Layer-wise Scale Alignment for Training-Free Streaming 4D Reconstruction by Tianye Ding, Yiming Xie, Yiqing Liang, Moitreya Chatterjee, Pedro Miraldo, and Huaizu Jiang

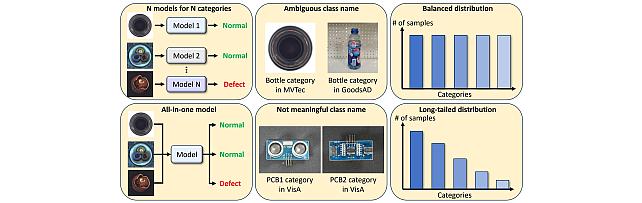

6. SoREL: Soft-Label Refurbishment with Ensemble Learning for Noisy Long-Tailed Classification by Jun-Wei Hsieh, Ying-Hsuan Wu, Yi-Kuan Hsieh, Xin Li, Kuan-Chuan Peng, and Ming-Ching Chang (CVPR Findings paper)

7. MMHOI: Complex 3D Multi-Human-Object Interaction Understanding by Kaen Kogashi and Anoop Cherian (PhysHuman Workshop paper)

Workshops Co-Organized by MERL:

1. Multimodal Algorithmic Reasoning Workshop by Anoop Cherian, Suhas Lohit, Kuan-Chuan Peng, Honglu Zhou, Kevin Smith, and Josh Tenenbaum

2. The Third Workshop on Anomaly Detection with Foundation Models by Kuan-Chuan Peng, Ying Zhao, and Abhishek Aich


EVENT: MERL Contributes to ICASSP 2026

Date: Monday, May 4, 2026 - Friday, May 8, 2026

Location: Barcelona, Spain

MERL Contacts: Wael H. Ali; Petros T. Boufounos; Chiori Hori; Jonathan Le Roux; Yanting Ma; Hassan Mansour; Yoshiki Masuyama; Joshua Rapp; Anthony Vetro; Pu (Perry) Wang; Gordon Wichern

Research Areas: Artificial Intelligence, Computational Sensing, Computer Vision, Machine Learning, Optimization, Signal Processing, Speech & Audio

Brief: MERL has made numerous contributions to both the organization and technical program of ICASSP 2026, which is being held in Barcelona, Spain from May 4-8, 2026.

Sponsorship

MERL is proud to be a Silver Patron of the conference and will participate in the student job fair on Thursday, May 7. Please join this session to learn more about employment opportunities at MERL, including openings for research scientists, post-docs, and interns. MERL Distinguished Research Scientists Petros T. Boufounos and Jonathan Le Roux will also present a spotlight session on MERL’s research in signal processing on Tuesday, May 5 at 13:05. Finally, MERL will sponsor a photo booth on Thursday, May 7 and Friday, May 8, where ICASSP participants can take professional photos with friends and colleagues, which will be emailed to them.

MERL is also pleased to be the sponsor of two IEEE Awards that will be presented at the conference. We congratulate Prof. Nasir Ahmed, the recipient of the 2026 IEEE Fourier Award for Signal Processing, and Dr. Alex Acero, the recipient of the 2026 IEEE James L. Flanagan Speech and Audio Processing Award.

Technical Program

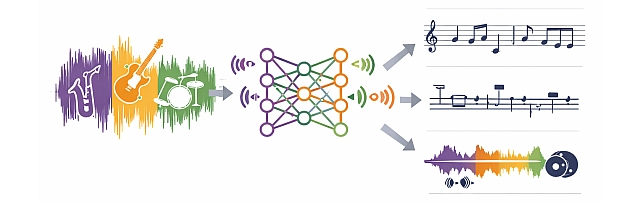

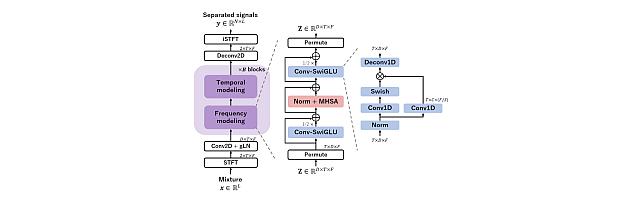

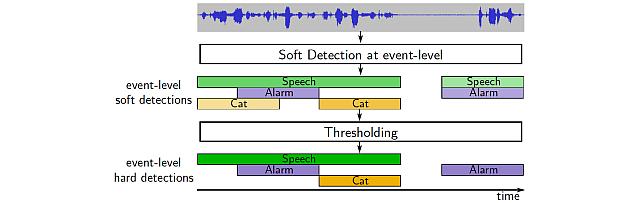

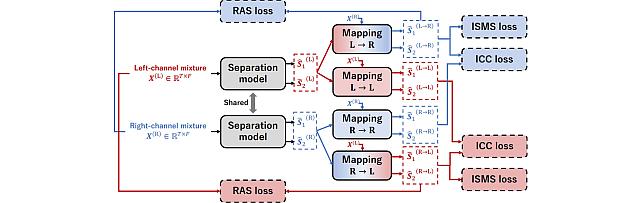

MERL is presenting 8 papers in the main conference on a wide range of topics including source separation, spatial audio, neural audio codecs, radar-based pose estimation, camera-based airflow sensing, radar array processing, and optimization. Another paper on neural speech codecs will be presented at the Low-Resource Audio Codec (LRAC) Satellite Workshop. MERL researchers will also present two articles published in IEEE Open Journal of Signal Processing (OJSP) on music source separation and head-related transfer function (HRTF) modeling. Finally, Speech and Audio Team members Yoshiki Masuyama and Jonathan Le Roux co-organized a Special Session on Neural Spatial Audio Processing, which will feature six oral presentations.

About ICASSP

ICASSP is the flagship conference of the IEEE Signal Processing Society, and the world's largest and most comprehensive technical conference focused on research advances and the latest technological developments in signal and information processing. The event attracts more than 4000 participants each year.


Research Highlights

-

Point4Cast: Streaming Dynamic Scene Reconstruction and Forecasting -

AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects -

SLAM-MER: Revisiting Monocular SLAM with Spatio-Temporal Scene Modeling -

Parallel Rigidity Matters for Bundle Adjustment -

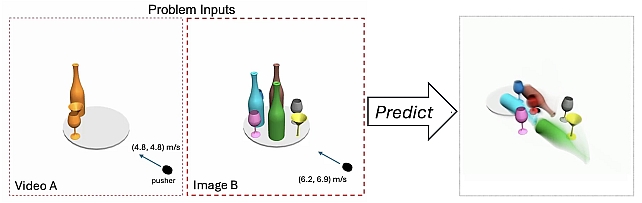

LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines -

PS-NeuS: A Probability-guided Sampler for Neural Implicit Surface Rendering -

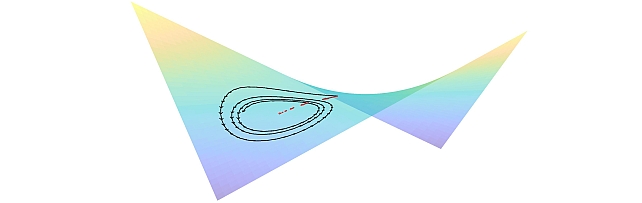

Quantum AI Technology -

TI2V-Zero: Zero-Shot Image Conditioning for Text-to-Video Diffusion Models -

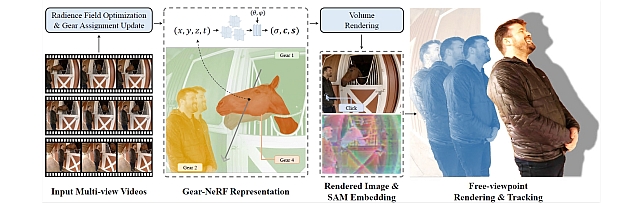

Gear-NeRF: Free-Viewpoint Rendering and Tracking with Motion-Aware Spatio-Temporal Sampling -

Private, Secure, and Reliable Artificial Intelligence -

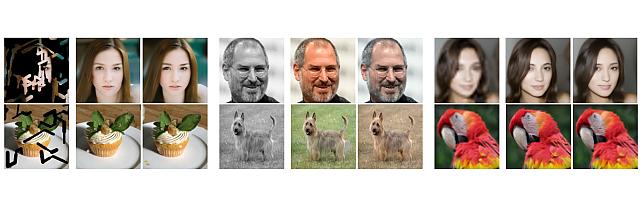

Steered Diffusion -

Sustainable AI -

Robust Machine Learning -

mmWave Beam-SNR Fingerprinting (mmBSF) -

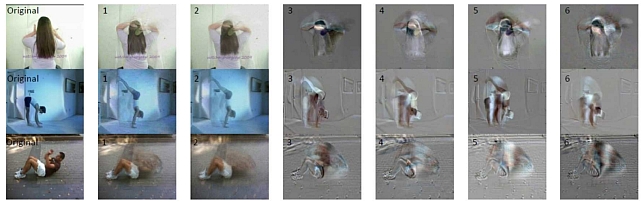

Video Anomaly Detection -

Biosignal Processing for Human-Machine Interaction -

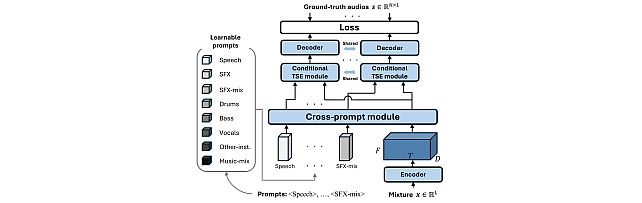

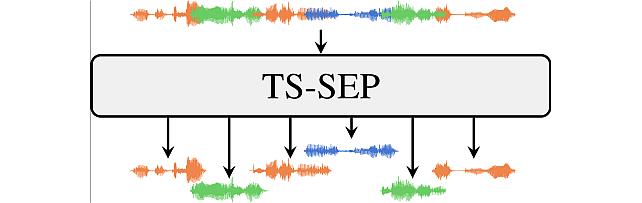

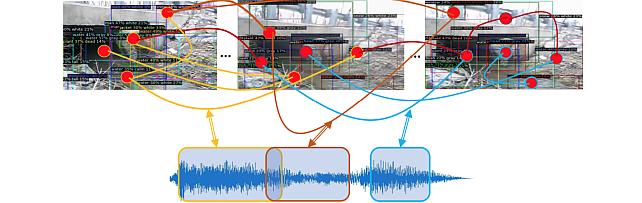

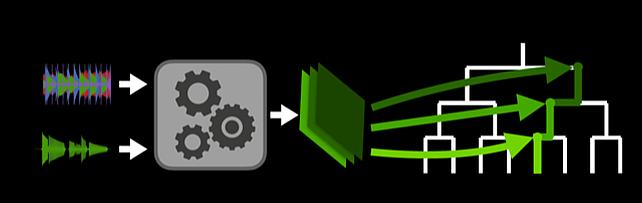

Task-aware Unified Source Separation - Audio Examples

-

-

Internships

- CI0213: Internship - Efficient Foundation Models for Edge Intelligence
- EA0234: Internship - Multi-modal sensor fusion for predictive maintenance
- SA0191: Internship - Human-Robot Interaction Based on Multimodal Scene Understanding

Openings

- CI0177: Postdoctoral Research Fellow - Agentic AI
- SA0297: Postdoctoral Research Fellow - AI for Science

Recent Publications

- Jun-Wei Hsieh, Ying-Hsuan Wu, Yi-Kuan Hsieh, Xin Li, Kuan-Chuan Peng, and Ming-Ching Chang, "SoREL: Soft-Label Refurbishment with Ensemble Learning for Noisy Long-Tailed Classification", CVPR Findings, June 2026. (TR2026-075)

      @inproceedings{Hsieh2026jun2,
        author = {Hsieh, Jun-Wei and Wu, Ying-Hsuan and Hsieh, Yi-Kuan and Li, Xin and Peng, Kuan-Chuan and Chang, Ming-Ching},
        title = {{SoREL: Soft-Label Refurbishment with Ensemble Learning for Noisy Long-Tailed Classification}},
        booktitle = {CVPR Findings},
        year = 2026,
        month = jun,
        url = {https://www.merl.com/publications/TR2026-075}
      }

- Jun-Wei Hsieh, Ying-Hsuan Wu, Yi-Kuan Hsieh, Xin Li, Kuan-Chuan Peng, and Ming-Ching Chang, "SoREL: Soft-Label Refurbishment with Ensemble Learning for Noisy Long-Tailed Classification Supplementary Material", CVPR Findings, June 2026. (TR2026-074)

      @inproceedings{Hsieh2026jun,
        author = {Hsieh, Jun-Wei and Wu, Ying-Hsuan and Hsieh, Yi-Kuan and Li, Xin and Peng, Kuan-Chuan and Chang, Ming-Ching},
        title = {{SoREL: Soft-Label Refurbishment with Ensemble Learning for Noisy Long-Tailed Classification Supplementary Material}},
        booktitle = {CVPR Findings},
        year = 2026,
        month = jun,
        url = {https://www.merl.com/publications/TR2026-074}
      }

- Danrui Li, Jiahao Zhang, Bernhard Egger, Moitreya Chatterjee, Suhas Lohit, Tim K. Marks, and Anoop Cherian, "AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2026. (TR2026-076)

      @inproceedings{Li2026jun,
        author = {Li, Danrui and Zhang, Jiahao and Egger, Bernhard and Chatterjee, Moitreya and Lohit, Suhas and Marks, Tim K. and Cherian, Anoop},
        title = {{AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects}},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = 2026,
        month = jun,
        url = {https://www.merl.com/publications/TR2026-076}
      }

- Xinhang Liu, Pedro Miraldo, Suhas Lohit, Huaizu Jiang, Naoko Sawada, Yu-Wing Tai, Chi-Keung Tang, and Moitreya Chatterjee, "Point4Cast: Streaming Dynamic Scene Reconstruction and Forecasting", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2026. (TR2026-077)

      @inproceedings{Liu2026jun,
        author = {Liu, Xinhang and Miraldo, Pedro and Lohit, Suhas and Jiang, Huaizu and Sawada, Naoko and Tai, Yu-Wing and Tang, Chi-Keung and Chatterjee, Moitreya},
        title = {{Point4Cast: Streaming Dynamic Scene Reconstruction and Forecasting}},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = 2026,
        month = jun,
        url = {https://www.merl.com/publications/TR2026-077}
      }

- Tianye Ding, Yiming Xie, Yiqing Liang, Moitreya Chatterjee, Pedro Miraldo, and Huaizu Jiang, "LASER: Layer-wise Scale Alignment for Training-Free Streaming 4D Reconstruction", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), May 2026. (TR2026-055)

      @inproceedings{Ding2026may,
        author = {Ding, Tianye and Xie, Yiming and Liang, Yiqing and Chatterjee, Moitreya and Miraldo, Pedro and Jiang, Huaizu},
        title = {{LASER: Layer-wise Scale Alignment for Training-Free Streaming 4D Reconstruction}},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = 2026,
        month = may,
        url = {https://www.merl.com/publications/TR2026-055}
      }

- Lalit Manam and Venu Govindu, "Parallel Rigidity Matters for Bundle Adjustment", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), May 2026. (TR2026-053)

      @inproceedings{Lalit2026may,
        author = {Manam, Lalit and Govindu, Venu},
        title = {{Parallel Rigidity Matters for Bundle Adjustment}},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = 2026,
        month = may,
        url = {https://www.merl.com/publications/TR2026-053}
      }

- Valter Piedade, Lalit Manam, Masashi Yamazaki, and Pedro Miraldo, "Revisiting Monocular SLAM with Spatio-Temporal Scene Modeling", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), May 2026. (TR2026-056)

      @inproceedings{Piedade2026may,
        author = {Piedade, Valter and Manam, Lalit and Yamazaki, Masashi and Miraldo, Pedro},
        title = {{Revisiting Monocular SLAM with Spatio-Temporal Scene Modeling}},
        booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
        year = 2026,
        month = may,
        url = {https://www.merl.com/publications/TR2026-056}
      }

- Anoop Cherian, Radu Corcodel, Siddarth Jain, and Diego Romeres, "LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines", International Conference on Artificial Intelligence and Statistics (AISTATS), May 2026. (TR2026-052)

      @inproceedings{Cherian2026may,
        author = {Cherian, Anoop and Corcodel, Radu and Jain, Siddarth and Romeres, Diego},
        title = {{LLMPhy: Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines}},
        booktitle = {International Conference on Artificial Intelligence and Statistics (AISTATS)},
        year = 2026,
        month = may,
        url = {https://www.merl.com/publications/TR2026-052}
      }


Software & Data Downloads

-

Physics-Aware Assembly of Complex Industrial Objects -

Mitsubishi Electric Research framework for visual SLAM -

Parameter-Identifiable Physical Reasoning Combining Large Language Models and Physics Engines -

MMHOI Dataset: Modeling Complex 3D Multi-Human Multi-Object Interactions -

Embracing Cacophony -

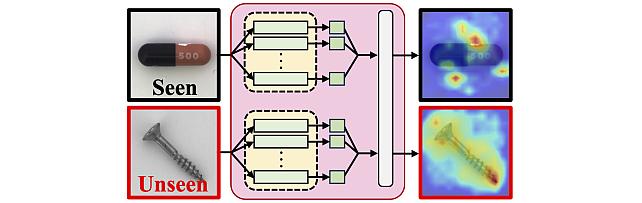

Subject- and Dataset-Aware Neural Field for HRTF Modeling -

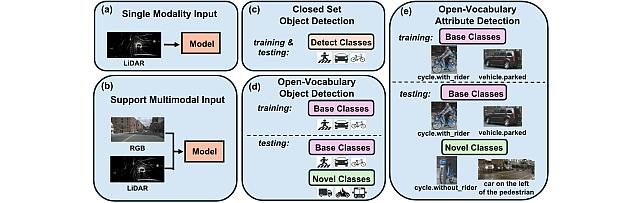

Open Vocabulary Attribute Detection Dataset -

Long-Tailed Online Anomaly Detection dataset -

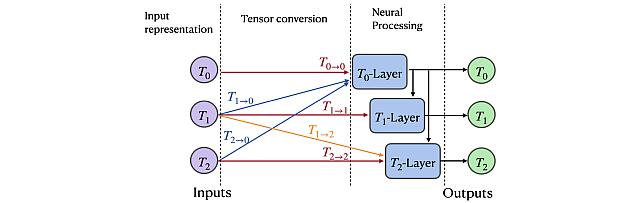

Group Representation Networks -

Task-Aware Unified Source Separation -

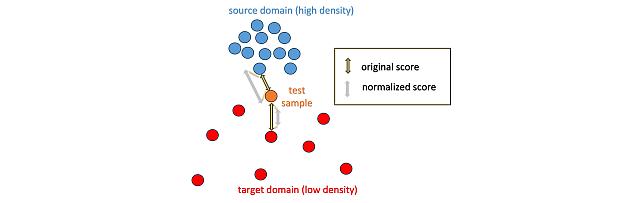

Local Density-Based Anomaly Score Normalization for Domain Generalization -

Retrieval-Augmented Neural Field for HRTF Upsampling and Personalization -

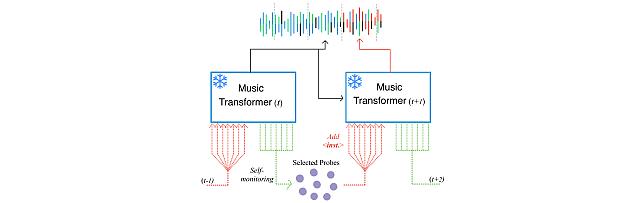

Self-Monitored Inference-Time INtervention for Generative Music Transformers -

MEL-PETs Defense for LLM Privacy Challenge -

MEL-PETs Joint-Context Attack for LLM Privacy Challenge -

Transformer-based model with LOcal-modeling by COnvolution -

Sound Event Bounding Boxes -

Enhanced Reverberation as Supervision -

Zero-Shot Image Conditioning for Text-to-Video Diffusion Models -

Gear Extensions of Neural Radiance Fields -

Long-Tailed Anomaly Detection Dataset -

Neural IIR Filter Field for HRTF Upsampling and Personalization -

Target-Speaker SEParation -

Pixel-Grounded Prototypical Part Networks -

Steered Diffusion -

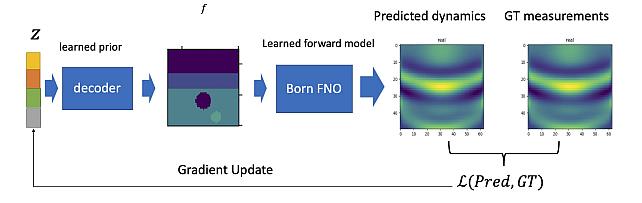

Learned Born Operator for Reflection Tomographic Imaging -

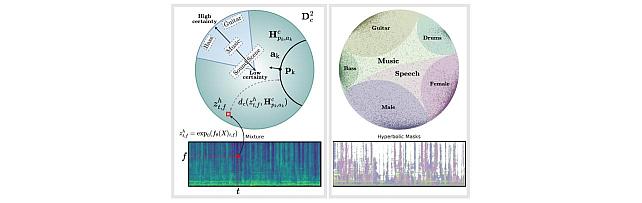

Hyperbolic Audio Source Separation -

Simple Multimodal Algorithmic Reasoning Task Dataset -

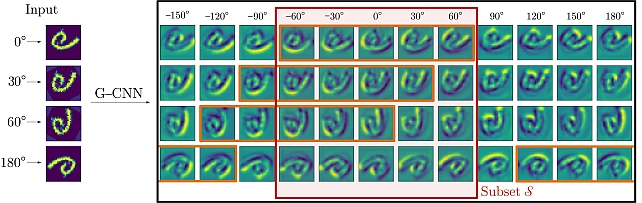

Partial Group Convolutional Neural Networks -

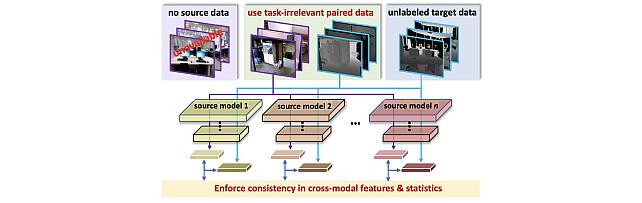

SOurce-free Cross-modal KnowledgE Transfer -

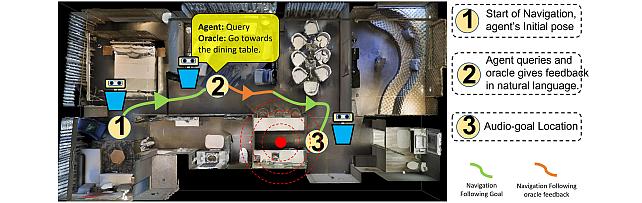

Audio-Visual-Language Embodied Navigation in 3D Environments -

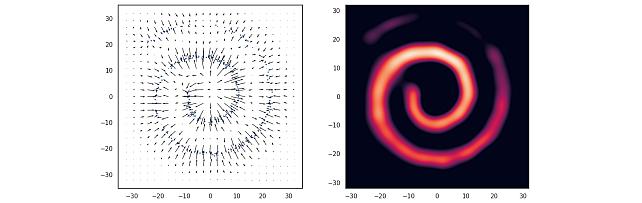

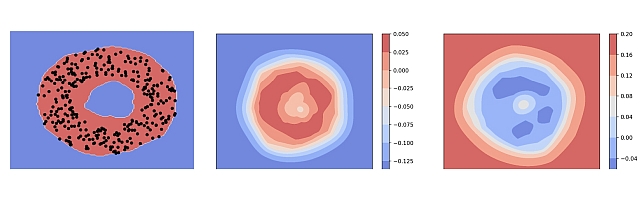

Nonparametric Score Estimators -

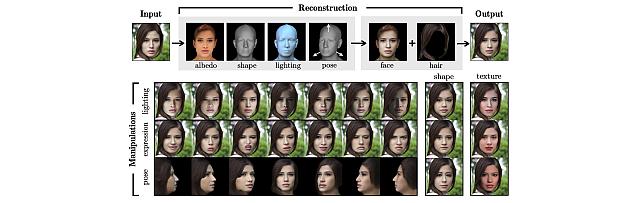

3D MOrphable STyleGAN -

Instance Segmentation GAN -

Audio Visual Scene-Graph Segmentor -

Generalized One-class Discriminative Subspaces -

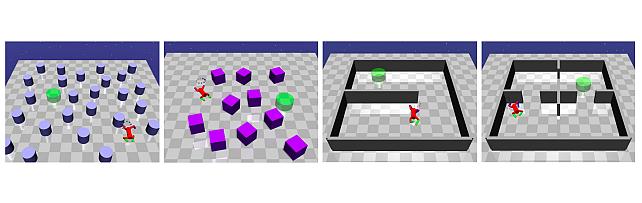

Goal directed RL with Safety Constraints -

Hierarchical Musical Instrument Separation -

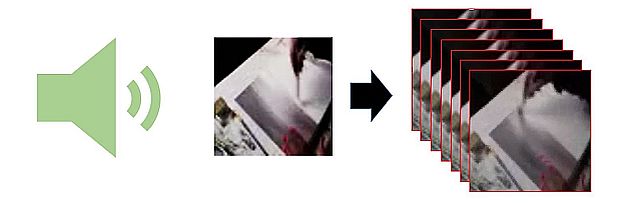

Generating Visual Dynamics from Sound and Context -

Adversarially-Contrastive Optimal Transport -

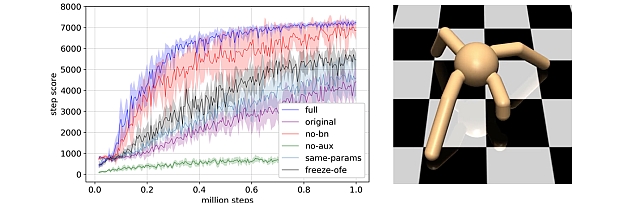

Online Feature Extractor Network -

MotionNet -

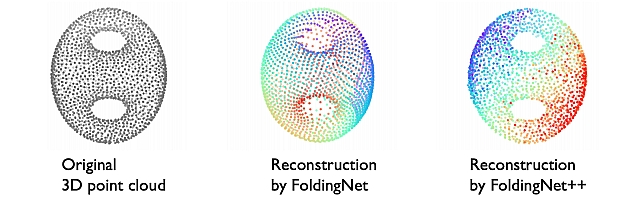

FoldingNet++ -

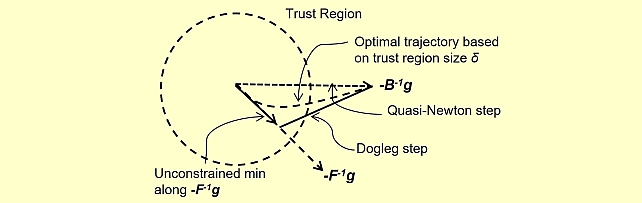

Quasi-Newton Trust Region Policy Optimization -

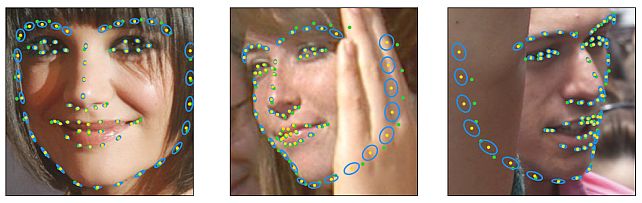

Landmarks’ Location, Uncertainty, and Visibility Likelihood -

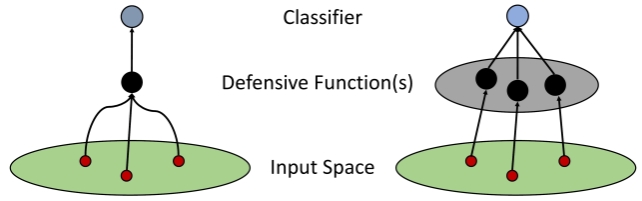

Robust Iterative Data Estimation -

Gradient-based Nikaido-Isoda -

Discriminative Subspace Pooling -

Understanding Dynamic Compute Allocation in Recurrent Transformers

-