Computational Sensing

Utilizing computation to improve sensing capabilities.

Our research in the area of computational sensing focuses on signal acquisition and design, signal modeling and reconstruction algorithms, including inverse problems, as well as array signal processing techniques.

Researchers

- Petros T. Boufounos
- Hassan Mansour
- Pu (Perry) Wang
- Dehong Liu
- Philip V. Orlik
- Toshiaki Koike-Akino
- Joshua Rapp
- Yanting Ma
- Kieran Parsons
- Saviz Mowlavi
- Ye Wang
- Anthony Vetro
- Wael H. Ali
- Jianlin Guo
- Radu Corcodel
- Vedang M. Deshpande
- Siddarth Jain
- Suhas Lohit
- Pedro Miraldo
- Kuan-Chuan Peng
- Abraham P. Vinod
- Kenji Inomata
- Tsubasa Terada

-

Awards

-

AWARD MERL’s Paper on Wi-Fi Sensing Earns Top 3% Paper Recognition at ICASSP 2023, Selected as a Best Student Paper Award Finalist

Date: June 9, 2023

Awarded to: Cristian J. Vaca-Rubio, Pu Wang, Toshiaki Koike-Akino, Ye Wang, Petros Boufounos and Petar Popovski

MERL Contacts: Petros T. Boufounos; Toshiaki Koike-Akino; Pu (Perry) Wang; Ye Wang

Research Areas: Artificial Intelligence, Communications, Computational Sensing, Dynamical Systems, Machine Learning, Signal Processing

Brief: A MERL paper on Wi-Fi sensing was recognized as a Top 3% Paper among all 2709 accepted papers at the 2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2023). Co-authored by Cristian Vaca-Rubio and Petar Popovski from Aalborg University, Denmark, and MERL researchers Pu Wang, Toshiaki Koike-Akino, Ye Wang, and Petros Boufounos, the paper "MmWave Wi-Fi Trajectory Estimation with Continuous-Time Neural Dynamic Learning" was also a Best Student Paper Award finalist.

Performed during Cristian’s stay at MERL first as a visiting Marie Skłodowska-Curie Fellow and then as a full-time intern in 2022, this work capitalizes on standards-compliant Wi-Fi signals to perform indoor localization and sensing. The paper uses a neural dynamic learning framework to address technical issues such as low sampling rate and irregular sampling intervals.

ICASSP, a flagship conference of the IEEE Signal Processing Society (SPS), was hosted on the Greek island of Rhodes from June 4 to June 10, 2023. ICASSP 2023 was the largest ICASSP in history, with over 4000 participants and 6128 submitted papers, of which 2709 were accepted.


AWARD Joshua Rapp wins Best Dissertation Award from the IEEE Signal Processing Society

Date: December 20, 2021

Awarded to: Joshua Rapp

MERL Contact: Joshua Rapp

Research Areas: Computational Sensing, Signal Processing

Brief: Joshua Rapp has won the 2021 Best PhD Dissertation Award from the IEEE Signal Processing Society.

The award recognizes a PhD thesis on a signal processing subject, completed within the past three years, for its relevant contributions to signal processing and for stimulating further research in the field.

Dr. Rapp completed his PhD at Boston University in 2020 with a thesis entitled "Probabilistic Modeling for Single-Photon Lidar." The dissertation tackles challenges of the acquisition and processing of 3D depth maps reconstructed from time-of-flight data captured one photon at a time.

The award will be presented at the 2022 IEEE International Conference on Image Processing (ICIP) in France.


AWARD Petros Boufounos Elevated to IEEE Fellow

Date: January 1, 2022

Awarded to: Petros T. Boufounos

MERL Contact: Petros T. Boufounos

Research Areas: Computational Sensing, Signal Processing

Brief: MERL’s Petros Boufounos has been elevated to IEEE Fellow, effective January 2022, for “contributions to compressed sensing.”

IEEE Fellow is the highest grade of membership of the IEEE. It honors members with an outstanding record of technical achievements, contributing importantly to the advancement or application of engineering, science and technology, and bringing significant value to society. Each year, following a rigorous evaluation procedure, the IEEE Fellow Committee recommends a select group of recipients for elevation to IEEE Fellow. Less than 0.1% of voting members are selected annually for this member grade elevation.


See All Awards for Computational Sensing

News & Events

-

EVENT MERL Contributes to ICASSP 2026

Date: Monday, May 4, 2026 - Friday, May 8, 2026

Location: Barcelona, Spain

MERL Contacts: Wael H. Ali; Petros T. Boufounos; Chiori Hori; Jonathan Le Roux; Yanting Ma; Hassan Mansour; Yoshiki Masuyama; Joshua Rapp; Anthony Vetro; Pu (Perry) Wang; Gordon Wichern

Research Areas: Artificial Intelligence, Computational Sensing, Computer Vision, Machine Learning, Optimization, Signal Processing, Speech & Audio

Brief: MERL has made numerous contributions to both the organization and technical program of ICASSP 2026, which is being held in Barcelona, Spain from May 4-8, 2026.

Sponsorship

MERL is proud to be a Silver Patron of the conference and will participate in the student job fair on Thursday, May 7. Please join this session to learn more about employment opportunities at MERL, including openings for research scientists, post-docs, and interns. MERL Distinguished Research Scientists Petros T. Boufounos and Jonathan Le Roux will also present a spotlight session on MERL’s research in signal processing on Tuesday, May 5 at 13:05. Finally, MERL will sponsor a photo booth on Thursday, May 7 and Friday, May 8, where ICASSP participants can take professional photos with friends and colleagues, which will be emailed to them.

MERL is also pleased to be the sponsor of two IEEE Awards that will be presented at the conference. We congratulate Prof. Nasir Ahmed, the recipient of the 2026 IEEE Fourier Award for Signal Processing, and Dr. Alex Acero, the recipient of the 2026 IEEE James L. Flanagan Speech and Audio Processing Award.

Technical Program

MERL is presenting 8 papers in the main conference on a wide range of topics including source separation, spatial audio, neural audio codecs, radar-based pose estimation, camera-based airflow sensing, radar array processing, and optimization. Another paper on neural speech codecs will be presented at the Low-Resource Audio Codec (LRAC) Satellite Workshop. MERL researchers will also present two articles published in IEEE Open Journal of Signal Processing (OJSP) on music source separation and head-related transfer function (HRTF) modeling. Finally, Speech and Audio Team members Yoshiki Masuyama and Jonathan Le Roux co-organized a Special Session on Neural Spatial Audio Processing, which will feature six oral presentations.

About ICASSP

ICASSP is the flagship conference of the IEEE Signal Processing Society, and the world's largest and most comprehensive technical conference focused on the research advances and latest technological development in signal and information processing. The event attracts more than 4000 participants each year.


NEWS MERL researchers at NeurIPS 2025 presented 2 conference papers and 5 workshop papers, and organized a workshop.

Date: December 2, 2025 - December 7, 2025

Where: San Diego

MERL Contacts: Petros T. Boufounos; Anoop Cherian; Radu Corcodel; Stefano Di Cairano; Chiori Hori; Christopher R. Laughman; Suhas Lohit; Pedro Miraldo; Saviz Mowlavi; Kuan-Chuan Peng; Arvind Raghunathan; Abraham P. Vinod; Pu (Perry) Wang

Research Areas: Artificial Intelligence, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio

Brief: MERL researchers presented 2 main-conference papers and 5 workshop papers, and organized a workshop, at NeurIPS 2025.

Main Conference Papers:

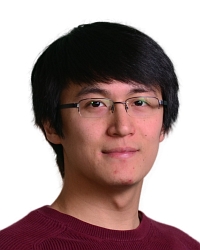

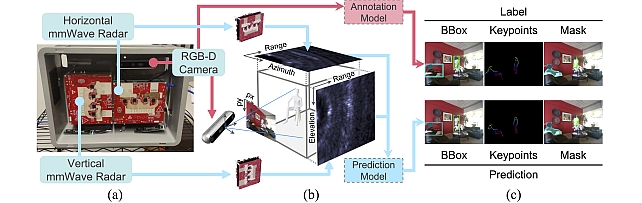

1) Sorachi Kato, Ryoma Yataka, Pu Wang, Pedro Miraldo, Takuya Fujihashi, and Petros Boufounos, "RAPTR: Radar-based 3D Pose Estimation using Transformer", Code available at: https://github.com/merlresearch/radar-pose-transformer

2) Runyu Zhang, Arvind Raghunathan, Jeff Shamma, and Na Li, "Constrained Optimization From a Control Perspective via Feedback Linearization"

Workshop Papers:

1) Yuyou Zhang, Radu Corcodel, Chiori Hori, Anoop Cherian, and Ding Zhao, "SpinBench: Perspective and Rotation as a Lens on Spatial Reasoning in VLMs", NeurIPS 2025 Workshop on SPACE in Vision, Language, and Embodied AI (SpaVLE) (Best Paper Runner-up)

2) Xiaoyu Xie, Saviz Mowlavi, and Mouhacine Benosman, "Smooth and Sparse Latent Dynamics in Operator Learning with Jerk Regularization", Workshop on Machine Learning and the Physical Sciences (ML4PS)

3) Spencer Hutchinson, Abraham Vinod, François Germain, Stefano Di Cairano, Christopher Laughman, and Ankush Chakrabarty, "Quantile-SMPC for Grid-Interactive Buildings with Multivariate Temporal Fusion Transformers", Workshop on UrbanAI: Harnessing Artificial Intelligence for Smart Cities (UrbanAI)

4) Yuki Shirai, Kei Ota, Devesh Jha, and Diego Romeres, "Sim-to-Real Contact-Rich Pivoting via Optimization-Guided RL with Vision and Touch", Workshop on Embodied World Models for Decision Making

5) Mark Van der Merwe and Devesh Jha, "In-Context Policy Iteration for Dynamic Manipulation", Workshop on Embodied World Models for Decision Making

Workshop Organized:

MERL members co-organized the Multimodal Algorithmic Reasoning (MAR) Workshop (https://marworkshop.github.io/neurips25/). Organizers: Anoop Cherian (Mitsubishi Electric Research Laboratories), Kuan-Chuan Peng (Mitsubishi Electric Research Laboratories), Suhas Lohit (Mitsubishi Electric Research Laboratories), Honglu Zhou (Salesforce AI Research), Kevin Smith (Massachusetts Institute of Technology), and Joshua B. Tenenbaum (Massachusetts Institute of Technology).


See All News & Events for Computational Sensing

Research Highlights

-

Internships

See All Internships for Computational Sensing

Openings

See All Openings at MERL

Recent Publications

- Kevin Tandi, Wael H. Ali, Joshua Rapp, and Hassan Mansour, "Single View Camera-Based Dynamic Airflow Sensing", IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), May 2026. TR2026-038.

@inproceedings{Tandi2026may,
  author = {Tandi, Kevin and Ali, Wael H. and Rapp, Joshua and Mansour, Hassan},
  title = {{Single View Camera-Based Dynamic Airflow Sensing}},
  booktitle = {IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP)},
  year = 2026,
  month = may,
  url = {https://www.merl.com/publications/TR2026-038}
}

- Sorachi Kato, Pu Wang, Takuya Fujihashi, and Andrew Markham, "Heatmap-to-SMPL Multi-View Radar Transformer for Multi-Person 3D Pose Estimation", IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), May 2026. TR2026-040.

@inproceedings{Kato2026may,
  author = {Kato, Sorachi and Wang, Pu and Fujihashi, Takuya and Markham, Andrew},
  title = {{Heatmap-to-SMPL Multi-View Radar Transformer for Multi-Person 3D Pose Estimation}},
  booktitle = {IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP)},
  year = 2026,
  month = may,
  url = {https://www.merl.com/publications/TR2026-040}
}

- Ruangrawee Kitichotkul, Joshua Rapp, Yanting Ma, and Hassan Mansour, "Unambiguous Range Extension for Doppler Single-Photon Lidar", Optics Express, DOI: 10.1364/OE.592528, Vol. 34, No. 9, pp. 15933-15952, May 2026. TR2026-050.

@article{Kitichotkul2026apr,
  author = {Kitichotkul, Ruangrawee and Rapp, Joshua and Ma, Yanting and Mansour, Hassan},
  title = {{Unambiguous range extension for Doppler single-photon lidar}},
  journal = {Optics Express},
  year = 2026,
  volume = 34,
  number = 9,
  pages = {15933--15952},
  month = apr,
  doi = {10.1364/OE.592528},
  url = {https://www.merl.com/publications/TR2026-050}
}

- Ryuhei Takahashi, Hassan Mansour, and Petros T. Boufounos, "DUAL-REGULARIZED ITERATIVE ADAPTIVE APPROACH FOR DOA SPECTRUM RECONSTRUCTION IN LIMITED ANGLE SECTOR", IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), May 2026. TR2026-039.

@inproceedings{Takahashi2026may,
  author = {Takahashi, Ryuhei and Mansour, Hassan and Boufounos, Petros T.},
  title = {{DUAL-REGULARIZED ITERATIVE ADAPTIVE APPROACH FOR DOA SPECTRUM RECONSTRUCTION IN LIMITED ANGLE SECTOR}},
  booktitle = {IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP)},
  year = 2026,
  month = may,
  url = {https://www.merl.com/publications/TR2026-039}
}

- Haoyu Zhang, Yanting Ma, Ruangrawee Kitichotkul, Joshua Rapp, and Petros T. Boufounos, "ProxiCBO: A Consensus-based Method for Composite Optimization", IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), May 2026. TR2026-041.

@inproceedings{Zhang2026may,
  author = {Zhang, Haoyu and Ma, Yanting and Kitichotkul, Ruangrawee and Rapp, Joshua and Boufounos, Petros T.},
  title = {{ProxiCBO: A Consensus-based Method for Composite Optimization}},
  booktitle = {IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP)},
  year = 2026,
  month = may,
  url = {https://www.merl.com/publications/TR2026-041}
}

- Joshua Rapp, Ruangrawee Kitichotkul, Yanting Ma, and Hassan Mansour, "Velocity estimation with single-photon lidar", SPIE Conference on Advanced Photon Counting Techniques, April 2026. TR2026-051.

@inproceedings{Rapp2026apr,
  author = {Rapp, Joshua and Kitichotkul, Ruangrawee and Ma, Yanting and Mansour, Hassan},
  title = {{Velocity estimation with single-photon lidar}},
  booktitle = {SPIE Conference on Advanced Photon Counting Techniques},
  year = 2026,
  month = apr,
  url = {https://www.merl.com/publications/TR2026-051}
}

- Zhuoyuan Wang, Hanjiang Hu, Xiyu Deng, Saviz Mowlavi, and Yorie Nakahira, "OpInf-LLM: Parametric PDE Solving with LLMs via Operator Inference", International Conference on Learning Representations (ICLR) Workshop on AI and Partial Differential Equations (AI&PDE), April 2026. TR2026-043.

@inproceedings{Wang2026apr2,
  author = {Wang, Zhuoyuan and Hu, Hanjiang and Deng, Xiyu and Mowlavi, Saviz and Nakahira, Yorie},
  title = {{OpInf-LLM: Parametric PDE Solving with LLMs via Operator Inference}},
  booktitle = {International Conference on Learning Representations (ICLR) Workshop on AI and Partial Differential Equations (AI\&PDE)},
  year = 2026,
  month = apr,
  url = {https://www.merl.com/publications/TR2026-043}
}

- Zhuoyuan Wang, Raffaele Romagnoli, Saviz Mowlavi, and Yorie Nakahira, "Physics-Informed Deep B-Spline Networks", International Conference on Learning Representations (ICLR) Workshop on AI and Partial Differential Equations (AI&PDE), April 2026. TR2026-046.

@inproceedings{Wang2026apr3,
  author = {Wang, Zhuoyuan and Romagnoli, Raffaele and Mowlavi, Saviz and Nakahira, Yorie},
  title = {{Physics-Informed Deep B-Spline Networks}},
  booktitle = {International Conference on Learning Representations (ICLR) Workshop on AI and Partial Differential Equations (AI\&PDE)},
  year = 2026,
  month = apr,
  url = {https://www.merl.com/publications/TR2026-046}
}


-

Videos

-

Software & Data Downloads

-

Radar-based 3D Pose Estimation using Transformer -

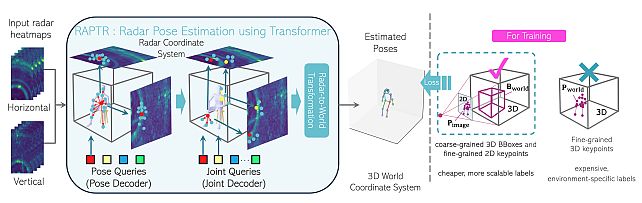

multi-view Radar object dEtection with 3D bounding boX diffusiOn -

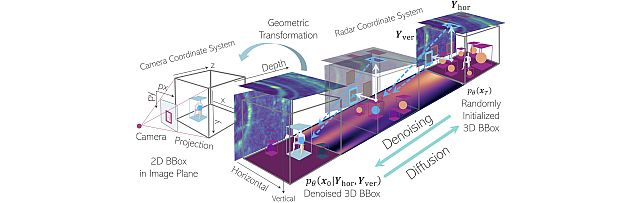

Radar dEtection TRansformer -

Millimeter-wave Multi-View Radar Dataset -

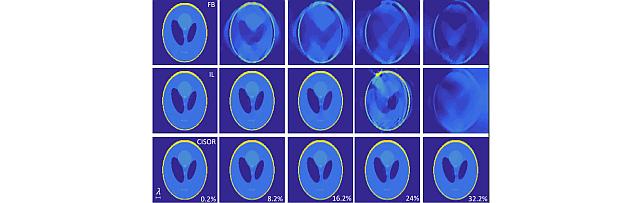

Convergent Inverse Scattering using Optimization and Regularization -

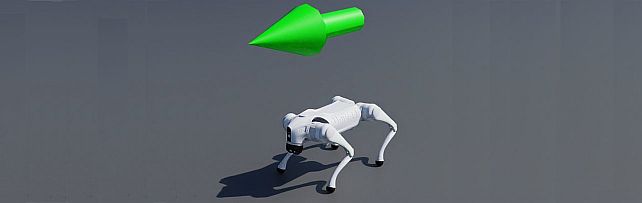

Generalization in Deep RL with a Robust Adaptation Module -

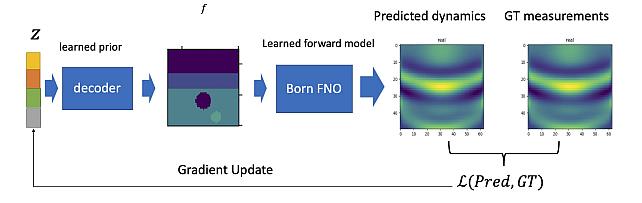

Learned Born Operator for Reflection Tomographic Imaging

-