Suhas Lohit

- Phone: 617-621-7569

Position:

Research / Technical Staff

Principal Research Scientist

Education:

Ph.D., Arizona State University, 2019

Biography

Before joining MERL, Suhas interned at MERL (summer 2018), SRI International (summer 2017), and Nvidia (summer 2016). His research interests include computer vision, computational imaging, and deep learning. Recently, his research has focused on hybrid model- and data-driven neural architectures for applications in imaging and vision. He won the Best Paper Award at the CVPR Workshop on Computational Cameras and Displays in 2015 and the University Graduate Fellowship at ASU for 2015-16.

Recent News & Events

NEWS: MERL Presents 7 Papers and 2 Workshops at CVPR 2026

Date: June 3, 2026 - June 7, 2026

Where: Colorado Convention Center, Denver, Colorado

MERL Contacts: Moitreya Chatterjee; Anoop Cherian; Kaen Kogashi; Suhas Lohit; Lalit Manam; Tim K. Marks; Pedro Miraldo; Kuan-Chuan Peng

Research Areas: Artificial Intelligence, Computer Vision, Machine Learning

Brief: MERL researchers are proud to present 7 papers, including two highlight papers (top 3.6% of submissions), and 2 workshops at CVPR 2026. CVPR, taking place from June 3-7 in Denver, CO, USA, is a premier international conference in computer vision.

Papers with MERL Authors:

1. Point4Cast: Streaming Dynamic Scene Reconstruction and Forecasting by Xinhang Liu, Pedro Miraldo, Suhas Lohit, Huaizu Jiang, Naoko Sawada, Yu-Wing Tai, Chi-Keung Tang, and Moitreya Chatterjee (Highlight Paper)

2. Parallel Rigidity Matters for Bundle Adjustment by Lalit Manam and Venu Govindu (Highlight Paper)

3. Revisiting Monocular SLAM with Spatio-Temporal Scene Modeling by Valter Piedade, Lalit Manam, Masashi Yamazaki, and Pedro Miraldo

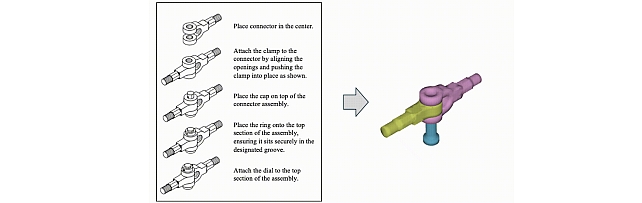

4. AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects by Danrui Li, Jiahao Zhang, Bernhard Egger, Moitreya Chatterjee, Suhas Lohit, Tim K. Marks, and Anoop Cherian

5. LASER: Layer-wise Scale Alignment for Training-Free Streaming 4D Reconstruction by Tianye Ding, Yiming Xie, Yiqing Liang, Moitreya Chatterjee, Pedro Miraldo, and Huaizu Jiang

6. SoREL: Soft-Label Refurbishment with Ensemble Learning for Noisy Long-Tailed Classification by Jun-Wei Hsieh, Ying-Hsuan Wu, Yi-Kuan Hsieh, Xin Li, Kuan-Chuan Peng, and Ming-Ching Chang (CVPR Findings paper)

7. MMHOI: Complex 3D Multi-Human-Object Interaction Understanding by Kaen Kogashi and Anoop Cherian (PhysHuman Workshop paper)

Workshops Co-Organized by MERL:

1. Multimodal Algorithmic Reasoning Workshop by Anoop Cherian, Suhas Lohit, Kuan-Chuan Peng, Honglu Zhou, Kevin Smith, and Josh Tenenbaum

2. The Third Workshop on Anomaly Detection with Foundation Models by Kuan-Chuan Peng, Ying Zhao, and Abhishek Aich

NEWS: MERL Researchers at NeurIPS 2025 Presented 2 Conference Papers and 5 Workshop Papers, and Organized a Workshop

Date: December 2, 2025 - December 7, 2025

Where: San Diego

MERL Contacts: Petros T. Boufounos; Anoop Cherian; Radu Corcodel; Stefano Di Cairano; Chiori Hori; Christopher R. Laughman; Suhas Lohit; Pedro Miraldo; Saviz Mowlavi; Kuan-Chuan Peng; Arvind Raghunathan; Abraham P. Vinod; Pu (Perry) Wang

Research Areas: Artificial Intelligence, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio

Brief: MERL researchers presented 2 main-conference papers and 5 workshop papers, and organized a workshop, at NeurIPS 2025.

Main Conference Papers:

1) Sorachi Kato, Ryoma Yataka, Pu Wang, Pedro Miraldo, Takuya Fujihashi, and Petros Boufounos, "RAPTR: Radar-based 3D Pose Estimation using Transformer". Code available at: https://github.com/merlresearch/radar-pose-transformer

2) Runyu Zhang, Arvind Raghunathan, Jeff Shamma, and Na Li, "Constrained Optimization From a Control Perspective via Feedback Linearization"

Workshop Papers:

1) Yuyou Zhang, Radu Corcodel, Chiori Hori, Anoop Cherian, and Ding Zhao, "SpinBench: Perspective and Rotation as a Lens on Spatial Reasoning in VLMs", NeurIPS 2025 Workshop on SPACE in Vision, Language, and Embodied AI (SpaVLE) (Best Paper Runner-up)

2) Xiaoyu Xie, Saviz Mowlavi, and Mouhacine Benosman, "Smooth and Sparse Latent Dynamics in Operator Learning with Jerk Regularization", Workshop on Machine Learning and the Physical Sciences (ML4PS)

3) Spencer Hutchinson, Abraham Vinod, François Germain, Stefano Di Cairano, Christopher Laughman, and Ankush Chakrabarty, "Quantile-SMPC for Grid-Interactive Buildings with Multivariate Temporal Fusion Transformers", Workshop on UrbanAI: Harnessing Artificial Intelligence for Smart Cities (UrbanAI)

4) Yuki Shirai, Kei Ota, Devesh Jha, and Diego Romeres, "Sim-to-Real Contact-Rich Pivoting via Optimization-Guided RL with Vision and Touch", Workshop on Embodied World Models for Decision Making

5) Mark Van der Merwe and Devesh Jha, "In-Context Policy Iteration for Dynamic Manipulation", Workshop on Embodied World Models for Decision Making

Workshop Organized:

MERL members co-organized the Multimodal Algorithmic Reasoning (MAR) Workshop (https://marworkshop.github.io/neurips25/). Organizers: Anoop Cherian (Mitsubishi Electric Research Laboratories), Kuan-Chuan Peng (Mitsubishi Electric Research Laboratories), Suhas Lohit (Mitsubishi Electric Research Laboratories), Honglu Zhou (Salesforce AI Research), Kevin Smith (Massachusetts Institute of Technology), and Joshua B. Tenenbaum (Massachusetts Institute of Technology).

Awards

AWARD: Best Paper - Honorable Mention Award at WACV 2021

Date: January 6, 2021

Awarded to: Rushil Anirudh, Suhas Lohit, Pavan Turaga

MERL Contact: Suhas Lohit

Research Areas: Computational Sensing, Computer Vision, Machine Learning

Brief: A team of researchers from Mitsubishi Electric Research Laboratories (MERL), Lawrence Livermore National Laboratory (LLNL) and Arizona State University (ASU) received the Best Paper Honorable Mention Award at WACV 2021 for their paper "Generative Patch Priors for Practical Compressive Image Recovery".

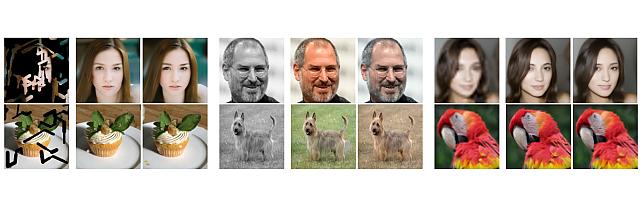

The paper proposes a novel model of natural images as a composition of small patches which are obtained from a deep generative network. This is unlike prior approaches where the networks attempt to model image-level distributions and are unable to generalize outside training distributions. The key idea in this paper is that learning patch-level statistics is far easier. As the authors demonstrate, this model can then be used to efficiently solve challenging inverse problems in imaging such as compressive image recovery and inpainting even from very few measurements for diverse natural scenes.

Research Highlights

AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects -

Point4Cast: Streaming Dynamic Scene Reconstruction and Forecasting -

TI2V-Zero: Zero-Shot Image Conditioning for Text-to-Video Diffusion Models -

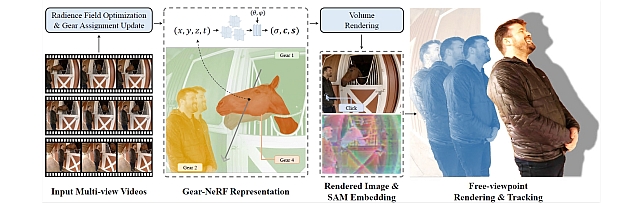

Gear-NeRF: Free-Viewpoint Rendering and Tracking with Motion-Aware Spatio-Temporal Sampling -

Steered Diffusion

MERL Publications

- Danrui Li, Jiahao Zhang, Bernhard Egger, Moitreya Chatterjee, Suhas Lohit, Tim K. Marks, and Anoop Cherian, "AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects", arXiv, May 2026.

    @article{Li2026may,
      author = {Li, Danrui and Zhang, Jiahao and Egger, Bernhard and Chatterjee, Moitreya and Lohit, Suhas and Marks, Tim K. and Cherian, Anoop},
      title = {{AssemblyBench: Physics-Aware Assembly of Complex Industrial Objects}},
      journal = {arXiv},
      year = 2026,
      month = may,
      url = {https://arxiv.org/abs/2605.12845}
    }

- Vineet Shenoy, Suhas Lohit, Hassan Mansour, Rama Chellappa, and Tim K. Marks, "Recovering Pulse Waves from Video Using Deep Unrolling and Deep Equilibrium Models", IEEE Transactions on Image Processing, DOI: 10.1109/TIP.2026.3671653, Vol. 35, pp. 2755-2770, March 2026. TR2026-031.

    @article{Shenoy2026mar,
      author = {Shenoy, Vineet and Lohit, Suhas and Mansour, Hassan and Chellappa, Rama and Marks, Tim K.},
      title = {{Recovering Pulse Waves from Video Using Deep Unrolling and Deep Equilibrium Models}},
      journal = {IEEE Transactions on Image Processing},
      year = 2026,
      volume = 35,
      pages = {2755--2770},
      month = mar,
      doi = {10.1109/TIP.2026.3671653},
      issn = {1941-0042},
      url = {https://www.merl.com/publications/TR2026-031}
    }

- Ibraheem Muhammad Moosa, Suhas Lohit, Ye Wang, Moitreya Chatterjee, and Wenpeng Yin, "Understanding Dynamic Compute Allocation in Recurrent Transformers", arXiv, February 2026.

    @article{Moosa2026feb,
      author = {Moosa, Ibraheem Muhammad and Lohit, Suhas and Wang, Ye and Chatterjee, Moitreya and Yin, Wenpeng},
      title = {{Understanding Dynamic Compute Allocation in Recurrent Transformers}},
      journal = {arXiv},
      year = 2026,
      month = feb,
      url = {https://arxiv.org/abs/2602.08864}
    }

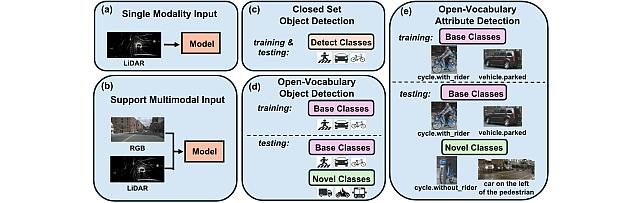

- Xinhao Xiang, Kuan-Chuan Peng, Suhas Lohit, Michael J. Jones, and Jiawei Zhang, "Towards Open-Vocabulary Multimodal 3D Object Detection with Attributes", British Machine Vision Conference (BMVC), November 2025. TR2025-162.

    @inproceedings{Xiang2025nov,
      author = {Xiang, Xinhao and Peng, Kuan-Chuan and Lohit, Suhas and Jones, Michael J. and Zhang, Jiawei},
      title = {{Towards Open-Vocabulary Multimodal 3D Object Detection with Attributes}},
      booktitle = {British Machine Vision Conference (BMVC)},
      year = 2025,
      month = nov,
      url = {https://www.merl.com/publications/TR2025-162}
    }

- Vineet Shenoy, Shaoju Wu, Armand Comas, Suhas Lohit, Hassan Mansour, and Tim K. Marks, "Time-Series U-Net with Recurrence for Noise-Robust Imaging Photoplethysmography", IEEE Access, DOI: 10.1109/ACCESS.2025.3617284, Vol. 13, pp. 173923-173938, October 2025. TR2025-145.

    @article{Shenoy2025oct,
      author = {Shenoy, Vineet and Wu, Shaoju and Comas, Armand and Lohit, Suhas and Mansour, Hassan and Marks, Tim K.},
      title = {{Time-Series U-Net with Recurrence for Noise-Robust Imaging Photoplethysmography}},
      journal = {IEEE Access},
      year = 2025,
      volume = 13,
      pages = {173923--173938},
      month = oct,
      doi = {10.1109/ACCESS.2025.3617284},
      url = {https://www.merl.com/publications/TR2025-145}
    }

Other Publications

- Suhas Lohit, Qiao Wang, and Pavan Turaga, "Temporal Transformer Networks: Joint Learning of Invariant and Discriminative Time Warping", Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2019, pp. 12426-12435.

    @Inproceedings{lohit2019temporal,
      author = {Lohit, Suhas and Wang, Qiao and Turaga, Pavan},
      title = {Temporal Transformer Networks: Joint Learning of Invariant and Discriminative Time Warping},
      booktitle = {Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},
      year = 2019,
      pages = {12426--12435}
    }

- Suhas Lohit, Kuldeep Kulkarni, Ronan Kerviche, Pavan Turaga, and Amit Ashok, "Convolutional neural networks for noniterative reconstruction of compressively sensed images", IEEE Transactions on Computational Imaging, Vol. 4, No. 3, pp. 326-340, 2018.

    @Article{lohit2018convolutional,
      author = {Lohit, Suhas and Kulkarni, Kuldeep and Kerviche, Ronan and Turaga, Pavan and Ashok, Amit},
      title = {Convolutional neural networks for noniterative reconstruction of compressively sensed images},
      journal = {IEEE Transactions on Computational Imaging},
      year = 2018,
      volume = 4,
      number = 3,
      pages = {326--340},
      publisher = {IEEE}
    }

- Suhas Lohit, Ankan Bansal, Nitesh Shroff, Jaishanker Pillai, Pavan Turaga, and Rama Chellappa, "Predicting Dynamical Evolution of Human Activities from a Single Image", Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, 2018, pp. 383-392.

    @Inproceedings{lohit2018predicting,
      author = {Lohit, Suhas and Bansal, Ankan and Shroff, Nitesh and Pillai, Jaishanker and Turaga, Pavan and Chellappa, Rama},
      title = {Predicting Dynamical Evolution of Human Activities from a Single Image},
      booktitle = {Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops},
      year = 2018,
      pages = {383--392}
    }

- Suhas Lohit and Pavan Turaga, "Learning invariant Riemannian geometric representations using deep nets", Proceedings of the IEEE International Conference on Computer Vision Workshops, 2017, pp. 1329-1338.

    @Inproceedings{lohit2017learning,
      author = {Lohit, Suhas and Turaga, Pavan},
      title = {Learning invariant Riemannian geometric representations using deep nets},
      booktitle = {Proceedings of the IEEE International Conference on Computer Vision Workshops},
      year = 2017,
      pages = {1329--1338}
    }

- Kuldeep Kulkarni, Suhas Lohit, Pavan Turaga, Ronan Kerviche, and Amit Ashok, "ReconNet: Non-iterative reconstruction of images from compressively sensed measurements", Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 449-458.

    @Inproceedings{kulkarni2016reconnet,
      author = {Kulkarni, Kuldeep and Lohit, Suhas and Turaga, Pavan and Kerviche, Ronan and Ashok, Amit},
      title = {{ReconNet}: Non-iterative reconstruction of images from compressively sensed measurements},
      booktitle = {Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition},
      year = 2016,
      pages = {449--458}
    }

- Suhas Lohit, Kuldeep Kulkarni, and Pavan Turaga, "Direct inference on compressive measurements using convolutional neural networks", 2016 IEEE International Conference on Image Processing (ICIP), 2016, pp. 1913-1917.

    @Inproceedings{lohit2016direct,
      author = {Lohit, Suhas and Kulkarni, Kuldeep and Turaga, Pavan},
      title = {Direct inference on compressive measurements using convolutional neural networks},
      booktitle = {2016 IEEE International Conference on Image Processing (ICIP)},
      year = 2016,
      pages = {1913--1917},
      organization = {IEEE}
    }

- Qiao Wang, Suhas Lohit, Meynard John Toledo, Matthew P. Buman, and Pavan Turaga, "A statistical estimation framework for energy expenditure of physical activities from a wrist-worn accelerometer", 2016 38th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), 2016, pp. 2631-2635.

    @Inproceedings{wang2016statistical,
      author = {Wang, Qiao and Lohit, Suhas and Toledo, Meynard John and Buman, Matthew P and Turaga, Pavan},
      title = {A statistical estimation framework for energy expenditure of physical activities from a wrist-worn accelerometer},
      booktitle = {2016 38th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC)},
      year = 2016,
      pages = {2631--2635},
      organization = {IEEE}
    }

- Suhas Lohit, Kuldeep Kulkarni, Pavan Turaga, Jian Wang, and Aswin C. Sankaranarayanan, "Reconstruction-free inference on compressive measurements", Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, 2015, pp. 16-24.

    @Inproceedings{lohit2015reconstruction,
      author = {Lohit, Suhas and Kulkarni, Kuldeep and Turaga, Pavan and Wang, Jian and Sankaranarayanan, Aswin C},
      title = {Reconstruction-free inference on compressive measurements},
      booktitle = {Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops},
      year = 2015,
      pages = {16--24}
    }

Software & Data Downloads

Open Vocabulary Attribute Detection Dataset

Group Representation Networks

Zero-Shot Image Conditioning for Text-to-Video Diffusion Models

Gear Extensions of Neural Radiance Fields

Pixel-Grounded Prototypical Part Networks

Steered Diffusion

Simple Multimodal Algorithmic Reasoning Task Dataset

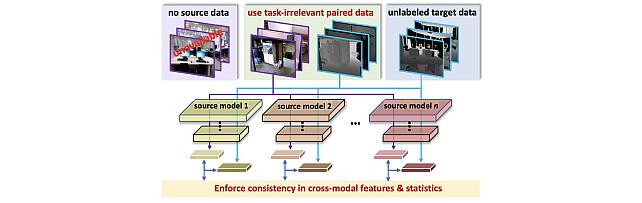

SOurce-free Cross-modal KnowledgE Transfer

Physics-Aware Assembly of Complex Industrial Objects

Understanding Dynamic Compute Allocation in Recurrent Transformers

MERL Issued Patents

Title: "SYSTEMS AND METHODS FOR INTERPRETABLE CLASSIFICATION OF IMAGES USING INHERENTLY EXPLAINABLE NEURAL NETWORKS"

Inventors: Jones, Michael J.; Lohit, Suhas; Cherian, Anoop; Carmichael, Zacharias

Patent No.: 12,633,103

Issue Date: May 19, 2026

Title: "System and Method for Cross-Modal Knowledge Transfer Without Task-Relevant Source Data"

Inventors: Lohit, Suhas; Ahmed, Sk Miraj; Peng, Kuan-Chuan; Jones, Michael J.

Patent No.: 12,511,549

Issue Date: Dec 30, 2025

Title: "Rendering Two-Dimensional Image of a Dynamic Three-Dimensional Scene"

Inventors: Chatterjee, Moitreya; Lohit, Suhas; Miraldo, Pedro

Patent No.: 12,475,636

Issue Date: Nov 18, 2025

Title: "System and Method for Generating a Radar Image of a Scene"

Inventors: Mansour, Hassan; Lohit, Suhas; Boufounos, Petros T.

Patent No.: 12,287,398

Issue Date: Apr 29, 2025

Title: "Systems and Methods for Multi-Spectral Image Fusion Using Unrolled Projected Gradient Descent and Convolutional Neural Network"

Inventors: Liu, Dehong; Lohit, Suhas; Mansour, Hassan; Boufounos, Petros T.

Patent No.: 10,891,527

Issue Date: Jan 12, 2021
