Kuan-Chuan Peng

- Phone: 617-621-7576

- Email:


Position:

Research / Technical Staff

Principal Research Scientist

Education:

Ph.D., Cornell University, 2016

Research Areas:

External Links:


Biography

Before joining MERL, Kuan-Chuan was a Research Scientist (2016-2018) and Staff Scientist (2019) at Siemens Corporate Technology. His PhD research focused on solving abstract tasks in computer vision using convolutional neural networks. In addition to his PhD, he received a bachelor's degree in Electrical Engineering and an MS degree in Computer Science and Information Engineering from National Taiwan University in 2009 and 2012, respectively. His research interests include incremental learning, developing practical solutions given biased or scarce data, and fundamental computer vision and machine learning problems.


Recent News & Events


NEWS: MERL Researchers at NeurIPS 2025 presented 2 conference papers and 5 workshop papers, and organized a workshop.

Date: December 2, 2025 - December 7, 2025

Where: San Diego

MERL Contacts: Petros T. Boufounos; Anoop Cherian; Radu Corcodel; Stefano Di Cairano; Chiori Hori; Christopher R. Laughman; Suhas Lohit; Pedro Miraldo; Saviz Mowlavi; Kuan-Chuan Peng; Arvind Raghunathan; Abraham P. Vinod; Pu (Perry) Wang

Research Areas: Artificial Intelligence, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio

Brief: MERL researchers presented 2 main-conference papers and 5 workshop papers, and organized a workshop, at NeurIPS 2025.

Main Conference Papers:

1) Sorachi Kato, Ryoma Yataka, Pu Wang, Pedro Miraldo, Takuya Fujihashi, and Petros Boufounos, "RAPTR: Radar-based 3D Pose Estimation using Transformer", Code available at: https://github.com/merlresearch/radar-pose-transformer

2) Runyu Zhang, Arvind Raghunathan, Jeff Shamma, and Na Li, "Constrained Optimization From a Control Perspective via Feedback Linearization"

Workshop Papers:

1) Yuyou Zhang, Radu Corcodel, Chiori Hori, Anoop Cherian, and Ding Zhao, "SpinBench: Perspective and Rotation as a Lens on Spatial Reasoning in VLMs", NeurIPS 2025 Workshop on SPACE in Vision, Language, and Embodied AI (SpaVLE) (Best Paper Runner-up)

2) Xiaoyu Xie, Saviz Mowlavi, and Mouhacine Benosman, "Smooth and Sparse Latent Dynamics in Operator Learning with Jerk Regularization", Workshop on Machine Learning and the Physical Sciences (ML4PS)

3) Spencer Hutchinson, Abraham Vinod, François Germain, Stefano Di Cairano, Christopher Laughman, and Ankush Chakrabarty, "Quantile-SMPC for Grid-Interactive Buildings with Multivariate Temporal Fusion Transformers", Workshop on UrbanAI: Harnessing Artificial Intelligence for Smart Cities (UrbanAI)

4) Yuki Shirai, Kei Ota, Devesh Jha, and Diego Romeres, "Sim-to-Real Contact-Rich Pivoting via Optimization-Guided RL with Vision and Touch", Workshop on Embodied World Models for Decision Making

5) Mark Van der Merwe and Devesh Jha, "In-Context Policy Iteration for Dynamic Manipulation", Workshop on Embodied World Models for Decision Making

Workshop Organized:

MERL members co-organized the Multimodal Algorithmic Reasoning (MAR) Workshop (https://marworkshop.github.io/neurips25/). Organizers: Anoop Cherian (Mitsubishi Electric Research Laboratories), Kuan-Chuan Peng (Mitsubishi Electric Research Laboratories), Suhas Lohit (Mitsubishi Electric Research Laboratories), Honglu Zhou (Salesforce AI Research), Kevin Smith (Massachusetts Institute of Technology), and Joshua B. Tenenbaum (Massachusetts Institute of Technology).


NEWS: MERL Papers, Workshops, and Talks at ICCV 2025

Date: October 19, 2025 - October 23, 2025

Where: Honolulu, HI, USA

MERL Contacts: Petros T. Boufounos; Anoop Cherian; Toshiaki Koike-Akino; Hassan Mansour; Tim K. Marks; Pedro Miraldo; Kuan-Chuan Peng; Pu (Perry) Wang

Research Areas: Artificial Intelligence, Computer Vision, Machine Learning, Signal Processing

Brief: MERL researchers presented 3 conference papers and 3 workshop papers, co-organized 2 workshops, and delivered 2 invited talks at the IEEE International Conference on Computer Vision (ICCV) 2025, which was held in Honolulu, HI, USA from October 19-23, 2025. ICCV is one of the most prestigious and competitive international conferences in the area of computer vision. Details of MERL contributions are provided below:

Main Conference Papers:

1. "SAC-GNC: SAmple Consensus for adaptive Graduated Non-Convexity" by V. Piedade, C. Sidhartha, J. Gaspar, V. M. Govindu, and P. Miraldo. (Highlight Paper)

Paper: https://www.merl.com/publications/TR2025-146

2. "Toward Long-Tailed Online Anomaly Detection through Class-Agnostic Concepts" by C.-A. Yang, K.-C. Peng, and R. A. Yeh.

Paper: https://www.merl.com/publications/TR2025-124

3. "Manual-PA: Learning 3D Part Assembly from Instruction Diagrams" by J. Zhang, A. Cherian, C. Rodriguez-Opazo, W. Deng, and S. Gould.

Paper: https://www.merl.com/publications/TR2025-139

MERL Co-Organized Workshops:

1. "The Workshop on Anomaly Detection with Foundation Models (ADFM)" by K.-C. Peng, Y. Zhao, and A. Aich.

Workshop link: https://adfmw.github.io/iccv25/

2. "The 8th International Workshop on Computer Vision for Physiological Measurement (CVPM)" by D. McDuff, W. Wang, S. Stuijk, T. Marks, H. Mansour, and V. R. Shenoy.

Workshop link: https://sstuijk.estue.nl/cvpm/cvpm25/

MERL Keynote Talks at Workshops:

1. Tim K. Marks, Keynote Speaker at the Workshop on Computer Vision for Physiological Measurement (CVPM).

Workshop website: https://vineetrshenoy.github.io/cvpmSeptember2025/

2. Tim K. Marks, Keynote Speaker at the Workshop on Analysis and Modeling of Faces and Gestures (AMFG).

Workshop website: https://fulab.sites.northeastern.edu/amfg2025/

Workshop Papers:

1. "Joint Training of Image Generator and Detector for Road Defect Detection" by K.-C. Peng.

Paper: https://www.merl.com/publications/TR2025-149

2. "Radar-Conditioned 3D Bounding Box Diffusion for Indoor Human Perception" by R. Yataka, P. Wang, P.T. Boufounos, and R. Takahashi.

Paper: https://www.merl.com/publications/TR2025-154

3. "L-GGSC: Learnable Graph-based Gaussian Splatting Compression" by S. Kato, T. Koike-Akino, and T. Fujihashi.

Paper: https://www.merl.com/publications/TR2025-148


Research Highlights


MERL Publications

- "Auto-Vocabulary 3D Object Detection", arXiv, December 2025.

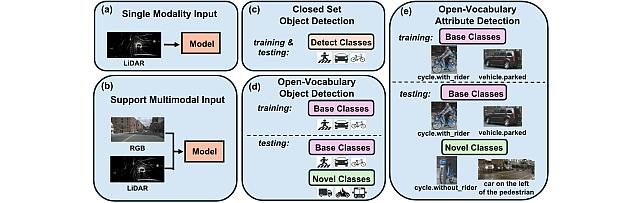

- Xinhao Xiang, Kuan-Chuan Peng, Suhas Lohit, Michael J. Jones, and Jiawei Zhang, "Towards Open-Vocabulary Multimodal 3D Object Detection with Attributes", British Machine Vision Conference (BMVC), November 2025. TR2025-162.

- @inproceedings{Xiang2025nov,

- author = {Xiang, Xinhao and Peng, Kuan-Chuan and Lohit, Suhas and Jones, Michael J. and Zhang, Jiawei},

- title = {{Towards Open-Vocabulary Multimodal 3D Object Detection with Attributes}},

- booktitle = {British Machine Vision Conference (BMVC)},

- year = 2025,

- month = nov,

- url = {https://www.merl.com/publications/TR2025-162}

- }

- Kuan-Chuan Peng, "Joint Training of Image Generator and Detector for Road Defect Detection", IEEE International Conference on Computer Vision (ICCV) Workshops, October 2025. TR2025-149.

- @inproceedings{Peng2025oct,

- author = {Peng, Kuan-Chuan},

- title = {{Joint Training of Image Generator and Detector for Road Defect Detection}},

- booktitle = {IEEE International Conference on Computer Vision (ICCV) Workshops},

- year = 2025,

- month = oct,

- url = {https://www.merl.com/publications/TR2025-149}

- }

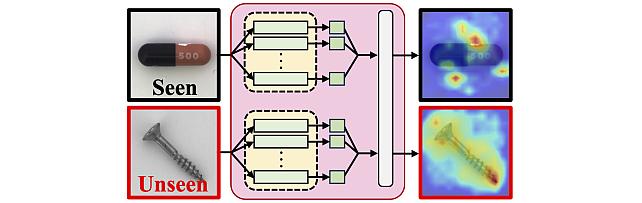

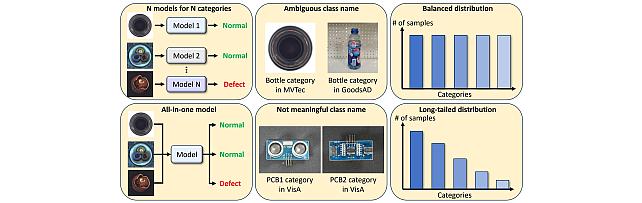

- Chiao-An Yang, Kuan-Chuan Peng, and Raymond Yeh, "Toward Long-Tailed Online Anomaly Detection through Class-Agnostic Concepts", IEEE International Conference on Computer Vision (ICCV), October 2025. TR2025-124.

- @inproceedings{Yang2025oct,

- author = {Yang, Chiao-An and Peng, Kuan-Chuan and Yeh, Raymond},

- title = {{Toward Long-Tailed Online Anomaly Detection through Class-Agnostic Concepts}},

- booktitle = {IEEE International Conference on Computer Vision (ICCV)},

- year = 2025,

- month = oct,

- url = {https://www.merl.com/publications/TR2025-124}

- }

- Deepti Hegde, Suhas Lohit, Kuan-Chuan Peng, Michael J. Jones, and Vishal M. Patel, "Multimodal 3D Object Detection on Unseen Domains", IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshop, June 2025, pp. 2499-2509. TR2025-078.

- @inproceedings{Hegde2025jun,

- author = {Hegde, Deepti and Lohit, Suhas and Peng, Kuan-Chuan and Jones, Michael J. and Patel, Vishal M.},

- title = {{Multimodal 3D Object Detection on Unseen Domains}},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshop},

- year = 2025,

- pages = {2499--2509},

- month = jun,

- url = {https://www.merl.com/publications/TR2025-078}

- }


Other Publications

- Prithviraj Dhar, Rajat Vikram Singh, Kuan-Chuan Peng, Ziyan Wu, and Rama Chellappa, "Learning without Memorizing", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2019.

- @Inproceedings{Dhar_CVPR19,

- author = {Dhar, Prithviraj and Singh, Rajat Vikram and Peng, Kuan-Chuan and Wu, Ziyan and Chellappa, Rama},

- title = {Learning without Memorizing},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

- year = 2019

- }

- Kunpeng Li, Ziyan Wu, Kuan-Chuan Peng, Jan Ernst, and Yun Fu, "Guided Attention Inference Network", IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), 2019.

- @Article{Li_TPAMI19,

- author = {Li, Kunpeng and Wu, Ziyan and Peng, Kuan-Chuan and Ernst, Jan and Fu, Yun},

- title = {Guided Attention Inference Network},

- journal = {IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI)},

- year = 2019,

- publisher = {IEEE}

- }

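- Lezi Wang, Ziyan Wu, Srikrishna Karanam, Kuan-Chuan Peng, Rajat Vikram Singh, Bo Liu, and Dimitris N. Metaxas, "Sharpen Focus: Learning with Attention Separability and Consistency", IEEE International Conference on Computer Vision (ICCV), 2019.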

- @Inproceedings{Wang_ICCV19,

- author = {Wang, Lezi and Wu, Ziyan and Karanam, Srikrishna and Peng, Kuan-Chuan and Singh, Rajat Vikram and Liu, Bo and Metaxas, Dimitris N.},

- title = {Sharpen Focus: Learning with Attention Separability and Consistency},

- booktitle = {IEEE International Conference on Computer Vision (ICCV)},

- year = 2019

- }

- Yunye Gong, Srikrishna Karanam, Ziyan Wu, Kuan-Chuan Peng, Jan Ernst, and Peter C. Doerschuk, "Learning Compositional Visual Concepts with Mutual Consistency", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018.

- @Inproceedings{Gong_CVPR18,

- author = {Gong, Yunye and Karanam, Srikrishna and Wu, Ziyan and Peng, Kuan-Chuan and Ernst, Jan and Doerschuk, Peter C.},

- title = {Learning Compositional Visual Concepts with Mutual Consistency},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

- year = 2018

- }

- Kunpeng Li, Ziyan Wu, Kuan-Chuan Peng, Jan Ernst, and Yun Fu, "Tell Me Where to Look: Guided Attention Inference Network", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018.

- @Inproceedings{Li_CVPR18,

- author = {Li, Kunpeng and Wu, Ziyan and Peng, Kuan-Chuan and Ernst, Jan and Fu, Yun},

- title = {Tell Me Where to Look: Guided Attention Inference Network},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

- year = 2018

- }

- Kuan-Chuan Peng, Ziyan Wu, and Jan Ernst, "Zero-Shot Deep Domain Adaptation", European Conference on Computer Vision (ECCV), 2018.

- @Inproceedings{Peng_ECCV18,

- author = {Peng, Kuan-Chuan and Wu, Ziyan and Ernst, Jan},

- title = {Zero-Shot Deep Domain Adaptation},

- booktitle = {European Conference on Computer Vision (ECCV)},

- year = 2018

- }

- Kuan-Chuan Peng, Tsuhan Chen, Amir Sadovnik, and Andrew C. Gallagher, "Where Do Emotions Come from? Predicting the Emotion Stimuli Map", IEEE International Conference on Image Processing (ICIP), 2016.

- @Inproceedings{Peng_ICIP16,

- author = {Peng, Kuan-Chuan and Chen, Tsuhan and Sadovnik, Amir and Gallagher, Andrew C.},

- title = {Where Do Emotions Come from? Predicting the Emotion Stimuli Map},

- booktitle = {IEEE International Conference on Image Processing (ICIP)},

- year = 2016

- }

- Kuan-Chuan Peng and Tsuhan Chen, "Toward Correlating and Solving Abstract Tasks Using Convolutional Neural Networks", IEEE Winter Conference on Applications of Computer Vision (WACV), 2016.

- @Inproceedings{Peng_WACV16,

- author = {Peng, Kuan-Chuan and Chen, Tsuhan},

- title = {Toward Correlating and Solving Abstract Tasks Using Convolutional Neural Networks},

- booktitle = {IEEE Winter Conference on Applications of Computer Vision (WACV)},

- year = 2016

- }

- Kuan-Chuan Peng, Tsuhan Chen, Amir Sadovnik, and Andrew C. Gallagher, "A Mixed Bag of Emotions: Model, Predict, and Transfer Emotion Distributions", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2015.

- @Inproceedings{Peng_CVPR15,

- author = {Peng, Kuan-Chuan and Chen, Tsuhan and Sadovnik, Amir and Gallagher, Andrew C.},

- title = {A Mixed Bag of Emotions: Model, Predict, and Transfer Emotion Distributions},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

- year = 2015

- }

- Kuan-Chuan Peng and Tsuhan Chen, "Cross-layer Features in Convolutional Neural Networks for Generic Classification Tasks", IEEE International Conference on Image Processing (ICIP), 2015.

- @Inproceedings{Peng_ICIP15,

- author = {Peng, Kuan-Chuan and Chen, Tsuhan},

- title = {Cross-layer Features in Convolutional Neural Networks for Generic Classification Tasks},

- booktitle = {IEEE International Conference on Image Processing (ICIP)},

- year = 2015

- }

- Kuan-Chuan Peng and Tsuhan Chen, "A Framework of Extracting Multi-scale Features Using Multiple Convolutional Neural Network", IEEE International Conference on Multimedia and Expo (ICME), 2015.

- @Inproceedings{Peng_ICME15,

- author = {Peng, Kuan-Chuan and Chen, Tsuhan},

- title = {A Framework of Extracting Multi-scale Features Using Multiple Convolutional Neural Network},

- booktitle = {IEEE International Conference on Multimedia and Expo (ICME)},

- year = 2015

- }

- Kuan-Chuan Peng, Kolbeinn Karlsson, Tsuhan Chen, Dongqing Zhang, and Hong Heather Yu, "A Framework of Changing Image Emotion Using Emotion Prediction", IEEE International Conference on Image Processing (ICIP), 2014.

- @Inproceedings{Peng_ICIP14,

- author = {Peng, Kuan-Chuan and Karlsson, Kolbeinn and Chen, Tsuhan and Zhang, Dongqing and Yu, Hong Heather},

- title = {A Framework of Changing Image Emotion Using Emotion Prediction},

- booktitle = {IEEE International Conference on Image Processing (ICIP)},

- year = 2014

- }

- Kuan-Chuan Peng and Tsuhan Chen, "Incorporating Cloud Distribution in Sky Representation", IEEE International Conference on Computer Vision (ICCV), 2013.

- @Inproceedings{Peng_ICCV13,

- author = {Peng, Kuan-Chuan and Chen, Tsuhan},

- title = {Incorporating Cloud Distribution in Sky Representation},

- booktitle = {IEEE International Conference on Computer Vision (ICCV)},

- year = 2013

- }


Software & Data Downloads


Videos


MERL Issued Patents


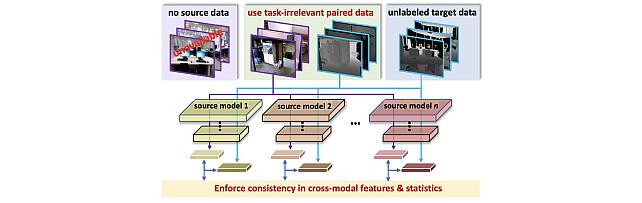

Title: "System and Method for Cross-Modal Knowledge Transfer Without Task-Relevant Source Data"

Inventors: Lohit, Suhas; Ahmed, Sk Miraj; Peng, Kuan-Chuan; Jones, Michael J.

Patent No.: 12,511,549

Issue Date: Dec 30, 2025

Title: "Method and System for Zero-Shot Cross Domain Video Anomaly Detection"

Inventors: Peng, Kuan-Chuan; Aich, Abhishek

Patent No.: 12,315,242

Issue Date: May 27, 2025

Title: "Contactless Elevator Service for an Elevator Based on Augmented Datasets"

Inventors: Sahinoglu, Zafer; Peng, Kuan-Chuan; Sullivan, Alan; Yerazunis, William S.

Patent No.: 12,071,323

Issue Date: Aug 27, 2024
