Artificial Intelligence

Making machines smarter for improved safety, efficiency and comfort.

Our AI research spans computer vision, speech and audio processing, and data analytics. Key research themes include improved perception based on machine learning techniques, learning control policies through model-based reinforcement learning, and cognition and reasoning based on learned semantic representations. We apply our work to a broad range of automotive and robotics applications, as well as building and home systems.


Researchers

- Jonathan Le Roux
- Toshiaki Koike-Akino
- Ye Wang
- Gordon Wichern
- Anoop Cherian
- Tim K. Marks
- Chiori Hori
- Michael J. Jones
- Kieran Parsons
- François Germain
- Daniel N. Nikovski
- Devesh K. Jha
- Jing Liu
- Suhas Lohit
- Matthew Brand
- Philip V. Orlik
- Pu (Perry) Wang
- Moitreya Chatterjee
- Kuan-Chuan Peng
- Diego Romeres
- Petros T. Boufounos
- Siddarth Jain
- Hassan Mansour
- Yoshiki Masuyama
- William S. Yerazunis
- Radu Corcodel
- Pedro Miraldo
- Arvind Raghunathan
- Jianlin Guo
- Hongbo Sun
- Yebin Wang
- Ankush Chakrabarty
- Chungwei Lin
- Yanting Ma
- Bingnan Wang
- Ryo Aihara
- Stefano Di Cairano
- Saviz Mowlavi
- Anthony Vetro
- Jinyun Zhang
- Vedang M. Deshpande
- Christopher R. Laughman
- Dehong Liu
- Naoko Sawada
- Alexander Schperberg
- Wataru Tsujita
- Abraham P. Vinod
- Kenji Inomata
- Na Li

-

Awards

-

AWARD: MERL Wins Awards at NeurIPS LLM Privacy Challenge

Date: December 15, 2024

Awarded to: Jing Liu, Ye Wang, Toshiaki Koike-Akino, Tsunato Nakai, Kento Oonishi, Takuya Higashi

MERL Contacts: Toshiaki Koike-Akino; Jing Liu; Ye Wang

Research Areas: Artificial Intelligence, Machine Learning, Information Security

Brief: The Mitsubishi Electric Privacy Enhancing Technologies (MEL-PETs) team, a collaboration of MERL and Mitsubishi Electric researchers, won awards at the NeurIPS 2024 Large Language Model (LLM) Privacy Challenge. In the Blue Team track of the challenge, we won the 3rd Place Award, and in the Red Team track, we won the Special Award for Practical Attack.

-

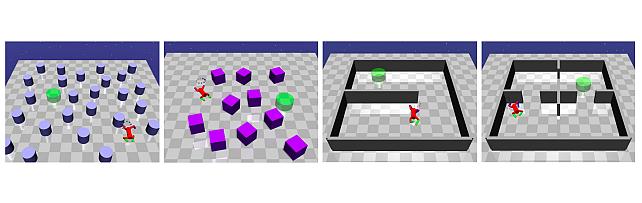

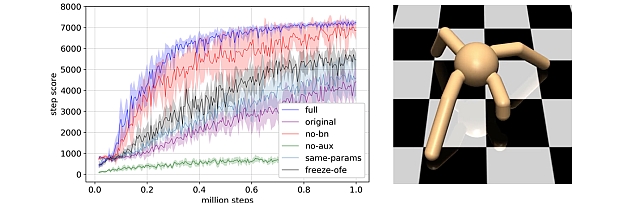

AWARD: University of Padua and MERL team wins the AI Olympics with RealAIGym competition at IROS24

Date: October 17, 2024

Awarded to: Niccolò Turcato, Alberto Dalla Libera, Giulio Giacomuzzo, Ruggero Carli, Diego Romeres

MERL Contact: Diego Romeres

Research Areas: Artificial Intelligence, Dynamical Systems, Machine Learning, Robotics

Brief: The team composed of the control group at the University of Padua and MERL's Optimization and Robotic team ranked 1st out of the 4 finalist teams that reached the 2nd AI Olympics with RealAIGym competition at IROS 24, which focused on control of under-actuated robots. The team consisted of Niccolò Turcato, Alberto Dalla Libera, Giulio Giacomuzzo, Ruggero Carli and Diego Romeres. The competition was organized by the German Research Center for Artificial Intelligence (DFKI), Technical University of Darmstadt and Chalmers University of Technology.

The competition and award ceremony were hosted by the IEEE International Conference on Intelligent Robots and Systems (IROS) on October 17, 2024 in Abu Dhabi, UAE. Diego Romeres presented the team's method, based on a model-based reinforcement learning algorithm called MC-PILCO.


-

AWARD: MERL team wins the Listener Acoustic Personalisation (LAP) 2024 Challenge

Date: August 29, 2024

Awarded to: Yoshiki Masuyama, Gordon Wichern, Francois G. Germain, Christopher Ick, and Jonathan Le Roux

MERL Contacts: François Germain; Jonathan Le Roux; Gordon Wichern; Yoshiki Masuyama

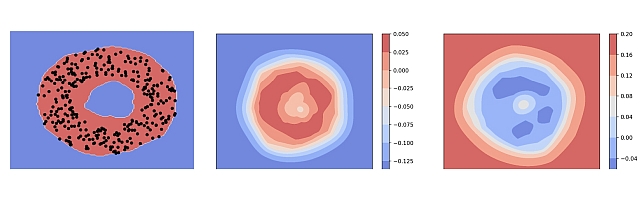

Research Areas: Artificial Intelligence, Machine Learning, Speech & Audio

Brief: MERL's Speech & Audio team ranked 1st out of 7 teams in Task 2 of the 1st SONICOM Listener Acoustic Personalisation (LAP) Challenge, which focused on "Spatial upsampling for obtaining a high-spatial-resolution HRTF from a very low number of directions". The team was led by Yoshiki Masuyama, and also included Gordon Wichern, Francois Germain, MERL intern Christopher Ick, and Jonathan Le Roux.

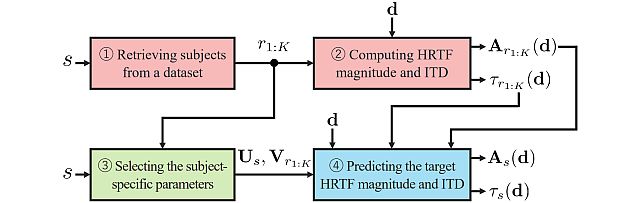

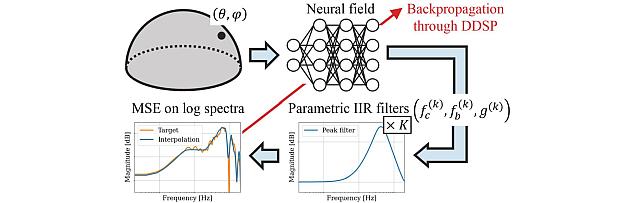

The LAP Challenge workshop and award ceremony were hosted by the 32nd European Signal Processing Conference (EUSIPCO 24) on August 29, 2024 in Lyon, France. Yoshiki Masuyama presented the team's method, "Retrieval-Augmented Neural Field for HRTF Upsampling and Personalization", and received the award from Prof. Michele Geronazzo (University of Padova, IT, and Imperial College London, UK), Chair of the Challenge's Organizing Committee.

The LAP challenge aims to explore challenges in the field of personalized spatial audio, with the first edition focusing on the spatial upsampling and interpolation of head-related transfer functions (HRTFs). HRTFs with dense spatial grids are required for immersive audio experiences, but their recording is time-consuming. Although HRTF spatial upsampling has recently shown remarkable progress with approaches involving neural fields, HRTF estimation accuracy remains limited when upsampling from only a few measured directions, e.g., 3 or 5 measurements. The MERL team tackled this problem by proposing a retrieval-augmented neural field (RANF). RANF retrieves a subject whose HRTFs are close to those of the target subject at the measured directions from a library of subjects. The HRTF of the retrieved subject at the target direction is fed into the neural field in addition to the desired sound source direction. The team also developed a neural network architecture that can handle an arbitrary number of retrieved subjects, inspired by a multi-channel processing technique called transform-average-concatenate.
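The retrieval step described above can be illustrated with a minimal sketch. It assumes HRTFs are stored as nested lists of magnitudes indexed by direction and frequency bin, and that closeness is measured by the summed squared difference at the measured directions. The source does not state the distance metric, and the function name `retrieve_closest_subjects` and the data layout are hypothetical, not taken from the MERL implementation.

```python
from typing import List, Sequence


def retrieve_closest_subjects(
    target: Sequence[Sequence[float]],
    library: Sequence[Sequence[Sequence[float]]],
    k: int = 1,
) -> List[int]:
    """Return indices of the k library subjects closest to the target.

    target[d][f]     : target HRTF magnitude at measured direction d, bin f
    library[s][d][f] : the same quantity for library subject s
    The squared-error distance is an assumption made for illustration.
    """

    def distance(subject: Sequence[Sequence[float]]) -> float:
        # Sum of squared differences over all measured directions and bins.
        return sum(
            (a - b) ** 2
            for row_s, row_t in zip(subject, target)
            for a, b in zip(row_s, row_t)
        )

    # Rank library subjects from closest to farthest, then keep the top k.
    ranked = sorted(range(len(library)), key=lambda s: distance(library[s]))
    return ranked[:k]
```

In a full system, the HRTFs of the returned subjects at the target direction would then be passed to the neural field alongside the direction itself; that part is not sketched here.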



-

News & Events

-

NEWS: Toshiaki Koike-Akino to give a tutorial talk at ISIT 2025 Quantum Hackathon

Date: June 22, 2025

Where: IEEE International Symposium on Information Theory (ISIT)

MERL Contact: Toshiaki Koike-Akino

Research Areas: Artificial Intelligence, Communications, Data Analytics, Machine Learning, Optimization, Signal Processing, Human-Computer Interaction, Information Security

Brief: Toshiaki Koike-Akino is invited to present a tutorial talk at the IEEE ISIT 2025 Quantum Hackathon, to be held in Ann Arbor, Michigan, USA. The talk, entitled "Emerging Quantum AI Technology", will discuss the recent trends, challenges, and applications of quantum artificial intelligence (QAI) technologies.

The ISIT 2025 Quantum Hackathon invites participants to explore the intersection of quantum computing and information theory. Participants will work with quantum simulators, available quantum hardware, and state-of-the-art development kits to create innovative solutions that connect quantum advancements with challenges in communication and signal processing.

The IEEE International Symposium on Information Theory (ISIT) is the flagship conference of the IEEE Information Theory Society. The symposium centers on presentations in all areas of information theory, including source and channel coding, communication theory and systems, cryptography and security, detection and estimation, networks, pattern recognition and learning, statistics, stochastic processes and complexity, and signal processing.


-

NEWS: MERL Papers and Workshops at CVPR 2025

Date: June 11, 2025 - June 15, 2025

Where: Nashville, TN, USA

MERL Contacts: Matthew Brand; Moitreya Chatterjee; Anoop Cherian; François Germain; Michael J. Jones; Toshiaki Koike-Akino; Jing Liu; Suhas Lohit; Tim K. Marks; Pedro Miraldo; Kuan-Chuan Peng; Naoko Sawada; Pu (Perry) Wang; Ye Wang

Research Areas: Artificial Intelligence, Computer Vision, Machine Learning, Signal Processing, Speech & Audio

Brief: MERL researchers are presenting 2 conference papers, co-organizing 2 workshops, and presenting 7 workshop papers at the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2025, which will be held in Nashville, TN, USA from June 11-15, 2025. CVPR is one of the most prestigious and competitive international conferences in the area of computer vision. Details of MERL contributions are provided below:

Main Conference Papers:

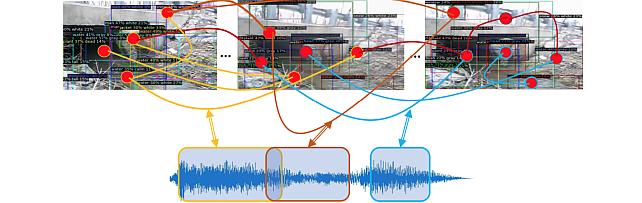

1. "UWAV: Uncertainty-weighted Weakly-supervised Audio-Visual Video Parsing" by Y.H. Lai, J. Ebbers, Y. F. Wang, F. Germain, M. J. Jones, M. Chatterjee

This work deals with the task of weakly‑supervised Audio-Visual Video Parsing (AVVP) and proposes a novel, uncertainty-aware algorithm called UWAV towards that end. UWAV works by producing more reliable segment‑level pseudo‑labels while explicitly weighting each label by its prediction uncertainty. This uncertainty‑aware training, combined with a feature‑mixup regularization scheme, promotes inter‑segment consistency in the pseudo-labels. As a result, UWAV achieves state‑of‑the‑art performance on two AVVP datasets across multiple metrics, demonstrating both effectiveness and strong generalizability.

Paper: https://www.merl.com/publications/TR2025-072

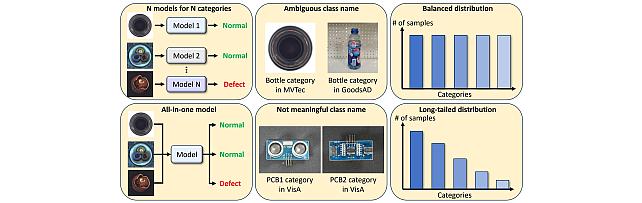

2. "TailedCore: Few-Shot Sampling for Unsupervised Long-Tail Noisy Anomaly Detection" by Y. G. Jung, J. Park, J. Yoon, K.-C. Peng, W. Kim, A. B. J. Teoh, and O. Camps.

This work tackles unsupervised anomaly detection in complex scenarios where normal data is noisy and has an unknown, imbalanced class distribution. Existing models face a trade-off between robustness to noise and performance on rare (tail) classes. To address this, the authors propose TailSampler, which estimates class sizes from embedding similarities to isolate tail samples. Using TailSampler, they develop TailedCore, a memory-based model that effectively captures tail class features while remaining noise-robust, outperforming state-of-the-art methods in extensive evaluations.

Paper: https://www.merl.com/publications/TR2025-077
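The idea of estimating class sizes from embedding similarities, as mentioned for TailSampler above, can be illustrated with a deliberately naive sketch. This is not the TailSampler algorithm: it simply counts, for each sample, how many embeddings exceed a cosine-similarity threshold, and flags samples with few such neighbors as tail candidates. The threshold, the function names, and the neighbor-count heuristic are all assumptions made for illustration, and this naive version cannot tell a rare normal class apart from noise.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def estimate_class_sizes(
    embeddings: Sequence[Sequence[float]], threshold: float = 0.9
) -> List[int]:
    """For each sample, count samples (itself included) above the threshold."""
    return [
        sum(1 for v in embeddings if cosine(u, v) >= threshold)
        for u in embeddings
    ]


def tail_candidates(
    embeddings: Sequence[Sequence[float]], max_size: int, threshold: float = 0.9
) -> List[int]:
    """Indices of samples whose estimated class size is at most max_size."""
    sizes = estimate_class_sizes(embeddings, threshold)
    return [i for i, size in enumerate(sizes) if size <= max_size]
```

The pairwise loop is quadratic in the number of samples, which is acceptable for a toy example but would need an approximate nearest-neighbor index at realistic scale.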

MERL Co-Organized Workshops:

1. Multimodal Algorithmic Reasoning (MAR) Workshop, organized by A. Cherian, K.-C. Peng, S. Lohit, H. Zhou, K. Smith, L. Xue, T. K. Marks, and J. Tenenbaum.

Workshop link: https://marworkshop.github.io/cvpr25/

2. The 6th Workshop on Fair, Data-Efficient, and Trusted Computer Vision, organized by N. Ratha, S. Karanam, Z. Wu, M. Vatsa, R. Singh, K.-C. Peng, M. Merler, and K. Varshney.

Workshop link: https://fadetrcv.github.io/2025/

Workshop Papers:

1. "FreBIS: Frequency-Based Stratification for Neural Implicit Surface Representations" by N. Sawada, P. Miraldo, S. Lohit, T.K. Marks, and M. Chatterjee (Oral)

With their ability to model object surfaces in a scene as a continuous function, neural implicit surface reconstruction methods have made remarkable strides recently, especially over classical 3D surface reconstruction methods, such as those that use voxels or point clouds. Towards this end, we propose FreBIS - a neural implicit‑surface framework that avoids overloading a single encoder with every surface detail. It divides a scene into several frequency bands and assigns a dedicated encoder (or group of encoders) to each band, then enforces complementary feature learning through a redundancy‑aware weighting module. Swapping this frequency‑stratified stack into an off‑the‑shelf reconstruction pipeline markedly boosts 3D surface accuracy and view‑consistent rendering on the challenging BlendedMVS dataset.

Paper: https://www.merl.com/publications/TR2025-074

2. "Multimodal 3D Object Detection on Unseen Domains" by D. Hegde, S. Lohit, K.-C. Peng, M. J. Jones, and V. M. Patel.

LiDAR-based object detection models often suffer performance drops when deployed in unseen environments due to biases in data properties like point density and object size. Unlike domain adaptation methods that rely on access to target data, this work tackles the more realistic setting of domain generalization without test-time samples. We propose CLIX3D, a multimodal framework that uses both LiDAR and image data along with supervised contrastive learning to align same-class features across domains and improve robustness. CLIX3D achieves state-of-the-art performance across various domain shifts in 3D object detection.

Paper: https://www.merl.com/publications/TR2025-078

3. "Improving Open-World Object Localization by Discovering Background" by A. Singh, M. J. Jones, K.-C. Peng, M. Chatterjee, A. Cherian, and E. Learned-Miller.

This work tackles open-world object localization, aiming to detect both seen and unseen object classes using limited labeled training data. While prior methods focus on object characterization, this approach introduces background information to improve objectness learning. The proposed framework identifies low-information, non-discriminative image regions as background and trains the model to avoid generating object proposals there. Experiments on standard benchmarks show that this method significantly outperforms previous state-of-the-art approaches.

Paper: https://www.merl.com/publications/TR2025-058

4. "PF3Det: A Prompted Foundation Feature Assisted Visual LiDAR 3D Detector" by K. Li, T. Zhang, K.-C. Peng, and G. Wang.

This work addresses challenges in 3D object detection for autonomous driving by improving the fusion of LiDAR and camera data, which is often hindered by domain gaps and limited labeled data. Leveraging advances in foundation models and prompt engineering, the authors propose PF3Det, a multi-modal detector that uses foundation model encoders and soft prompts to enhance feature fusion. PF3Det achieves strong performance even with limited training data. It sets new state-of-the-art results on the nuScenes dataset, improving NDS by 1.19% and mAP by 2.42%.

Paper: https://www.merl.com/publications/TR2025-076

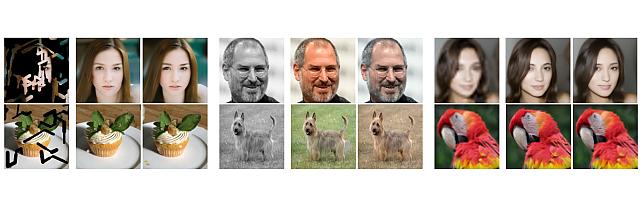

5. "Noise Consistency Regularization for Improved Subject-Driven Image Synthesis" by Y. Ni, S. Wen, P. Konius, and A. Cherian

Fine-tuning Stable Diffusion enables subject-driven image synthesis by adapting the model to generate images containing specific subjects. However, existing fine-tuning methods suffer from two key issues: underfitting, where the model fails to reliably capture subject identity, and overfitting, where it memorizes the subject image and reduces background diversity. To address these challenges, two auxiliary consistency losses are proposed for diffusion fine-tuning. First, a prior consistency regularization loss ensures that the predicted diffusion noise for prior (non-subject) images remains consistent with that of the pretrained model, improving fidelity. Second, a subject consistency regularization loss enhances the fine-tuned model’s robustness to multiplicative noise modulated latent code, helping to preserve subject identity while improving diversity. Our experimental results demonstrate the effectiveness of our approach in terms of image diversity, outperforming DreamBooth in terms of CLIP scores, background variation, and overall visual quality.

Paper: https://www.merl.com/publications/TR2025-073

6. "LatentLLM: Attention-Aware Joint Tensor Compression" by T. Koike-Akino, X. Chen, J. Liu, Y. Wang, P. Wang, M. Brand

We propose a new framework to convert large foundation models, such as large language models (LLMs) and large multi-modal models (LMMs), into a reduced-dimension latent structure. Our method uses a global attention-aware joint tensor decomposition to significantly improve the model efficiency. We show the benefit on several benchmarks, including multi-modal reasoning tasks.

Paper: https://www.merl.com/publications/TR2025-075

7. "TuneComp: Joint Fine-Tuning and Compression for Large Foundation Models" by X. Chen, J. Liu, Y. Wang, M. Brand, P. Wang, and T. Koike-Akino

To reduce model size during post-training, compression methods, including knowledge distillation, low-rank approximation, and pruning, are often applied after fine-tuning the model. However, sequential fine-tuning and compression sacrifices performance, while creating a larger than necessary model as an intermediate step. In this work, we aim to reduce this gap by directly constructing a smaller model while guided by the downstream task. We propose to jointly fine-tune and compress the model by gradually distilling it to a pruned low-rank structure. Experiments demonstrate that joint fine-tuning and compression significantly outperforms other sequential compression methods.

Paper: https://www.merl.com/publications/TR2025-079
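To make the notion of gradual compression in the TuneComp summary a little more concrete, here is a minimal sketch of one ingredient only: magnitude pruning applied on a ramped sparsity schedule. It does not implement TuneComp, distillation, or low-rank factorization. The linear schedule, the flat weight list, and the function names `sparsity_at` and `magnitude_prune` are hypothetical choices for illustration.

```python
from typing import List


def sparsity_at(step: int, total_steps: int, final_sparsity: float) -> float:
    """Linear ramp from 0 to final_sparsity over total_steps (assumed schedule)."""
    if total_steps <= 0:
        return final_sparsity
    frac = min(max(step / total_steps, 0.0), 1.0)
    return final_sparsity * frac


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Return a copy with the smallest-magnitude fraction `sparsity` set to zero."""
    n_prune = int(len(weights) * sparsity)
    if n_prune == 0:
        return list(weights)
    # Indices in ascending order of magnitude; the first n_prune are zeroed.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]
```

In a joint scheme, a pruning call like this would be interleaved with fine-tuning updates so the remaining weights can adapt as sparsity grows, rather than being applied once after training has finished.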



-

Research Highlights

-

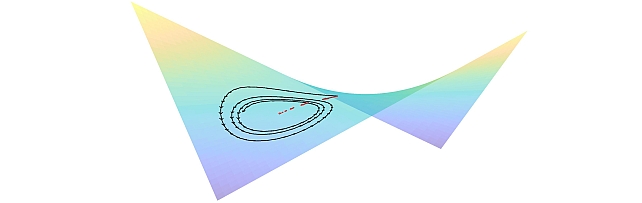

PS-NeuS: A Probability-guided Sampler for Neural Implicit Surface Rendering -

Quantum AI Technology -

TI2V-Zero: Zero-Shot Image Conditioning for Text-to-Video Diffusion Models -

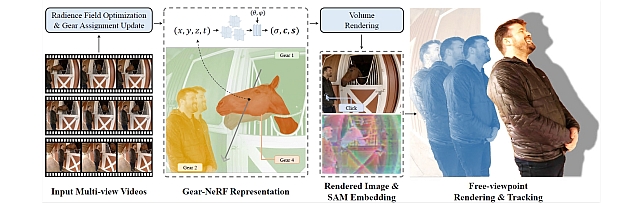

Gear-NeRF: Free-Viewpoint Rendering and Tracking with Motion-Aware Spatio-Temporal Sampling -

Steered Diffusion -

Sustainable AI -

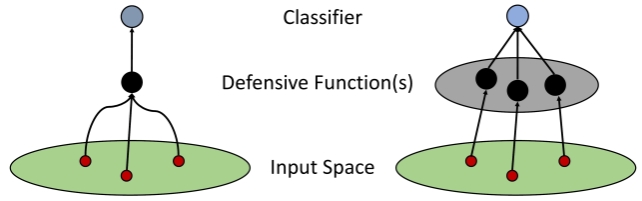

Robust Machine Learning -

mmWave Beam-SNR Fingerprinting (mmBSF) -

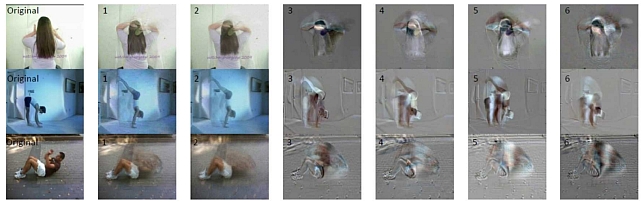

Video Anomaly Detection -

Biosignal Processing for Human-Machine Interaction -

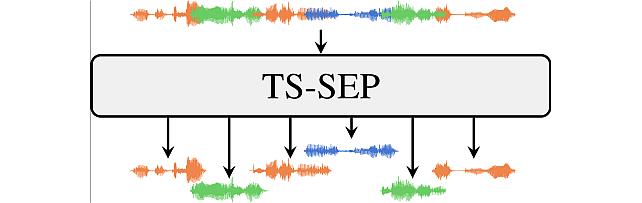

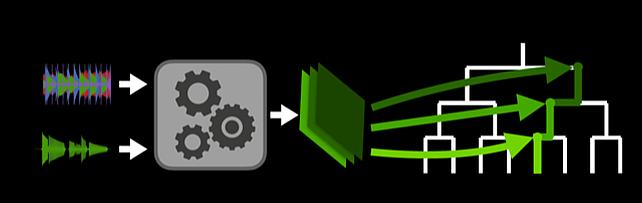

Task-aware Unified Source Separation - Audio Examples

-

-

Internships

-

EA0076: Internship - Machine Learning for Electric Motor Design

MERL is seeking a motivated and qualified intern to conduct research on machine learning based electric motor design and optimization. Ideal candidates should be Ph.D. students with a solid background and publication record in electric machine design, optimization, and machine learning. Hands-on experience with the implementation of optimization algorithms, machine learning and deep learning methods is required. Strong programming skills using Python/PyTorch are expected. Knowledge of and experience with electric machine principles, design, and finite-element analysis are highly desirable. The start date for this internship is flexible and the duration is about 3 months.

-

OR0115: Internship - Whole-body dexterous manipulation

MERL is looking for a highly motivated individual to work on whole-body dexterous manipulation. The research will develop robot motor skills for whole-body, dexterous manipulation using optimization and/or learning algorithms. The ideal candidate should have experience in one or more of the following topics: Optimization Algorithms for contact systems, Reinforcement Learning, control through contacts, and Behavioral cloning. Senior PhD students in robotics and engineering with a focus on contact-rich manipulation are encouraged to apply. Prior experience working with physical robotic systems (and vision and tactile sensors) is required, as results need to be implemented on physical hardware. Good coding skills in Python ML libraries such as PyTorch and/or relevant optimization packages are required. A successful internship will result in submission of results to a peer-reviewed robotics journal in collaboration with MERL researchers. The expected duration of the internship is 4-5 months, with a start date in May/June 2025. This internship is preferred to be onsite at MERL.

Required Specific Experience

- Prior experience working with physical hardware systems is required.

- Prior publication experience in robotics venues like ICRA, RSS, and CoRL.

-

ST0105: Internship - Surrogate Modeling for Sound Propagation

MERL is seeking a motivated and qualified individual to work on fast surrogate models for sound emission and propagation from complex vibrating structures, with applications in HVAC noise reduction. The ideal candidate will be a PhD student in engineering or related fields with a solid background in frequency-domain acoustic modeling and numerical techniques for partial differential equations (PDEs). Preferred skills include knowledge of the boundary element method (BEM), data-driven modeling, and physics-informed machine learning. Publication of the results obtained during the internship is expected. The duration is expected to be at least 3 months with a flexible start date.


-


Recent Publications

- Ruhan Wang, Ye Wang, Jing Liu, and Toshiaki Koike-Akino, "Quantum Diffusion Models for Few-Shot Learning", ICAD, June 2025. TR2025-095.

- @inproceedings{Wang2025jun2,

- author = {Wang, Ruhan and Wang, Ye and Liu, Jing and Koike-Akino, Toshiaki},

- title = {{Quantum Diffusion Models for Few-Shot Learning}},

- booktitle = {ICAD},

- year = 2025,

- month = jun,

- url = {https://www.merl.com/publications/TR2025-095}

- }

- Yoshiki Masuyama, "Single- and Multi-Channel Speech Enhancement and Separation for Far-Field Conversation Recognition," Tech. Rep. TR2025-097, Jelinek Summer Workshop on Speech and Language Technology (JSALT), June 2025.

- @techreport{Masuyama2025jun,

- author = {Masuyama, Yoshiki},

- title = {{Single- and Multi-Channel Speech Enhancement and Separation for Far-Field Conversation Recognition}},

- institution = {Jelinek Summer Workshop on Speech and Language Technology (JSALT)},

- year = 2025,

- month = jun,

- url = {https://www.merl.com/publications/TR2025-097}

- }

- Xiangyu Chen, Jing Liu, Ye Wang, Matthew Brand, Pu Wang, and Toshiaki Koike-Akino, "TuneComp: Joint Fine-Tuning and Compression for Large Foundation Models", IEEE Conference on Computer Vision and Pattern Recognition (CVPR) workshop on Efficient and On-Device Generation, June 2025. TR2025-079.

- @inproceedings{Chen2025jun,

- author = {Chen, Xiangyu and Liu, Jing and Wang, Ye and Brand, Matthew and Wang, Pu and Koike-Akino, Toshiaki},

- title = {{TuneComp: Joint Fine-Tuning and Compression for Large Foundation Models}},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR) workshop on Efficient and On-Device Generation},

- year = 2025,

- month = jun,

- url = {https://www.merl.com/publications/TR2025-079}

- }

- Deepti Hegde, Suhas Lohit, Kuan-Chuan Peng, Michael J. Jones, and Vishal M. Patel, "Multimodal 3D Object Detection on Unseen Domains", IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshop, June 2025. TR2025-078.

- @inproceedings{Hegde2025jun,

- author = {Hegde, Deepti and Lohit, Suhas and Peng, Kuan-Chuan and Jones, Michael J. and Patel, Vishal M.},

- title = {{Multimodal 3D Object Detection on Unseen Domains}},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshop},

- year = 2025,

- month = jun,

- url = {https://www.merl.com/publications/TR2025-078}

- }

- Yoon G. Jung, Jaewoo Park, Jaeho Yoon, Kuan-Chuan Peng, Wonchul Kim, Andrew B. J. Teoh, and Octavia Camps, "TailedCore: Few-Shot Sampling for Unsupervised Long-Tail Noisy Anomaly Detection", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2025. TR2025-077.

- @inproceedings{Jung2025jun,

- author = {Jung, Yoon G. and Park, Jaewoo and Yoon, Jaeho and Peng, Kuan-Chuan and Kim, Wonchul and Teoh, Andrew B. J. and Camps, Octavia},

- title = {{TailedCore: Few-Shot Sampling for Unsupervised Long-Tail Noisy Anomaly Detection}},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

- year = 2025,

- month = jun,

- url = {https://www.merl.com/publications/TR2025-077}

- }

- Toshiaki Koike-Akino, Xiangyu Chen, Jing Liu, Ye Wang, Pu Wang, and Matthew Brand, "LatentLLM: Attention-Aware Joint Tensor Compression", IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshop, June 2025. TR2025-075.

- @inproceedings{Koike-Akino2025jun,

- author = {Koike-Akino, Toshiaki and Chen, Xiangyu and Liu, Jing and Wang, Ye and Wang, Pu and Brand, Matthew},

- title = {{LatentLLM: Attention-Aware Joint Tensor Compression}},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshop},

- year = 2025,

- month = jun,

- url = {https://www.merl.com/publications/TR2025-075}

- }

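- Yung-Hsuan Lai, Janek Ebbers, Yu-Chiang Frank Wang, François G. Germain, Michael J. Jones, and Moitreya Chatterjee, "UWAV: Uncertainty-weighted Weakly-supervised Audio-Visual Video Parsing", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2025. TR2025-072.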

- @inproceedings{Lai2025jun,

- author = {Lai, Yung-Hsuan and Ebbers, Janek and Wang, Yu-Chiang Frank and Germain, François G and Jones, Michael J. and Chatterjee, Moitreya},

- title = {{UWAV: Uncertainty-weighted Weakly-supervised Audio-Visual Video Parsing}},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

- year = 2025,

- month = jun,

- url = {https://www.merl.com/publications/TR2025-072}

- }

- Kaidong Li, Tianxiao Zhang, Kuan-Chuan Peng, and Guanghui Wang, "PF3Det: A Prompted Foundation Feature Assisted Visual LiDAR 3D Detector", IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshop, June 2025. TR2025-076.

- @inproceedings{Li2025jun,

- author = {Li, Kaidong and Zhang, Tianxiao and Peng, Kuan-Chuan and Wang, Guanghui},

- title = {{PF3Det: A Prompted Foundation Feature Assisted Visual LiDAR 3D Detector}},

- booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshop},

- year = 2025,

- month = jun,

- url = {https://www.merl.com/publications/TR2025-076}

- }


-


Software & Data Downloads

-

MEL-PETs Joint-Context Attack for LLM Privacy Challenge -

MEL-PETs Defense for LLM Privacy Challenge -

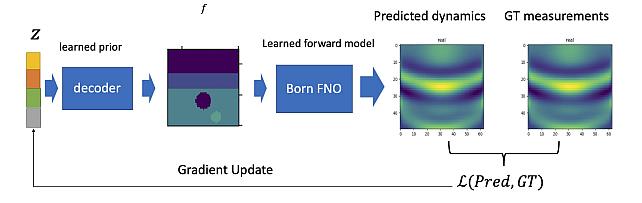

Learned Born Operator for Reflection Tomographic Imaging -

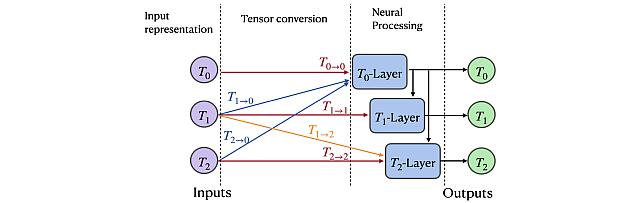

Group Representation Networks -

Retrieval-Augmented Neural Field for HRTF Upsampling and Personalization -

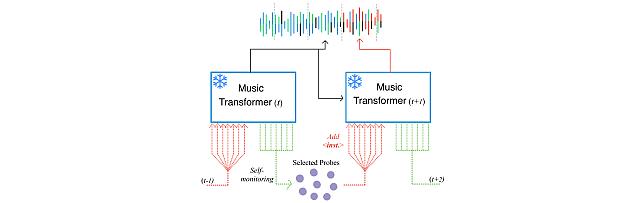

Self-Monitored Inference-Time INtervention for Generative Music Transformers -

Transformer-based model with LOcal-modeling by COnvolution -

Sound Event Bounding Boxes -

Enhanced Reverberation as Supervision -

Gear Extensions of Neural Radiance Fields -

Long-Tailed Anomaly Detection Dataset -

Neural IIR Filter Field for HRTF Upsampling and Personalization -

Target-Speaker SEParation -

Pixel-Grounded Prototypical Part Networks -

Steered Diffusion -

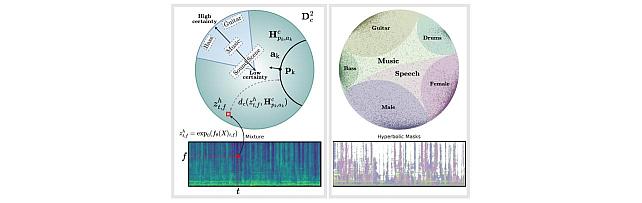

Hyperbolic Audio Source Separation -

Simple Multimodal Algorithmic Reasoning Task Dataset -

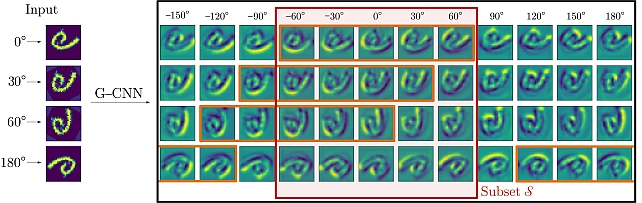

Partial Group Convolutional Neural Networks -

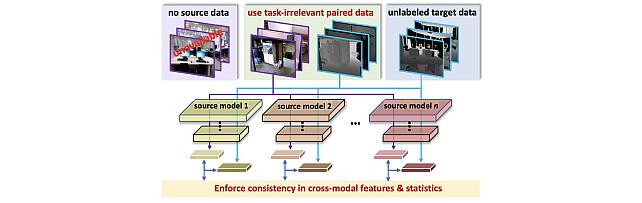

SOurce-free Cross-modal KnowledgE Transfer -

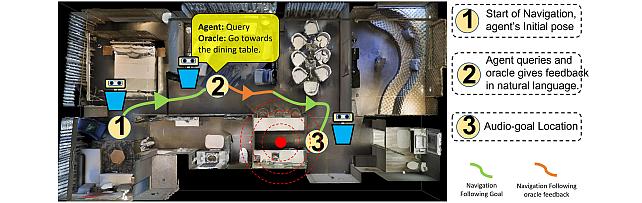

Audio-Visual-Language Embodied Navigation in 3D Environments -

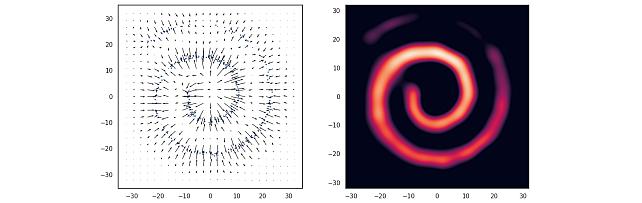

Nonparametric Score Estimators -

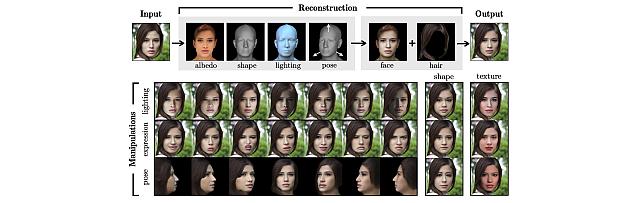

3D MOrphable STyleGAN -

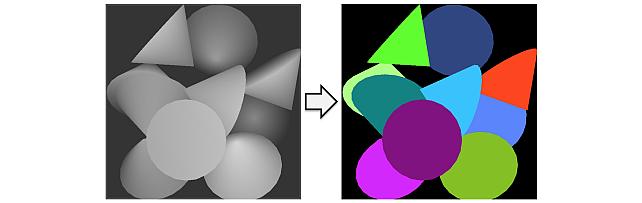

Instance Segmentation GAN -

Audio Visual Scene-Graph Segmentor -

Generalized One-class Discriminative Subspaces -

Goal directed RL with Safety Constraints -

Hierarchical Musical Instrument Separation -

Generating Visual Dynamics from Sound and Context -

Adversarially-Contrastive Optimal Transport -

Online Feature Extractor Network -

MotionNet -

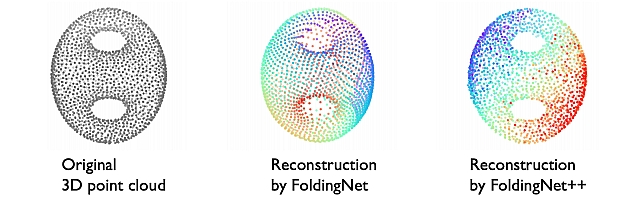

FoldingNet++ -

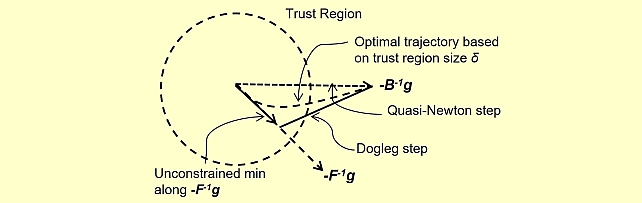

Quasi-Newton Trust Region Policy Optimization -

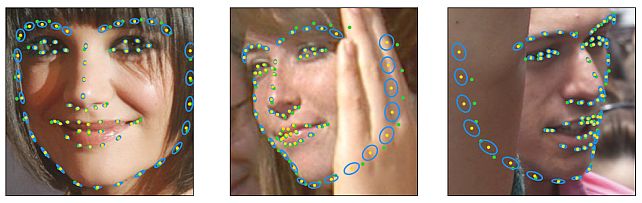

Landmarks’ Location, Uncertainty, and Visibility Likelihood -

Robust Iterative Data Estimation -

Gradient-based Nikaido-Isoda -

Discriminative Subspace Pooling

-