Robotics

Where hardware, software and machine intelligence come together.

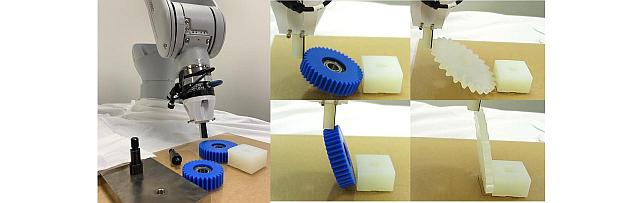

Our research is interdisciplinary and focuses on sensing, planning, reasoning, and control of single and multi-agent systems, including both manipulation and mobile robots. We strive to develop algorithms and methods for factory automation, smart building and transportation applications using machine learning, computer vision, RF/optical sensing, wireless communications, control theory and signal processing. Key research themes include bin picking and object manipulation, sensing and mapping of indoor areas, coordinated control of robot swarms, as well as robot learning and simulation.

Researchers

- Diego Romeres
- Daniel N. Nikovski
- Stefano Di Cairano
- Siddarth Jain
- Arvind Raghunathan
- Radu Corcodel
- Yebin Wang
- William S. Yerazunis
- Toshiaki Koike-Akino
- Abraham P. Vinod
- Chiori Hori
- Avishai Weiss
- Tim K. Marks
- Jonathan Le Roux
- Scott A. Bortoff
- Anoop Cherian
- Alexander Schperberg
- Ye Wang
- Bingnan Wang
- Matthew Brand
- Pedro Miraldo
- Philip V. Orlik
- Purnanand Elango
- Abraham Goldsmith
- Jianlin Guo
- Jing Liu
- Hassan Mansour
- Yoshiki Masuyama
- Saviz Mowlavi
- Zhaolin Ren
- Anthony Vetro
- Kei Suzuki

Awards

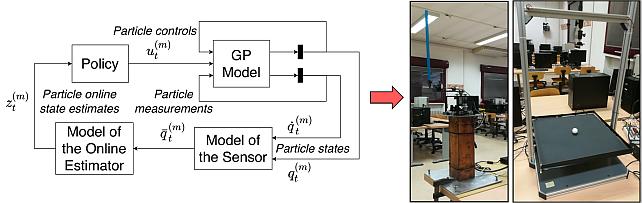

AWARD: University of Padua and MERL team wins the AI Olympics with RealAIGym competition at IROS24

Date: October 17, 2024

Awarded to: Niccolò Turcato, Alberto Dalla Libera, Giulio Giacomuzzo, Ruggero Carli, Diego Romeres

MERL Contact: Diego Romeres

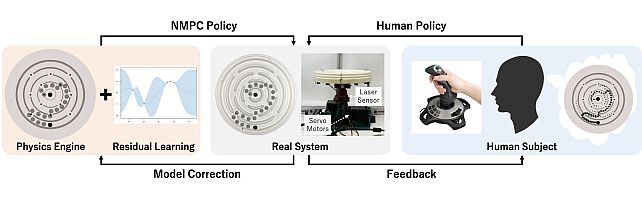

Research Areas: Artificial Intelligence, Dynamical Systems, Machine Learning, Robotics

Brief: A team composed of the control group at the University of Padua and MERL's Optimization and Robotics team ranked 1st among the 4 finalist teams at the 2nd AI Olympics with RealAIGym competition at IROS 24, which focused on control of under-actuated robots. The team consisted of Niccolò Turcato, Alberto Dalla Libera, Giulio Giacomuzzo, Ruggero Carli and Diego Romeres. The competition was organized by the German Research Center for Artificial Intelligence (DFKI), the Technical University of Darmstadt, and Chalmers University of Technology.

The competition and award ceremony were hosted by the IEEE International Conference on Intelligent Robots and Systems (IROS) on October 17, 2024 in Abu Dhabi, UAE. Diego Romeres presented the team's method, based on a model-based reinforcement learning algorithm called MC-PILCO.


AWARD: Honorable Mention Award at NeurIPS 23 Instruction Workshop

Date: December 15, 2023

Awarded to: Lingfeng Sun, Devesh K. Jha, Chiori Hori, Siddarth Jain, Radu Corcodel, Xinghao Zhu, Masayoshi Tomizuka and Diego Romeres

MERL Contacts: Radu Corcodel; Chiori Hori; Siddarth Jain; Diego Romeres

Research Areas: Artificial Intelligence, Machine Learning, Robotics

Brief: MERL researchers received an Honorable Mention award at the Workshop on Instruction Tuning and Instruction Following at the NeurIPS 2023 conference in New Orleans. The workshop focused on instruction tuning and instruction following for Large Language Models (LLMs). MERL researchers presented their work on interactive planning using LLMs for partially observable robotic tasks during the workshop's oral presentation session.

AWARD: Joint University of Padua-MERL team wins Challenge 'AI Olympics With RealAIGym'

Date: August 25, 2023

Awarded to: Alberto Dalla Libera, Niccolò Turcato, Giulio Giacomuzzo, Ruggero Carli, Diego Romeres

MERL Contact: Diego Romeres

Research Areas: Artificial Intelligence, Machine Learning, Robotics

Brief: A joint team from the University of Padua and MERL ranked 1st in the IJCAI 2023 Challenge "AI Olympics With RealAIGym: Is AI Ready for Athletic Intelligence in the Real World?". The team consisted of MERL researcher Diego Romeres and a group from the University of Padua (UniPD): Alberto Dalla Libera, Ph.D., Ph.D. candidates Niccolò Turcato and Giulio Giacomuzzo, and Prof. Ruggero Carli.

The International Joint Conference on Artificial Intelligence (IJCAI) is a premier gathering for AI researchers and organizes several competitions. This year the competition CC7 "AI Olympics With RealAIGym: Is AI Ready for Athletic Intelligence in the Real World?" consisted of two stages: simulation and real-robot experiments on two under-actuated robotic systems. The two robotic systems were treated as separate tracks, and one final winner was selected for each track based on specific performance criteria in the control tasks.

The UniPD-MERL team competed and won in both tracks. The team's system made strong use of a model-based reinforcement learning algorithm called MC-PILCO, which we recently published in the journal IEEE Transactions on Robotics.


News & Events

NEWS: MERL Researcher Diego Romeres Collaborates with Mitsubishi Electric and University of Padua to Advance Physics-Embedded AI for Predictive Equipment Maintenance

Date: December 10, 2025

MERL Contact: Diego Romeres

Research Areas: Artificial Intelligence, Machine Learning, Robotics

Brief: Mitsubishi Electric Research Laboratories (MERL) researchers, together with collaborators at Mitsubishi Electric’s Information Technology R&D Center in Kamakura, Kanagawa Prefecture, Japan, and the Department of Information Engineering at the University of Padua, have developed a cutting-edge physics-embedded AI technology that substantially improves the accuracy of equipment degradation estimation using minimal training data. This collaborative effort has culminated in a press release by Mitsubishi Electric Corporation announcing the new AI technology as part of its Neuro-Physical AI initiative under the Maisart program.

The interdisciplinary team, including MERL Senior Principal Research Scientist and Team Leader Diego Romeres and University of Padua researchers Alberto Dalla Libera and Giulio Giacomuzzo, combined expertise in machine learning, physical modeling, and real-world industrial systems to embed physics-based models directly into AI frameworks. By training AI with theoretical physical laws and real operational data, the resulting system delivers reliable degradation estimates on the torque of robotic arms even with limited datasets. This result addresses key challenges in preventive maintenance for complex manufacturing environments and supports reduced downtime, maintained quality, and lower lifecycle costs.

The successful integration of these foundational research efforts into Mitsubishi Electric’s business-scale AI solutions exemplifies MERL’s commitment to translating fundamental innovation into real-world impact.


NEWS: MERL Researchers at NeurIPS 2025 presented 2 conference papers, 5 workshop papers, and organized a workshop.

Date: December 2, 2025 - December 7, 2025

Where: San Diego

MERL Contacts: Petros T. Boufounos; Anoop Cherian; Radu Corcodel; Stefano Di Cairano; Chiori Hori; Christopher R. Laughman; Suhas Anand Lohit; Pedro Miraldo; Saviz Mowlavi; Kuan-Chuan Peng; Arvind Raghunathan; Diego Romeres; Abraham P. Vinod; Pu (Perry) Wang

Research Areas: Artificial Intelligence, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio

Brief: MERL researchers presented 2 main-conference papers and 5 workshop papers, and organized a workshop, at NeurIPS 2025.

Main Conference Papers:

1) Sorachi Kato, Ryoma Yataka, Pu Wang, Pedro Miraldo, Takuya Fujihashi, and Petros Boufounos, "RAPTR: Radar-based 3D Pose Estimation using Transformer", Code available at: https://github.com/merlresearch/radar-pose-transformer

2) Runyu Zhang, Arvind Raghunathan, Jeff Shamma, and Na Li, "Constrained Optimization From a Control Perspective via Feedback Linearization"

Workshop Papers:

1) Yuyou Zhang, Radu Corcodel, Chiori Hori, Anoop Cherian, and Ding Zhao, "SpinBench: Perspective and Rotation as a Lens on Spatial Reasoning in VLMs", NeurIPS 2025 Workshop on SPACE in Vision, Language, and Embodied AI (SpaVLE) (Best Paper Runner-up)

2) Xiaoyu Xie, Saviz Mowlavi, and Mouhacine Benosman, "Smooth and Sparse Latent Dynamics in Operator Learning with Jerk Regularization", Workshop on Machine Learning and the Physical Sciences (ML4PS)

3) Spencer Hutchinson, Abraham Vinod, François Germain, Stefano Di Cairano, Christopher Laughman, and Ankush Chakrabarty, "Quantile-SMPC for Grid-Interactive Buildings with Multivariate Temporal Fusion Transformers", Workshop on UrbanAI: Harnessing Artificial Intelligence for Smart Cities (UrbanAI)

4) Yuki Shirai, Kei Ota, Devesh Jha, and Diego Romeres, "Sim-to-Real Contact-Rich Pivoting via Optimization-Guided RL with Vision and Touch", Workshop on Embodied World Models for Decision Making

5) Mark Van der Merwe and Devesh Jha, "In-Context Policy Iteration for Dynamic Manipulation", Workshop on Embodied World Models for Decision Making

Workshop Organized:

MERL members co-organized the Multimodal Algorithmic Reasoning (MAR) Workshop (https://marworkshop.github.io/neurips25/). Organizers: Anoop Cherian (Mitsubishi Electric Research Laboratories), Kuan-Chuan Peng (Mitsubishi Electric Research Laboratories), Suhas Lohit (Mitsubishi Electric Research Laboratories), Honglu Zhou (Salesforce AI Research), Kevin Smith (Massachusetts Institute of Technology), and Joshua B. Tenenbaum (Massachusetts Institute of Technology).


Internships

- OR0249: Internship - Whole-body manipulation for quadrupedal robots
- CI0190: Internship - IoT Network Methodology
- CA0274: Internship - Infrastructure inspection/repair using heterogeneous robot teams

Recent Publications

- Yuki Shirai, Kei Ota, Devesh K. Jha, and Diego Romeres, "Learning Non-prehensile Manipulation with Force and Vision Feedback Using Optimization-based Demonstrations", IEEE Robotics and Automation Letters, January 2026. TR2026-011

      @article{Shirai2026jan,
        author = {Shirai, Yuki and Ota, Kei and Jha, Devesh K. and Romeres, Diego},
        title = {{Learning Non-prehensile Manipulation with Force and Vision Feedback Using Optimization-based Demonstrations}},
        journal = {IEEE Robotics and Automation Letters},
        year = 2026,
        month = jan,
        url = {https://www.merl.com/publications/TR2026-011}
      }

- Davide De Lazzari, Matteo Terreran, Giulio Giacomuzzo, Siddarth Jain, Pietro Falco, Ruggero Carli, Stefano Ghidoni, and Diego Romeres, "Real-time Human Progress Estimation with Online Dynamic Time Warping for Collaborative Robotics", Frontiers, December 2025. TR2025-173

      @article{DeLazzari2025dec,
        author = {De Lazzari, Davide and Terreran, Matteo and Giacomuzzo, Giulio and Jain, Siddarth and Falco, Pietro and Carli, Ruggero and Ghidoni, Stefano and Romeres, Diego},
        title = {{Real-time Human Progress Estimation with Online Dynamic Time Warping for Collaborative Robotics}},
        journal = {Frontiers},
        year = 2025,
        month = dec,
        url = {https://www.merl.com/publications/TR2025-173}
      }

- Mark Van der Merwe, Kei Ota, Dmitry Berenson, Nima Fazeli, and Devesh K. Jha, "Simultaneous Extrinsic Contact and In-Hand Pose Estimation via Distributed Tactile Sensing", IEEE RA-L, December 2025. TR2026-002

      @article{VanderMerwe2025dec2,
        author = {Van der Merwe, Mark and Ota, Kei and Berenson, Dmitry and Fazeli, Nima and Jha, Devesh K.},
        title = {{Simultaneous Extrinsic Contact and In-Hand Pose Estimation via Distributed Tactile Sensing}},
        journal = {IEEE RA-L},
        year = 2025,
        month = dec,
        url = {https://www.merl.com/publications/TR2026-002}
      }

- Samet Uzun, Behcet Acikmese, and Stefano Di Cairano, "Motion Planning for Information Acquisition via Continuous-time Successive Convexification", IEEE Conference on Decision and Control (CDC), December 2025. TR2025-170

      @inproceedings{Uzun2025dec,
        author = {Uzun, Samet and Acikmese, Behcet and {Di Cairano}, Stefano},
        title = {{Motion Planning for Information Acquisition via Continuous-time Successive Convexification}},
        booktitle = {IEEE Conference on Decision and Control (CDC)},
        year = 2025,
        month = dec,
        url = {https://www.merl.com/publications/TR2025-170}
      }

- Chiori Hori, Yoshiki Masuyama, Siddarth Jain, Radu Corcodel, Devesh K. Jha, Diego Romeres, and Jonathan Le Roux, "Robot Confirmation Generation and Action Planning Using Long-context Q-Former Integrated with Multimodal LLM", IEEE Workshop on Automatic Speech Recognition and Understanding (ASRU), December 2025. TR2025-167

      @inproceedings{Hori2025dec,
        author = {Hori, Chiori and Masuyama, Yoshiki and Jain, Siddarth and Corcodel, Radu and Jha, Devesh K. and Romeres, Diego and {Le Roux}, Jonathan},
        title = {{Robot Confirmation Generation and Action Planning Using Long-context Q-Former Integrated with Multimodal LLM}},
        booktitle = {IEEE Workshop on Automatic Speech Recognition and Understanding (ASRU)},
        year = 2025,
        month = dec,
        url = {https://www.merl.com/publications/TR2025-167}
      }

- Yuki Shirai, Kei Ota, Devesh K. Jha, and Diego Romeres, "Sim-to-Real Contact-Rich Pivoting via Optimization-Guided RL with Vision and Touch", Embodied World Models for Decision Making, NeurIPS Workshop, December 2025. TR2025-169

      @inproceedings{Shirai2025dec,
        author = {Shirai, Yuki and Ota, Kei and Jha, Devesh K. and Romeres, Diego},
        title = {{Sim-to-Real Contact-Rich Pivoting via Optimization-Guided RL with Vision and Touch}},
        booktitle = {NeurIPS 2025 Workshop on Embodied World Models for Decision Making},
        year = 2025,
        month = dec,
        url = {https://www.merl.com/publications/TR2025-169}
      }

- Yuyou Zhang, Radu Corcodel, Chiori Hori, Anoop Cherian, and Ding Zhao, "AxisBench: What Can Go Wrong in VLMs’ Spatial Reasoning?", Advances in Neural Information Processing Systems (NeurIPS) workshop, December 2025. TR2025-168

      @inproceedings{Zhang2025dec2,
        author = {Zhang, Yuyou and Corcodel, Radu and Hori, Chiori and Cherian, Anoop and Zhao, Ding},
        title = {{AxisBench: What Can Go Wrong in VLMs’ Spatial Reasoning?}},
        booktitle = {Advances in Neural Information Processing Systems (NeurIPS) workshop},
        year = 2025,
        month = dec,
        url = {https://www.merl.com/publications/TR2025-168}
      }

- Mark Van der Merwe and Devesh K. Jha, "In-Context Policy Iteration for Dynamic Manipulation", Advances in Neural Information Processing Systems (NeurIPS) Workshop on Embodied World Models for Decision Making, December 2025. TR2025-163

      @inproceedings{VanderMerwe2025dec,
        author = {Van der Merwe, Mark and Jha, Devesh K.},
        title = {{In-Context Policy Iteration for Dynamic Manipulation}},
        booktitle = {Advances in Neural Information Processing Systems (NeurIPS) Workshop on Embodied World Models for Decision Making},
        year = 2025,
        month = dec,
        url = {https://www.merl.com/publications/TR2025-163}
      }

Software & Data Downloads

- Lagrangian Inspired Polynomial for Robot Inverse Dynamics
- Monte Carlo Probabilistic Inference for Learning COntrol
- Python-based Robotic Control & Optimization Package
- Context-Aware Zero Shot Learning
- Online Feature Extractor Network

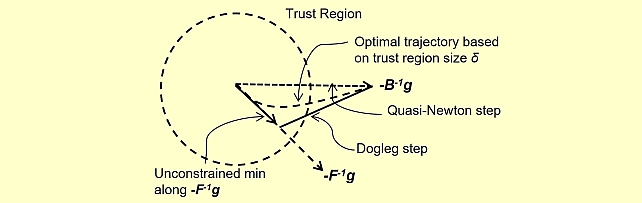

- Quasi-Newton Trust Region Policy Optimization
- Circular Maze Environment