TR2024-026

NIIRF: Neural IIR Filter Field for HRTF Upsampling and Personalization


- Masuyama, Y., Wichern, G., Germain, F.G., Pan, Z., Khurana, S., Hori, C., Le Roux, J., "NIIRF: Neural IIR Filter Field for HRTF Upsampling and Personalization", IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), DOI: 10.1109/ICASSP48485.2024.10448477, March 2024, pp. 1016-1020.

@inproceedings{Masuyama2024mar,
  author = {Masuyama, Yoshiki and Wichern, Gordon and Germain, François G and Pan, Zexu and Khurana, Sameer and Hori, Chiori and {Le Roux}, Jonathan},
  title = {{NIIRF: Neural IIR Filter Field for HRTF Upsampling and Personalization}},
  booktitle = {IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP)},
  year = 2024,
  pages = {1016--1020},
  month = mar,
  doi = {10.1109/ICASSP48485.2024.10448477},
  url = {https://www.merl.com/publications/TR2024-026}
}




Abstract:

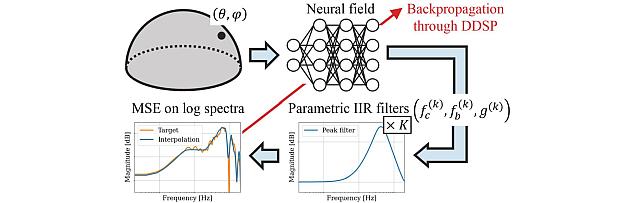

Head-related transfer functions (HRTFs) are important for immersive audio, and their spatial interpolation has been studied to upsample finite measurements. Recently, neural fields (NFs), which map from sound source direction to HRTF, have gained attention. Existing NF-based methods focused on estimating the magnitude of the HRTF from a given sound source direction, and the magnitude is converted to a finite impulse response (FIR) filter. We propose the neural infinite impulse response filter field (NIIRF) method that instead estimates the coefficients of cascaded IIR filters. IIR filters mimic the modal nature of HRTFs and thus need fewer coefficients than FIR filters to approximate them well. We find that our method can match the performance of existing NF-based methods on multiple datasets, even outperforming them when measurements are sparse. We also explore approaches to personalize the NF to a subject and experimentally find low-rank adaptation to be effective.
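The efficiency argument can be made concrete with a small numpy sketch: a cascade of second-order IIR sections (biquads) shapes resonances and notches with only five free coefficients per section. The section type is an assumption made for illustration: peaking-EQ biquads from the RBJ Audio EQ Cookbook, parameterized by center frequency, gain, and Q. NIIRF's actual filter parameterization may differ, and the helper names (`peaking_biquad`, `cascade_response`) and parameter values are hypothetical.

```python
import numpy as np


def peaking_biquad(fc, gain_db, q, fs):
    """Peaking-EQ biquad (RBJ Audio EQ Cookbook, assumed section type), normalized so a[0] == 1.

    Returns (b, a) for H(z) = (b0 + b1 z^-1 + b2 z^-2) / (1 + a1 z^-1 + a2 z^-2).
    """
    A = 10.0 ** (gain_db / 40.0)
    w0 = 2.0 * np.pi * fc / fs
    alpha = np.sin(w0) / (2.0 * q)
    b = np.array([1.0 + alpha * A, -2.0 * np.cos(w0), 1.0 - alpha * A])
    a = np.array([1.0 + alpha / A, -2.0 * np.cos(w0), 1.0 - alpha / A])
    return b / a[0], a / a[0]


def cascade_response(sections, freqs, fs):
    """Complex frequency response of cascaded second-order sections at freqs (Hz)."""
    z_inv = np.exp(-2j * np.pi * np.asarray(freqs) / fs)  # z^-1 on the unit circle
    h = np.ones_like(z_inv)
    for b, a in sections:
        # Cascading filters multiplies their frequency responses.
        h *= (b[0] + b[1] * z_inv + b[2] * z_inv**2) / (a[0] + a[1] * z_inv + a[2] * z_inv**2)
    return h


# Hypothetical per-direction parameters standing in for a neural-field output:
# (center frequency [Hz], gain [dB], Q) for each of three peak/notch stages.
fs = 48000.0
params = [(3500.0, 8.0, 2.0), (8000.0, -15.0, 6.0), (12000.0, 6.0, 4.0)]
sections = [peaking_biquad(fc, g, q, fs) for fc, g, q in params]

freqs = np.linspace(20.0, 20000.0, 512)
mag_db = 20.0 * np.log10(np.abs(cascade_response(sections, freqs, fs)))

# Free coefficients: b0, b1, b2, a1, a2 per section (a0 is fixed to 1).
n_coeffs = 5 * len(sections)
print(n_coeffs, mag_db.shape)  # 15 (512,)
```

Here 15 coefficients describe the response for one direction. An FIR filter typically needs many more taps to reproduce sharp notches at comparable frequency resolution. In a neural-field setting, the network would output such per-stage parameters for each queried direction.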


Related News & Events


EVENT: SANE 2025 - Speech and Audio in the Northeast

Date: Friday, November 7, 2025

Location: Google, New York, NY

MERL Contacts: Jonathan Le Roux; Yoshiki Masuyama

Research Areas: Artificial Intelligence, Machine Learning, Speech & Audio

SANE 2025, a one-day event gathering researchers and students in speech and audio from the Northeast of the American continent, was held on Friday, November 7, 2025, at Google in New York, NY.

It was the 12th edition in the SANE series of workshops, which started in 2012 and is typically held every year alternately in Boston and New York. Since the first edition, the audience has grown to about 200 participants and 50 posters each year, and SANE has established itself as a vibrant, must-attend event for the speech and audio community across the northeast and beyond.

SANE 2025 featured invited talks by six leading researchers from the Northeast as well as from the wider community: Dan Ellis (Google DeepMind), Leibny Paola Garcia Perera (Johns Hopkins University), Yuki Mitsufuji (Sony AI), Julia Hirschberg (Columbia University), Yoshiki Masuyama (MERL), and Robin Scheibler (Google DeepMind). It also featured a lively poster session with 50 posters.

MERL Speech and Audio Team's Yoshiki Masuyama presented a well-received overview of the team's recent work on "Neural Fields for Spatial Audio Modeling". His talk highlighted how neural fields are reshaping spatial audio research by enabling flexible, data-driven interpolation of head-related transfer functions and room impulse responses. He also discussed the integration of sound-propagation physics into neural field models through physics-informed neural networks, showcasing MERL’s advances at the intersection of acoustics and deep learning.

SANE 2025 was co-organized by Jonathan Le Roux (MERL), Quan Wang (Google DeepMind), and John R. Hershey (Google DeepMind). SANE remained a free event thanks to generous sponsorship by Google, MERL, Apple, Bose, and Carnegie Mellon University.

Slides and videos of the talks are available from the SANE workshop website and via a YouTube playlist.


AWARD: MERL team wins the Listener Acoustic Personalisation (LAP) 2024 Challenge

Date: August 29, 2024

Awarded to: Yoshiki Masuyama, Gordon Wichern, Francois G. Germain, Christopher Ick, and Jonathan Le Roux

MERL Contacts: Jonathan Le Roux; Gordon Wichern; Yoshiki Masuyama

Research Areas: Artificial Intelligence, Machine Learning, Speech & Audio

MERL's Speech & Audio team ranked 1st out of 7 teams in Task 2 of the 1st SONICOM Listener Acoustic Personalisation (LAP) Challenge, which focused on "Spatial upsampling for obtaining a high-spatial-resolution HRTF from a very low number of directions". The team was led by Yoshiki Masuyama, and also included Gordon Wichern, Francois Germain, MERL intern Christopher Ick, and Jonathan Le Roux.

The LAP Challenge workshop and award ceremony were hosted by the 32nd European Signal Processing Conference (EUSIPCO 2024) on August 29, 2024, in Lyon, France. Yoshiki Masuyama presented the team's method, "Retrieval-Augmented Neural Field for HRTF Upsampling and Personalization", and received the award from Prof. Michele Geronazzo (University of Padova, IT, and Imperial College London, UK), Chair of the Challenge's Organizing Committee.

The LAP challenge aims to explore open problems in personalized spatial audio, with the first edition focusing on the spatial upsampling and interpolation of head-related transfer functions (HRTFs). Immersive audio experiences require HRTFs on dense spatial grids, but measuring them is time-consuming. Although HRTF spatial upsampling has recently shown remarkable progress with approaches involving neural fields, HRTF estimation accuracy remains limited when upsampling from only a few measured directions, e.g., 3 or 5 measurements. The MERL team tackled this problem by proposing a retrieval-augmented neural field (RANF). From a library of subjects, RANF retrieves a subject whose HRTFs at the measured directions are close to those of the target subject. The retrieved subject's HRTF at the target direction is then fed into the neural field in addition to the desired sound source direction. The team also developed a neural network architecture that can handle an arbitrary number of retrieved subjects, inspired by a multi-channel processing technique called transform-average-concatenate.
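The retrieval step and the transform-average-concatenate (TAC) idea can be sketched in numpy as follows. This is a minimal illustration, not the team's implementation: it assumes a mean squared log-spectral distance for retrieval, uses synthetic random data and untrained random weights, and the function names `retrieve_subjects` and `tac_layer` are hypothetical. TAC transforms each input with shared weights, averages across inputs, and concatenates the average back to each input, so the same weights work for any number of retrieved subjects.

```python
import numpy as np


def retrieve_subjects(target_db, library_db, k):
    """Indices of the k library subjects whose HRTFs best match the target.

    target_db:  (M, F) log-magnitude HRTFs of the target at M measured directions.
    library_db: (S, M, F) log-magnitude HRTFs of S library subjects, same directions.
    Distance: mean squared log-spectral difference (an assumption of this sketch).
    """
    dist = np.mean((library_db - target_db[None]) ** 2, axis=(1, 2))
    return np.argsort(dist)[:k]


def relu(x):
    return np.maximum(x, 0.0)


def tac_layer(x, w_t, w_a, w_c):
    """One transform-average-concatenate block over K inputs (K may vary).

    x: (K, D) features, one row per retrieved subject.
    """
    h = relu(x @ w_t)                 # transform: shared weights for every subject
    avg = relu(h.mean(axis=0) @ w_a)  # average across subjects, then transform
    cat = np.concatenate([h, np.broadcast_to(avg, h.shape)], axis=1)  # concatenate
    return relu(cat @ w_c) + x        # residual connection (a common choice with TAC)


rng = np.random.default_rng(0)
S, M, F, H = 20, 3, 64, 32  # subjects, measured directions, frequency bins, hidden size
D = F                       # feature size: one log-magnitude spectrum per subject

# Synthetic library; the target is constructed to be very close to subject 7.
library_db = rng.normal(size=(S, M, F))
target_db = library_db[7] + 0.01 * rng.normal(size=(M, F))
idx = retrieve_subjects(target_db, library_db, k=4)

# Hypothetical library HRTFs at the *target* direction, used as input features.
library_at_target = rng.normal(size=(S, F))
x = library_at_target[idx]  # (4, D)

w_t = rng.normal(size=(D, H)) / np.sqrt(D)
w_a = rng.normal(size=(H, H)) / np.sqrt(H)
w_c = rng.normal(size=(2 * H, D)) / np.sqrt(2 * H)

y = tac_layer(x, w_t, w_a, w_c)  # (4, D)
pooled = y.mean(axis=0)          # fixed-size summary for any number of subjects
```

Because each output row depends only on its own input and on the across-subject average, reordering the retrieved subjects only reorders the outputs, and the pooled summary is unchanged for any order or number of subjects.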


EVENT: MERL Contributes to ICASSP 2024

Date: Sunday, April 14, 2024 - Friday, April 19, 2024

Location: Seoul, South Korea

MERL Contacts: Petros T. Boufounos; Chiori Hori; Toshiaki Koike-Akino; Jonathan Le Roux; Hassan Mansour; Kieran Parsons; Joshua Rapp; Anthony Vetro; Pu (Perry) Wang; Gordon Wichern

Research Areas: Artificial Intelligence, Computational Sensing, Machine Learning, Robotics, Signal Processing, Speech & Audio

MERL has made numerous contributions to both the organization and the technical program of ICASSP 2024, which is being held in Seoul, Korea, from April 14 to 19, 2024.

Sponsorship and Awards

MERL is proud to be a Bronze Patron of the conference and will participate in the student job fair on Thursday, April 18. Please join this session to learn more about employment opportunities at MERL, including openings for research scientists, post-docs, and interns.

MERL is pleased to be the sponsor of two IEEE Awards that will be presented at the conference. We congratulate Prof. Stéphane G. Mallat, the recipient of the 2024 IEEE Fourier Award for Signal Processing, and Prof. Keiichi Tokuda, the recipient of the 2024 IEEE James L. Flanagan Speech and Audio Processing Award.

Jonathan Le Roux, MERL Speech and Audio Senior Team Leader, will also be recognized during the Awards Ceremony for his recent elevation to IEEE Fellow.

Technical Program

MERL will present 13 papers in the main conference on a wide range of topics including automated audio captioning, speech separation, audio generative models, speech and sound synthesis, spatial audio reproduction, multimodal indoor monitoring, radar imaging, depth estimation, physics-informed machine learning, and integrated sensing and communications (ISAC). Three workshop papers have also been accepted for presentation on audio-visual speaker diarization, music source separation, and music generative models.

Perry Wang is the co-organizer of the Workshop on Signal Processing and Machine Learning Advances in Automotive Radars (SPLAR), held on Sunday, April 14. It features keynote talks from leaders in both academia and industry, peer-reviewed workshop papers, and lightning talks from ICASSP regular tracks on signal processing and machine learning for automotive radar and, more generally, radar perception.

Gordon Wichern will present an invited keynote talk on analyzing and interpreting audio deep learning models at the Workshop on Explainable Machine Learning for Speech and Audio (XAI-SA), held on Monday, April 15. He will also appear in a panel discussion on interpretable audio AI at the workshop.

Perry Wang also co-organizes a two-part special session on Next-Generation Wi-Fi Sensing (SS-L9 and SS-L13), which will be held on Thursday afternoon, April 18. The special session includes papers on PHY-layer-oriented signal processing and data-driven deep learning advances, and supports upcoming IEEE 802.11bf WLAN sensing standardization activities.

Petros Boufounos is participating as a mentor in ICASSP’s Micro-Mentoring Experience Program (MiME).

About ICASSP

ICASSP is the flagship conference of the IEEE Signal Processing Society, and the world's largest and most comprehensive technical conference focused on research advances and the latest technological developments in signal and information processing. The event attracts more than 3000 participants.


Related Publication

@article{Masuyama2024feb,
  author = {Masuyama, Yoshiki and Wichern, Gordon and Germain, François G and Pan, Zexu and Khurana, Sameer and Hori, Chiori and {Le Roux}, Jonathan},
  title = {{NIIRF: Neural IIR Filter Field for HRTF Upsampling and Personalization}},
  journal = {arXiv},
  year = 2024,
  month = feb,
  url = {https://arxiv.org/abs/2402.17907}
}