- Date: Sunday, April 14, 2024 - Friday, April 19, 2024

Location: Seoul, South Korea

MERL Contacts: Petros T. Boufounos; François Germain; Chiori Hori; Sameer Khurana; Toshiaki Koike-Akino; Jonathan Le Roux; Hassan Mansour; Zexu Pan; Kieran Parsons; Joshua Rapp; Anthony Vetro; Pu (Perry) Wang; Gordon Wichern; Ryoma Yataka

Research Areas: Artificial Intelligence, Computational Sensing, Machine Learning, Robotics, Signal Processing, Speech & Audio

Brief - MERL has made numerous contributions to both the organization and technical program of ICASSP 2024, which is being held in Seoul, Korea from April 14-19, 2024.

Sponsorship and Awards

MERL is proud to be a Bronze Patron of the conference and will participate in the student job fair on Thursday, April 18. Please join this session to learn more about employment opportunities at MERL, including openings for research scientists, post-docs, and interns.

MERL is pleased to be the sponsor of two IEEE Awards that will be presented at the conference. We congratulate Prof. Stéphane G. Mallat, the recipient of the 2024 IEEE Fourier Award for Signal Processing, and Prof. Keiichi Tokuda, the recipient of the 2024 IEEE James L. Flanagan Speech and Audio Processing Award.

Jonathan Le Roux, MERL Speech and Audio Senior Team Leader, will also be recognized during the Awards Ceremony for his recent elevation to IEEE Fellow.

Technical Program

MERL will present 13 papers in the main conference on a wide range of topics including automated audio captioning, speech separation, audio generative models, speech and sound synthesis, spatial audio reproduction, multimodal indoor monitoring, radar imaging, depth estimation, physics-informed machine learning, and integrated sensing and communications (ISAC). Three workshop papers have also been accepted for presentation on audio-visual speaker diarization, music source separation, and music generative models.

Perry Wang is the co-organizer of the Workshop on Signal Processing and Machine Learning Advances in Automotive Radars (SPLAR), held on Sunday, April 14. It features keynote talks from leaders in both academia and industry, peer-reviewed workshop papers, and lightning talks from ICASSP regular tracks on signal processing and machine learning for automotive radar and, more generally, radar perception.

Gordon Wichern will present an invited keynote talk on analyzing and interpreting audio deep learning models at the Workshop on Explainable Machine Learning for Speech and Audio (XAI-SA), held on Monday, April 15. He will also appear in a panel discussion on interpretable audio AI at the workshop.

Perry Wang also co-organizes a two-part special session on Next-Generation Wi-Fi Sensing (SS-L9 and SS-L13) which will be held on Thursday afternoon, April 18. The special session includes papers on PHY-layer oriented signal processing and data-driven deep learning advances, and supports upcoming 802.11bf WLAN Sensing Standardization activities.

Petros Boufounos is participating as a mentor in ICASSP’s Micro-Mentoring Experience Program (MiME).

About ICASSP

ICASSP is the flagship conference of the IEEE Signal Processing Society, and the world's largest and most comprehensive technical conference focused on the research advances and latest technological development in signal and information processing. The event attracts more than 3000 participants.

-

- Date & Time: Wednesday, January 31, 2024; 12:00 PM

Speaker: Greta Tuckute, MIT

MERL Host: Sameer Khurana

Research Areas: Artificial Intelligence, Machine Learning, Speech & Audio

Abstract  Advances in machine learning have led to powerful models for audio and language, proficient in tasks like speech recognition and fluent language generation. Beyond their immense utility in engineering applications, these models offer valuable tools for cognitive science and neuroscience. In this talk, I will demonstrate how these artificial neural network models can be used to understand how the human brain processes language. The first part of the talk will cover how audio neural networks serve as computational accounts for brain activity in the auditory cortex. The second part will focus on the use of large language models, such as those in the GPT family, to non-invasively control brain activity in the human language system.

-

- Date & Time: Thursday, September 28, 2023; 12:00 PM

Speaker: Komei Sugiura, Keio University

MERL Host: Chiori Hori

Research Areas: Artificial Intelligence, Machine Learning, Robotics, Speech & Audio

Abstract  Recent advances in multimodal models that fuse vision and language are revolutionizing robotics. In this lecture, I will begin by introducing recent multimodal foundational models and their applications in robotics. The second topic of this talk will address our recent work on multimodal language processing in robotics. The shortage of home care workers has become a pressing societal issue, and the use of domestic service robots (DSRs) to assist individuals with disabilities is seen as a possible solution. I will present our work on DSRs that are capable of open-vocabulary mobile manipulation, referring expression comprehension and segmentation models for everyday objects, and future captioning methods for cooking videos and DSRs.

-

- Date: Sunday, June 4, 2023 - Saturday, June 10, 2023

Location: Rhodes Island, Greece

MERL Contacts: Petros T. Boufounos; François Germain; Toshiaki Koike-Akino; Jonathan Le Roux; Dehong Liu; Suhas Lohit; Yanting Ma; Hassan Mansour; Joshua Rapp; Anthony Vetro; Pu (Perry) Wang; Gordon Wichern

Research Areas: Artificial Intelligence, Computational Sensing, Machine Learning, Signal Processing, Speech & Audio

Brief - MERL has made numerous contributions to both the organization and technical program of ICASSP 2023, which is being held in Rhodes Island, Greece from June 4-10, 2023.

Organization

Petros Boufounos is serving as General Co-Chair of the conference this year, where he has been involved in all aspects of conference planning and execution.

Perry Wang is the organizer of a special session on Radar-Assisted Perception (RAP), which will be held on Wednesday, June 7. The session will feature talks on signal processing and deep learning for radar perception, pose estimation, and mutual interference mitigation with speakers from both academia (Carnegie Mellon University, Virginia Tech, University of Illinois Urbana-Champaign) and industry (Mitsubishi Electric, Bosch, Waveye).

Anthony Vetro is the co-organizer of the Workshop on Signal Processing for Autonomous Systems (SPAS), which will be held on Monday, June 5, and feature invited talks from leaders in both academia and industry on timely topics related to autonomous systems.

Sponsorship

MERL is proud to be a Silver Patron of the conference and will participate in the student job fair on Thursday, June 8. Please join this session to learn more about employment opportunities at MERL, including openings for research scientists, post-docs, and interns.

MERL is pleased to be the sponsor of two IEEE Awards that will be presented at the conference. We congratulate Prof. Rabab Ward, the recipient of the 2023 IEEE Fourier Award for Signal Processing, and Prof. Alexander Waibel, the recipient of the 2023 IEEE James L. Flanagan Speech and Audio Processing Award.

Technical Program

MERL is presenting 13 papers in the main conference on a wide range of topics including source separation and speech enhancement, radar imaging, depth estimation, motor fault detection, time series recovery, and point clouds. One workshop paper has also been accepted for presentation on self-supervised music source separation.

Perry Wang has been invited to give a keynote talk on Wi-Fi sensing and related standards activities at the Workshop on Integrated Sensing and Communications (ISAC), which will be held on Sunday, June 4.

Additionally, Anthony Vetro will present a Perspective Talk on Physics-Grounded Machine Learning, which is scheduled for Thursday, June 8.

About ICASSP

ICASSP is the flagship conference of the IEEE Signal Processing Society, and the world's largest and most comprehensive technical conference focused on the research advances and latest technological development in signal and information processing. The event attracts more than 2000 participants each year.

-

- Date & Time: Tuesday, April 25, 2023; 11:00 AM

Speaker: Dan Stowell, Tilburg University / Naturalis Biodiversity Centre

MERL Host: Gordon Wichern

Research Areas: Artificial Intelligence, Machine Learning, Speech & Audio

Abstract  Machine learning can be used to identify animals from their sound. This could be a valuable tool for biodiversity monitoring, and for understanding animal behaviour and communication. But to get there, we need very high accuracy at fine-grained acoustic distinctions across hundreds of categories in diverse conditions. In our group we are studying how to achieve this at continental scale. I will describe aspects of bioacoustic data that challenge even the latest deep learning workflows, and our work to address this. Methods covered include adaptive feature representations, deep embeddings and few-shot learning.

-

- Date & Time: Monday, December 12, 2022; 1:00pm-5:30pm ET

Location: Mitsubishi Electric Research Laboratories (MERL)/Virtual

Research Areas: Applied Physics, Artificial Intelligence, Communications, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Electric Systems, Electronic and Photonic Devices, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio, Digital Video

Brief - Join MERL's virtual open house on December 12th, 2022! Featuring a keynote, live sessions, research area booths, and opportunities to interact with our research team. Discover who we are and what we do, and learn about internship and employment opportunities.

-

- Date: Thursday, October 6, 2022

Location: Kendall Square, Cambridge, MA

MERL Contacts: Anoop Cherian; Jonathan Le Roux

Research Areas: Artificial Intelligence, Computer Vision, Machine Learning, Speech & Audio

Brief - SANE 2022, a one-day event gathering researchers and students in speech and audio from the Northeast of the American continent, was held on Thursday, October 6, 2022 in Kendall Square, Cambridge, MA.

It was the 9th edition in the SANE series of workshops, which started in 2012 and was held every year alternately in Boston and New York until 2019. Since the first edition, the audience has grown to a record 200 participants and 45 posters in 2019. After a 2-year hiatus due to the pandemic, SANE returned with an in-person gathering of 140 students and researchers.

SANE 2022 featured invited talks by seven leading researchers from the Northeast: Rupal Patel (Northeastern/VocaliD), Wei-Ning Hsu (Meta FAIR), Scott Wisdom (Google), Tara Sainath (Google), Shinji Watanabe (CMU), Anoop Cherian (MERL), and Chuang Gan (UMass Amherst/MIT-IBM Watson AI Lab). It also featured a lively poster session with 29 posters.

SANE 2022 was co-organized by Jonathan Le Roux (MERL), Arnab Ghoshal (Apple), John Hershey (Google), and Shinji Watanabe (CMU). SANE remained a free event thanks to generous sponsorship by Bose, Google, MERL, and Microsoft.

Slides and videos of the talks will be released on the SANE workshop website.

-

- Date & Time: Tuesday, September 6, 2022; 12:00 PM EDT

Speaker: Chuang Gan, UMass Amherst & MIT-IBM Watson AI Lab

MERL Host: Jonathan Le Roux

Research Areas: Artificial Intelligence, Computer Vision, Machine Learning, Speech & Audio

Abstract  Human sensory perception of the physical world is rich and multimodal and can flexibly integrate input from all five sensory modalities -- vision, touch, smell, hearing, and taste. However, in AI, attention has primarily focused on visual perception. In this talk, I will introduce my efforts in connecting vision with sound, which will allow machine perception systems to see objects and infer physics from multi-sensory data. In the first part of my talk, I will introduce a self-supervised approach that could learn to parse images and separate the sound sources by watching and listening to unlabeled videos without requiring additional manual supervision. In the second part of my talk, I will show we may further infer the underlying causal structure in 3D environments through visual and auditory observations. This enables agents to seek the sound source of repeating environmental sound (e.g., alarm) or identify what object has fallen, and where, from an intermittent impact sound.

-

- Date & Time: Tuesday, March 1, 2022; 1:00 PM EST

Speaker: David Harwath, The University of Texas at Austin

MERL Host: Chiori Hori

Research Areas: Artificial Intelligence, Machine Learning, Speech & Audio

Abstract  Humans learn spoken language and visual perception at an early age by being immersed in the world around them. Why can't computers do the same? In this talk, I will describe our ongoing work to develop methodologies for grounding continuous speech signals at the raw waveform level to natural image scenes. I will first present self-supervised models capable of discovering discrete, hierarchical structure (words and sub-word units) in the speech signal. Instead of conventional annotations, these models learn from correspondences between speech sounds and visual patterns such as objects and textures. Next, I will demonstrate how these discrete units can be used as a drop-in replacement for text transcriptions in an image captioning system, enabling us to directly synthesize spoken descriptions of images without the need for text as an intermediate representation. Finally, I will describe our latest work on Transformer-based models of visually-grounded speech. These models significantly outperform the prior state of the art on semantic speech-to-image retrieval tasks, and also learn representations that are useful for a multitude of other speech processing tasks.

-

- Date & Time: Thursday, December 9, 2021; 1:00pm - 5:30pm EST

Location: Virtual Event

Speaker: Prof. Melanie Zeilinger, ETH

Research Areas: Applied Physics, Artificial Intelligence, Communications, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Electric Systems, Electronic and Photonic Devices, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio, Digital Video, Human-Computer Interaction, Information Security

Brief - MERL is excited to announce the second keynote speaker for our Virtual Open House 2021:

Prof. Melanie Zeilinger from ETH.

Our virtual open house will take place on December 9, 2021, 1:00pm - 5:30pm (EST).

Join us to learn more about who we are, what we do, and discuss our internship and employment opportunities. Prof. Zeilinger's talk is scheduled for 3:15pm - 3:45pm (EST).

Registration: https://mailchi.mp/merl/merlvoh2021

Keynote Title: Control Meets Learning - On Performance, Safety and User Interaction

Abstract: With increasing sensing and communication capabilities, physical systems today are becoming one of the largest generators of data, making learning a central component of autonomous control systems. While this paradigm shift offers tremendous opportunities to address new levels of system complexity, variability and user interaction, it also raises fundamental questions of learning in a closed-loop dynamical control system. In this talk, I will present some of our recent results showing how even safety-critical systems can leverage the potential of data. I will first briefly present concepts for using learning for automatic controller design and for a new safety framework that can equip any learning-based controller with safety guarantees. The second part will then discuss how expert and user information can be utilized to optimize system performance, where I will particularly highlight an approach developed together with MERL for personalizing the motion planning in autonomous driving to the individual driving style of a passenger.

-

- Date & Time: Thursday, December 9, 2021; 1:00pm - 5:30pm EST

Location: Virtual Event

Speaker: Prof. Ashok Veeraraghavan, Rice University

Research Areas: Applied Physics, Artificial Intelligence, Communications, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Electric Systems, Electronic and Photonic Devices, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio, Digital Video, Human-Computer Interaction, Information Security

Brief - MERL is excited to announce the first keynote speaker for our Virtual Open House 2021:

Prof. Ashok Veeraraghavan from Rice University.

Our virtual open house will take place on December 9, 2021, 1:00pm - 5:30pm (EST).

Join us to learn more about who we are, what we do, and discuss our internship and employment opportunities. Prof. Veeraraghavan's talk is scheduled for 1:15pm - 1:45pm (EST).

Registration: https://mailchi.mp/merl/merlvoh2021

Keynote Title: Computational Imaging: Beyond the limits imposed by lenses.

Abstract: The lens has long been a central element of cameras, since its early use in the mid-nineteenth century by Niepce, Talbot, and Daguerre. The role of the lens, from the Daguerreotype to modern digital cameras, is to refract light to achieve a one-to-one mapping between a point in the scene and a point on the sensor. This effect enables the sensor to compute a particular two-dimensional (2D) integral of the incident 4D light-field. We propose a radical departure from this practice and the many limitations it imposes. In the talk we focus on two inter-related research projects that attempt to go beyond lens-based imaging.

First, we discuss our lab’s recent efforts to build flat, extremely thin imaging devices by replacing the lens in a conventional camera with an amplitude mask and computational reconstruction algorithms. These lensless cameras, called FlatCams, can be less than a millimeter in thickness and enable applications where size, weight, thickness or cost are the driving factors. Second, we discuss high-resolution, long-distance imaging using Fourier Ptychography, where the need for a large-aperture, aberration-corrected lens is replaced by a camera array and associated phase retrieval algorithms, resulting again in order-of-magnitude reductions in size, weight and cost. Finally, I will spend a few minutes discussing how the holistic computational imaging approach can be used to create ultra-high-resolution wavefront sensors.

-

- Date & Time: Thursday, December 9, 2021; 1:00pm - 5:30pm (EST)

Location: Virtual Event

Research Areas: Applied Physics, Artificial Intelligence, Communications, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Electric Systems, Electronic and Photonic Devices, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio, Digital Video, Human-Computer Interaction, Information Security

Brief - Mitsubishi Electric Research Laboratories cordially invites you to join our Virtual Open House, on December 9, 2021, 1:00pm - 5:30pm (EST).

The event will feature keynotes, live sessions, research area booths, and time for open interactions with our researchers. Join us to learn more about who we are, what we do, and discuss our internship and employment opportunities.

Registration: https://mailchi.mp/merl/merlvoh2021

-

- Date & Time: Tuesday, September 28, 2021; 1:00 PM EST

Speaker: Dr. Ruohan Gao, Stanford University

MERL Host: Gordon Wichern

Research Areas: Computer Vision, Machine Learning, Speech & Audio

Abstract  While computer vision has made significant progress by "looking" — detecting objects, actions, or people based on their appearance — it often does not listen. Yet cognitive science tells us that perception develops by making use of all our senses without intensive supervision. Towards this goal, in this talk I will present my research on audio-visual learning — We disentangle object sounds from unlabeled video, use audio as an efficient preview for action recognition in untrimmed video, decode the monaural soundtrack into its binaural counterpart by injecting visual spatial information, and use echoes to interact with the environment for spatial image representation learning. Together, these are steps towards multimodal understanding of the visual world, where audio serves as both the semantic and spatial signals. In the end, I will also briefly talk about our latest work on multisensory learning for robotics.

-

- Date & Time: Wednesday, December 9, 2020; 1:00-5:00PM EST

Location: Virtual

MERL Contacts: Elizabeth Phillips; Anthony Vetro

Research Areas: Applied Physics, Artificial Intelligence, Communications, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Electric Systems, Electronic and Photonic Devices, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio

-

- Date & Time: Thursday, November 29, 2018; 4-6pm

Location: 201 Broadway, 8th floor, Cambridge, MA

MERL Contacts: Elizabeth Phillips; Anthony Vetro

Research Areas: Applied Physics, Artificial Intelligence, Communications, Computational Sensing, Computer Vision, Control, Data Analytics, Dynamical Systems, Electric Systems, Electronic and Photonic Devices, Machine Learning, Multi-Physical Modeling, Optimization, Robotics, Signal Processing, Speech & Audio

Brief - Snacks, demos, science: On Thursday 11/29, Mitsubishi Electric Research Labs (MERL) will host an open house for graduate+ students interested in internships, post-docs, and research scientist positions. The event will be held from 4-6pm and will feature demos & short presentations in our main areas of research including artificial intelligence, robotics, computer vision, speech processing, optimization, machine learning, data analytics, signal processing, communications, sensing, control and dynamical systems, as well as multi-physical modeling and electronic devices. MERL is a high-impact, publication-oriented research lab with very extensive internship and university collaboration programs. Most internships lead to publication; many of our interns and staff have gone on to notable careers at MERL and in academia. Come mix with our researchers, see our state-of-the-art technologies, and learn about our research opportunities. Dress code: casual, with resumes.

Pre-registration for the event is strongly encouraged:

merlopenhouse.eventbrite.com

Current internship and employment openings:

www.merl.com/internship/openings

www.merl.com/employment/employment

Information about working at MERL:

www.merl.com/employment.

-

- Date: Thursday, October 18, 2018

Location: Google, Cambridge, MA

MERL Contact: Jonathan Le Roux

Research Area: Speech & Audio

Brief - SANE 2018, a one-day event gathering researchers and students in speech and audio from the Northeast of the American continent, will be held on Thursday, October 18, 2018 at Google, in Cambridge, MA. MERL is one of the organizers and sponsors of the workshop.

It is the 7th edition in the SANE series of workshops, which started at MERL in 2012. Since the first edition, the audience has steadily grown, with a record 180 participants in 2017.

SANE 2018 will feature invited talks by leading researchers from the Northeast, as well as from the international community. It will also feature a lively poster session, open to both students and researchers.

-

- Date & Time: Tuesday, March 6, 2018; 12:00 PM

Speaker: Scott Wisdom, Affectiva

MERL Host: Jonathan Le Roux

Research Area: Speech & Audio

Abstract  Recurrent neural networks (RNNs) are effective, data-driven models for sequential data, such as audio and speech signals. However, like many deep networks, RNNs are essentially black boxes; though they are effective, their weights and architecture are not directly interpretable by practitioners. A major component of my dissertation research is explaining the success of RNNs and constructing new RNN architectures through the process of "deep unfolding," which can construct and explain deep network architectures using an equivalence to inference in statistical models. Deep unfolding yields principled initializations for training deep networks, provides insight into their effectiveness, and assists with interpretation of what these networks learn.

In particular, I will show how RNNs with rectified linear units and residual connections are a particular deep unfolding of a sequential version of the iterative shrinkage-thresholding algorithm (ISTA), a simple and classic algorithm for solving L1-regularized least-squares. This equivalence allows interpretation of state-of-the-art unitary RNNs (uRNNs) as an unfolded sparse coding algorithm. I will also describe a new type of RNN architecture called deep recurrent nonnegative matrix factorization (DR-NMF). DR-NMF is an unfolding of a sparse NMF model of nonnegative spectrograms for audio source separation. Both of these networks outperform conventional LSTM networks while also providing interpretability for practitioners.
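For readers unfamiliar with ISTA, the following is a minimal sketch of the classic (non-sequential) algorithm for L1-regularized least-squares. It is not the speaker's formulation: the function names, the fixed step size, and the pure-Python list arithmetic are all assumptions made for illustration. Each iteration applies a linear map followed by an elementwise shrinkage nonlinearity, which is the layer-like structure that deep unfolding builds on.

```python
def soft_threshold(v, t):
    """Shrink each entry of v toward zero by t (proximal operator of t * L1 norm)."""
    return [max(abs(a) - t, 0.0) * (1.0 if a > 0 else -1.0) for a in v]


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * a for m, a in zip(row, v)) for row in M]


def transpose(M):
    """Transpose a matrix given as a list of rows."""
    return [list(col) for col in zip(*M)]


def ista(A, y, lam, step, n_iter=200):
    """Approximately minimize 0.5 * ||A x - y||^2 + lam * ||x||_1 with ISTA.

    Illustrative assumption: `step` is supplied by the caller and is at most
    1 / L, where L is the largest eigenvalue of A^T A.
    """
    At = transpose(A)
    x = [0.0] * len(A[0])
    for _ in range(n_iter):
        # Gradient of the least-squares term: A^T (A x - y)
        residual = [p - q for p, q in zip(matvec(A, x), y)]
        grad = matvec(At, residual)
        # Gradient step followed by shrinkage (the "nonlinearity" of one layer)
        x = soft_threshold([xi - step * g for xi, g in zip(x, grad)], step * lam)
    return x
```

With an identity matrix for `A`, the minimizer is simply the soft-thresholded observation, so `ista([[1.0, 0.0], [0.0, 1.0]], [3.0, 0.5], lam=1.0, step=1.0)` shrinks the first entry by 1 and sets the second to zero.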

-

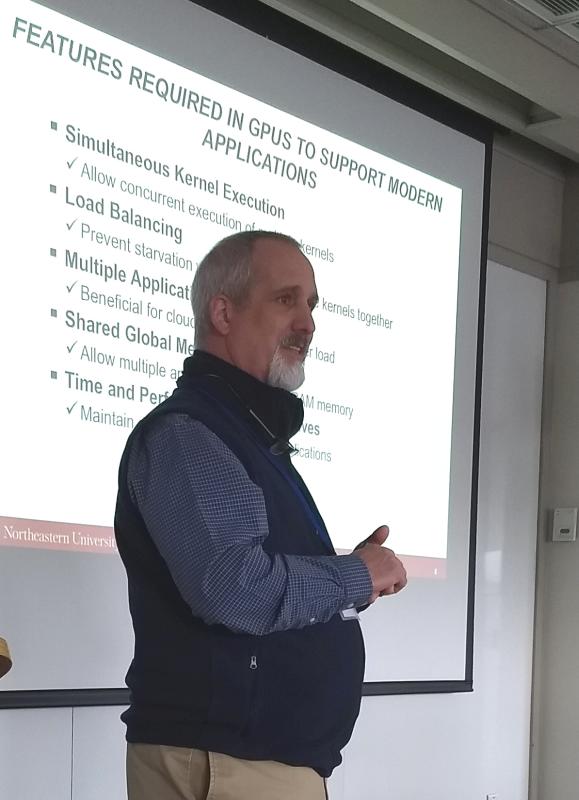

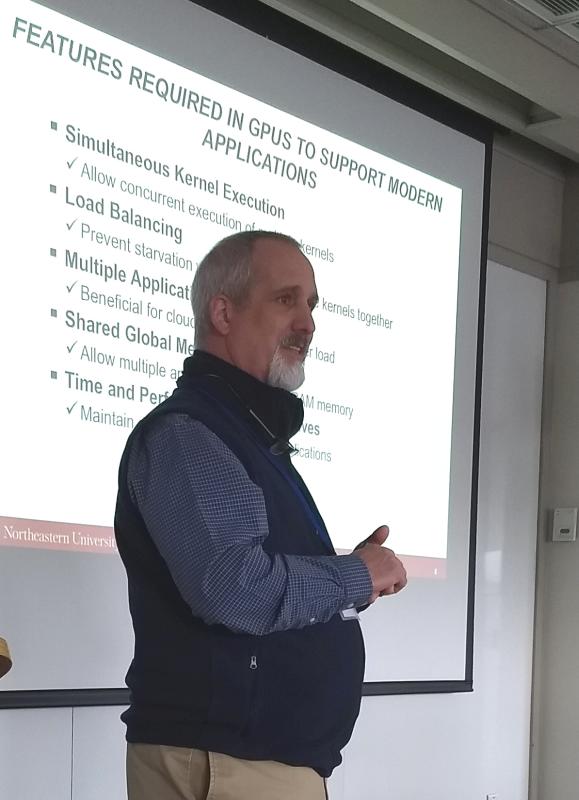

- Date & Time: Friday, February 2, 2018; 12:00

Speaker: Dr. David Kaeli, Northeastern University

MERL Host: Abraham Goldsmith

Research Areas: Control, Optimization, Machine Learning, Speech & Audio

Abstract  GPU computing is alive and well! The GPU has allowed researchers to overcome a number of computational barriers in important problem domains. Still, challenges remain in using a GPU to target more general-purpose applications. GPUs achieve impressive speedups when compared to CPUs, since GPUs have a large number of compute cores and high memory bandwidth. Recent GPU performance is approaching 10 teraflops of single-precision performance on a single device. In this talk we will discuss current trends with GPUs, including some advanced features that allow them to exploit multi-context grains of parallelism. Further, we consider how GPUs can be treated as cloud-based resources, enabling a GPU-enabled server to deliver HPC cloud services by leveraging virtualization and collaborative filtering. Finally, we argue for new heterogeneous workloads and discuss the role of the Heterogeneous Systems Architecture (HSA), a standard that further supports integration of the CPU and GPU into a common framework. We present a new class of benchmarks specifically tailored to evaluate the benefits of features supported in the new HSA programming model.

-

- Date: Sunday, December 10, 2017

Location: Hyatt Regency, Long Beach, CA

MERL Contact: Chiori Hori

Research Area: Speech & Audio

Brief - MERL researcher Chiori Hori led the organization of the 6th edition of the Dialog System Technology Challenges (DSTC6). This year's edition of DSTC is split into three tracks: End-to-End Goal Oriented Dialog Learning, End-to-End Conversation Modeling, and Dialogue Breakdown Detection. A total of 23 teams from all over the world competed in the various tracks, and will meet at the Hyatt Regency in Long Beach, CA, USA on December 10 to present their results at a dedicated workshop colocated with NIPS 2017.

MERL's Speech and Audio Team and Mitsubishi Electric Corporation jointly submitted a set of systems to the End-to-End Conversation Modeling Track, obtaining the best rank among 19 submissions in terms of objective metrics.

-

- Date & Time: Wednesday, February 1, 2017; 12:00-13:00

Speaker: Dr. Heiga ZEN, Google

MERL Host: Chiori Hori

Research Area: Speech & Audio

Abstract - Recent progress in generative modeling has significantly improved the naturalness of synthesized speech. In this talk, I will summarize these generative model-based approaches to speech synthesis, such as WaveNet, a deep generative model of raw audio waveforms. We show that WaveNets are able to generate speech which mimics any human voice and which sounds more natural than the best existing Text-to-Speech systems.

See https://deepmind.com/blog/wavenet-generative-model-raw-audio/ for further details.

-

- Date: Saturday, December 10, 2016

Location: Centre Convencions Internacional Barcelona, Barcelona, Spain

Research Areas: Machine Learning, Speech & Audio

Brief - MERL researcher John Hershey is organizing a Workshop on End-to-End Speech and Audio Processing, on behalf of MERL's Speech and Audio team, and in collaboration with Philemon Brakel of the University of Montreal. The workshop focuses on recent advances in end-to-end deep learning methods that address the alignment and structured prediction problems that naturally arise in speech and audio processing. The all-day workshop takes place on Saturday, December 10th at NIPS 2016, in Barcelona, Spain.

-

- Date: Tuesday, December 13, 2016 - Friday, December 16, 2016

Location: San Diego, California

Research Area: Speech & Audio

Brief - The IEEE Workshop on Spoken Language Technology is a premier international showcase for advances in spoken language technology. The theme for 2016 is "machine learning: from signal to concepts," which reflects the current excitement about end-to-end learning in speech and language processing. This year, MERL is showing its support for SLT as one of its top sponsors, along with Amazon and Microsoft.

-

- Date: Tuesday, December 13, 2016

Location: 2016 IEEE Spoken Language Technology Workshop, San Diego, California

Speaker: John Hershey, MERL

MERL Contact: Jonathan Le Roux

Research Areas: Machine Learning, Speech & Audio

Brief - MERL researcher John Hershey presents an invited tutorial at the 2016 IEEE Workshop on Spoken Language Technology, in San Diego, California. The topic, "developing novel deep neural network architectures from probabilistic models," stems from MERL work with collaborators Jonathan Le Roux and Shinji Watanabe on a principled framework that seeks to improve our understanding of deep neural networks and draws inspiration for new types of deep networks from the arsenal of principles and tools developed over the years for conventional probabilistic models. The tutorial covers a range of parallel ideas in the literature that have formed a recent trend, as well as their application to speech and language.

-

- Date: Friday, October 21, 2016

Location: MIT, McGovern Institute for Brain Research, Cambridge, MA

MERL Contact: Jonathan Le Roux

Research Area: Speech & Audio

Brief - SANE 2016, a one-day event gathering researchers and students in speech and audio from the Northeast of the American continent, will be held on Friday, October 21, 2016 at MIT's Brain and Cognitive Sciences Department, at the McGovern Institute for Brain Research, in Cambridge, MA.

It is a follow-up to SANE 2012 (Mitsubishi Electric Research Labs - MERL), SANE 2013 (Columbia University), SANE 2014 (MIT CSAIL), and SANE 2015 (Google NY). Since the first edition, the audience has steadily grown, gathering 140 researchers and students in 2015.

SANE 2016 will feature invited talks by leading researchers: Juan P. Bello (NYU), William T. Freeman (MIT/Google), Nima Mesgarani (Columbia University), Dan Ellis (Google), Shinji Watanabe (MERL), Josh McDermott (MIT), and Jesse Engel (Google). It will also feature a lively poster session during lunch time, open to both students and researchers.

SANE 2016 is organized by Jonathan Le Roux (MERL), Josh McDermott (MIT), Jim Glass (MIT), and John R. Hershey (MERL).

-

- Date & Time: Friday, June 3, 2016; 1:30PM - 3:00PM

Speaker: Nobuaki Minematsu and Daisuke Saito, The University of Tokyo

Research Area: Speech & Audio

Abstract - Speech signals convey various kinds of information, which fall into two groups: linguistic and extra-linguistic information. Many speech applications, however, focus on only a single aspect of speech. For example, speech recognizers try to extract only word identity from speech, and speaker recognizers extract only speaker identity. Here, irrelevant features are often treated as hidden or latent by applying probability theory to a large number of samples, or they are normalized to have quasi-standard values. In speech analysis, however, phases are usually removed, not hidden or normalized, and pitch harmonics are also removed, not hidden or normalized. The resulting speech spectrum still contains both linguistic information and extra-linguistic information. Is there any good method to remove extra-linguistic information from the spectrum? In this talk, our answer to that question, called speech structure, is introduced. Extra-linguistic variation can be modeled as a feature space transformation, and our speech structure is based on the transform-invariance of f-divergence. This proposal was inspired by findings in classical studies of structural phonology and recent studies of developmental psychology. Speech structure has been applied to accent clustering, speech recognition, and language identification. These applications are also explained in the talk.
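The transform-invariance mentioned above can be checked numerically in a simple special case. The sketch below uses the KL divergence (one member of the f-divergence family) between two one-dimensional Gaussians and applies the same invertible affine map to both. The helper names `kl_gauss` and `affine` and all numeric values are hypothetical, chosen only for illustration; this is not the speakers' speech structure method.

```python
import math

def kl_gauss(m1, s1, m2, s2):
    """Closed-form KL( N(m1, s1^2) || N(m2, s2^2) ) for standard deviations s1, s2 > 0."""
    return math.log(s2 / s1) + (s1 ** 2 + (m1 - m2) ** 2) / (2 * s2 ** 2) - 0.5

def affine(m, s, a, b):
    """Mean and standard deviation of a*X + b when X ~ N(m, s^2); a must be nonzero."""
    return a * m + b, abs(a) * s

# Two distributions, compared before and after the same invertible transform.
before = kl_gauss(0.0, 1.0, 2.0, 3.0)
p = affine(0.0, 1.0, a=-4.0, b=7.0)
q = affine(2.0, 3.0, a=-4.0, b=7.0)
after = kl_gauss(*p, *q)

print(math.isclose(before, after))
```

The divergence is unchanged because the transform rescales both densities by the same factor, so their ratio at corresponding points is preserved.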

-
