TR2018-202

Sim-to-Real Transfer Learning using Robustified Controllers in Robotic Tasks involving Complex Dynamics

van Baar, J., Sullivan, A., Corcodel, R., Jha, D.K., Romeres, D., Nikovski, D.N., "Sim-to-Real Transfer Learning using Robustified Controllers in Robotic Tasks involving Complex Dynamics", IEEE International Conference on Robotics and Automation (ICRA), DOI: 10.1109/ICRA.2019.8793561, May 2019, pp. 6001-6007.

@inproceedings{vanBaar2019may,
  author = {{van Baar}, Jeroen and Sullivan, Alan and Corcodel, Radu and Jha, Devesh K. and Romeres, Diego and Nikovski, Daniel N.},
  title = {{Sim-to-Real Transfer Learning using Robustified Controllers in Robotic Tasks involving Complex Dynamics}},
  booktitle = {IEEE International Conference on Robotics and Automation (ICRA)},
  year = 2019,
  pages = {6001--6007},
  month = may,
  doi = {10.1109/ICRA.2019.8793561},
  url = {https://www.merl.com/publications/TR2018-202}
}

Abstract:

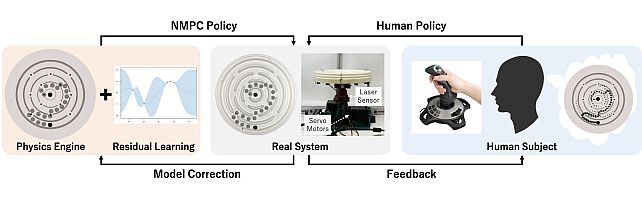

Learning robot tasks or controllers using deep reinforcement learning has proven effective in simulation. Learning in simulation has several advantages. For example, one can fully control the simulated environment, including halting motions while performing computations. Another advantage when robots are involved is that the time a robot spends learning a task, rather than being productive, can be reduced by transferring the learned task to the real robot. Transfer learning requires some amount of fine-tuning on the real robot. For tasks that involve complex (non-linear) dynamics, the fine-tuning itself may take a substantial amount of time. To reduce the amount of fine-tuning, we propose to learn robustified controllers in simulation. Robustified controllers are learned by exploiting the ability to change simulation parameters (both appearance and dynamics) for successive training episodes. An additional benefit of this approach is that it alleviates the need to determine the simulator's physics parameters precisely, which is a non-trivial task. We demonstrate our proposed approach on a real setup in which a robot aims to solve a maze game, which involves complex dynamics due to static friction and potentially large accelerations. We show that the amount of fine-tuning in transfer learning for a robustified controller is substantially reduced compared to a non-robustified controller.
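The idea of changing simulation parameters between training episodes can be illustrated with a minimal Python sketch. It is not the authors' implementation: the parameter names (`static_friction`, `marble_mass_kg`, `light_intensity`, `hue_shift`), their ranges, and the `run_episode` callback are all hypothetical placeholders chosen for illustration, and uniform sampling is an assumption.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    """Hypothetical per-episode simulator settings (names are illustrative)."""
    static_friction: float   # dynamics: friction coefficient
    marble_mass_kg: float    # dynamics: marble mass
    light_intensity: float   # appearance: scene brightness scale
    hue_shift: float         # appearance: colour shift


# Assumed sampling ranges; the values used in the paper are not stated here.
RANGES = {
    "static_friction": (0.05, 0.5),
    "marble_mass_kg": (0.002, 0.01),
    "light_intensity": (0.5, 1.5),
    "hue_shift": (-0.1, 0.1),
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw one set of simulator parameters uniformly from RANGES."""
    return SimParams(**{k: rng.uniform(lo, hi) for k, (lo, hi) in RANGES.items()})


def train(num_episodes: int, run_episode, seed: int = 0) -> list:
    """Resample parameters before every episode, then run that episode.

    `run_episode(params)` is a placeholder for resetting the simulator with
    `params` and performing one reinforcement-learning episode.
    """
    rng = random.Random(seed)
    history = []
    for _ in range(num_episodes):
        params = sample_params(rng)  # new appearance and dynamics each episode
        run_episode(params)
        history.append(params)
    return history
```

Calling `train(1000, run_episode)` with a real episode function would expose the controller to a different combination of appearance and dynamics settings in every episode, which is the mechanism the abstract credits for reducing fine-tuning on the real robot.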

Related News & Events

NEWS: New robotics benchmark system

Date: November 16, 2020

MERL Contact: Daniel N. Nikovski

Research Areas: Artificial Intelligence, Machine Learning, Robotics

Brief: MERL researchers, in collaboration with researchers from MELCO and the Department of Brain and Cognitive Science at MIT, have released the Circular Maze Environment (CME) simulation software. This system could be used as a new benchmark for evaluating different control and robot learning algorithms. The control objective is to tip and tilt the maze so as to drive one or more marbles to the innermost ring of the circular maze. Although the system is very intuitive for humans to control, it is very challenging for artificial intelligence agents to learn efficiently. It poses several challenges for both model-based and model-free methods, due to its non-smooth dynamics, long planning horizon, and non-linear dynamics. The released Python package provides the simulation environment for the circular maze, in which the movement of multiple marbles can be simulated simultaneously. The package also provides a trajectory optimization algorithm for designing a model-based controller in simulation.