TR2012-016

Indirect Model-Based Speech Enhancement

Le Roux, J., Hershey, J.R., "Indirect Model-based Speech Enhancement", IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), DOI: 10.1109/ICASSP-2012.6288806, March 2012, pp. 4045-4048.

@inproceedings{LeRoux2012mar2,
  author = {{Le Roux}, J. and Hershey, J.R.},
  title = {{Indirect Model-based Speech Enhancement}},
  booktitle = {IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP)},
  year = 2012,
  pages = {4045--4048},
  month = mar,
  doi = {10.1109/ICASSP-2012.6288806},
  issn = {1520-6149},
  isbn = {978-1-4673-0045-2},
  url = {https://www.merl.com/publications/TR2012-016}
}

Abstract:

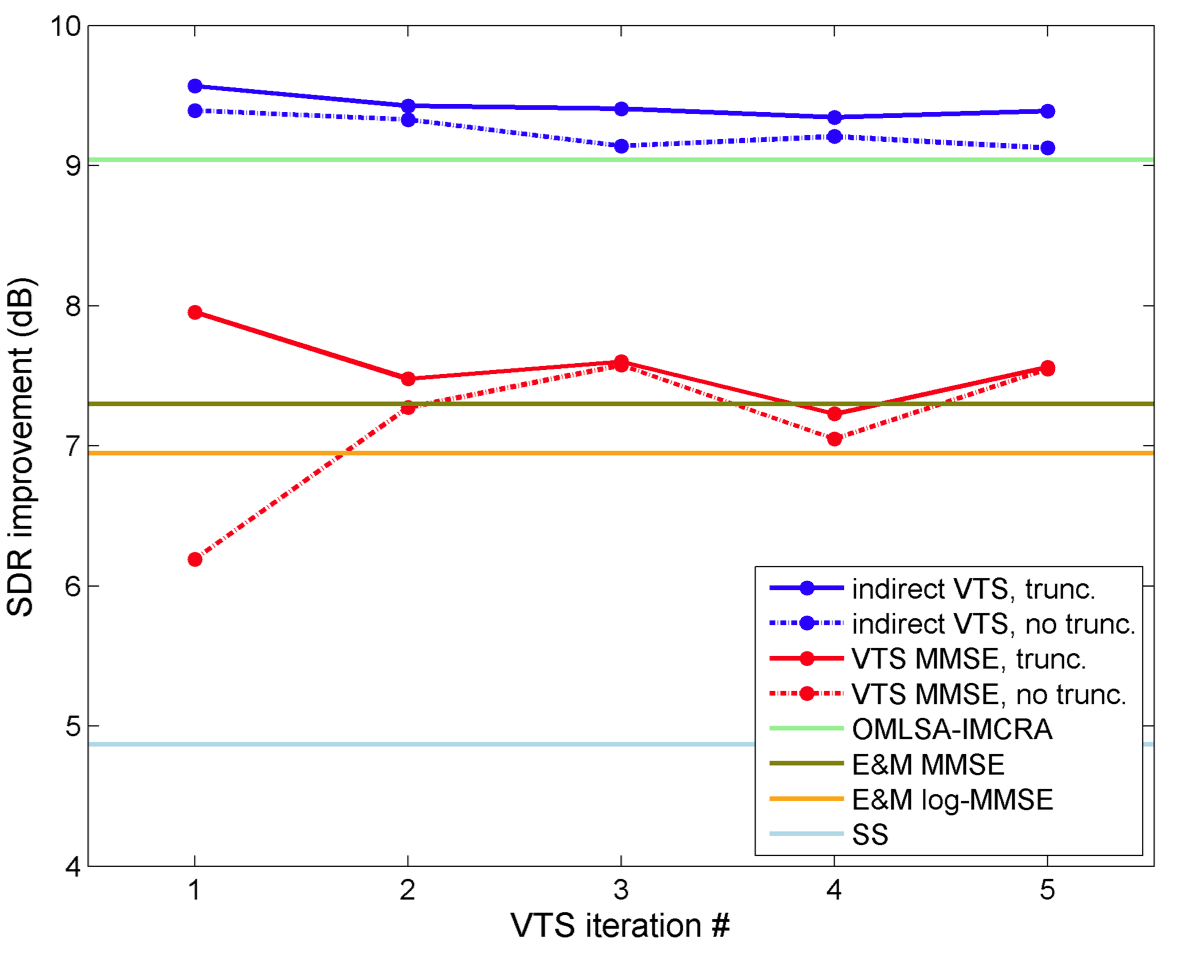

Model-based speech enhancement methods, such as vector Taylor series (VTS) based methods [1, 2], share a common methodology: they estimate speech using the expected value of the clean speech given the noisy speech under a statistical model. We show that it may be better to use the expected value of the noise under the model and subtract it from the noisy observation to form an indirect estimate of the speech. Interestingly, for VTS, this methodology turns out to be related to applying a gain that depends on the signal-to-noise ratio (SNR) to the direct VTS speech estimate. In results obtained on an automotive noise task, this methodology produces an average SNR improvement of 1.6 dB relative to conventional methods.
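The contrast between the direct and indirect estimates can be illustrated with a toy sketch for a single frequency bin. This is not the paper's VTS implementation. It assumes, purely for illustration, Gaussian priors on the log-power of speech `x` and noise `n`, an observation model `y ≈ log(exp(x) + exp(n))`, and brute-force grid integration in place of the VTS approximation. All function names, parameters, and numeric values are hypothetical.

```python
import numpy as np


def posterior_means(y, mu_x, sd_x, mu_n, sd_n, sd_y=0.1, grid=401):
    """Posterior means E[x|y] and E[n|y] for one bin, by grid integration.

    Assumed toy model (not from the paper): x ~ N(mu_x, sd_x^2),
    n ~ N(mu_n, sd_n^2), y ~ N(log(exp(x) + exp(n)), sd_y^2).
    """
    x = np.linspace(mu_x - 5 * sd_x, mu_x + 5 * sd_x, grid)[:, None]
    n = np.linspace(mu_n - 5 * sd_n, mu_n + 5 * sd_n, grid)[None, :]
    # Unnormalized log posterior on the (x, n) grid.
    log_w = (
        -0.5 * ((x - mu_x) / sd_x) ** 2
        - 0.5 * ((n - mu_n) / sd_n) ** 2
        - 0.5 * ((y - np.logaddexp(x, n)) / sd_y) ** 2
    )
    w = np.exp(log_w - log_w.max())  # subtract the max for numerical stability
    w /= w.sum()
    return float((w * x).sum()), float((w * n).sum())


def indirect_log_speech(y, n_hat, floor=1e-3):
    """Indirect estimate: subtract the expected noise power from the noisy power.

    The floor (an assumption of this sketch) keeps the power positive.
    """
    speech_power = np.maximum(np.exp(y) - np.exp(n_hat), floor * np.exp(y))
    return float(np.log(speech_power))


y = 2.0  # noisy log-power observation (arbitrary illustrative value)
x_direct, n_hat = posterior_means(y, mu_x=1.0, sd_x=2.0, mu_n=1.0, sd_n=1.0)
x_indirect = indirect_log_speech(y, n_hat)
print(f"direct   E[x|y]              = {x_direct:.3f}")
print(f"indirect log(e^y - e^E[n|y]) = {x_indirect:.3f}")
```

In this sketch the direct estimate uses the posterior mean of the speech itself, while the indirect estimate uses only the posterior mean of the noise and recovers the speech by subtraction from the observation. Because the expectations are taken in the log domain, the two estimates generally differ.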

Related News & Events

NEWS: ICASSP 2012: 8 publications by Petros T. Boufounos, Dehong Liu, John R. Hershey, Jonathan Le Roux and Zafer Sahinoglu

Date: March 25, 2012

Where: IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP)

MERL Contacts: Dehong Liu; Jonathan Le Roux; Petros T. Boufounos

Brief: The papers "Dictionary Learning Based Pan-Sharpening" by Liu, D. and Boufounos, P.T., "Multiple Dictionary Learning for Blocking Artifacts Reduction" by Wang, Y. and Porikli, F., "A Compressive Phase-Locked Loop" by Schnelle, S.R., Slavinsky, J.P., Boufounos, P.T., Davenport, M.A. and Baraniuk, R.G., "Indirect Model-based Speech Enhancement" by Le Roux, J. and Hershey, J.R., "A Clustering Approach to Optimize Online Dictionary Learning" by Rao, N. and Porikli, F., "Parametric Multichannel Adaptive Signal Detection: Exploiting Persymmetric Structure" by Wang, P., Sahinoglu, Z., Pun, M.-O. and Li, H., "Additive Noise Removal by Sparse Reconstruction on Image Affinity Nets" by Sundaresan, R. and Porikli, F. and "Depth Sensing Using Active Coherent Illumination" by Boufounos, P.T. were presented at the IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP).