TR2012-043

Connecting the Dots in Multi-Class Classification: From Nearest Subspace to Collaborative Representation


- Chi, Y.; Porikli, F., "Connecting the Dots in Multi-Class Classification: From Nearest Subspace to Collaborative Representation", IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2012, pp. 3602-3609.

@inproceedings{Chi2012jun,
  author = {Chi, Y. and Porikli, F.},
  title = {{Connecting the Dots in Multi-Class Classification: From Nearest Subspace to Collaborative Representation}},
  booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = 2012,
  pages = {3602--3609},
  month = jun,
  url = {https://www.merl.com/publications/TR2012-043}
}



Abstract:

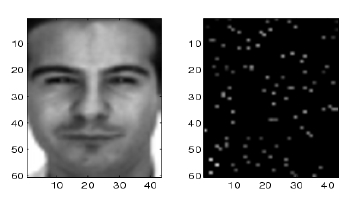

We present a novel multi-class classifier that strikes a balance between two existing approaches. The nearest-subspace classifier assigns a test sample to the class that minimizes the distance between the sample and its principal projection in that class. The collaborative representation based classifier first represents the test sample using all training samples from all classes as the dictionary, then assigns it to the class that minimizes the distance between this collaborative component and its projection in that class. In our formulation, the sparse representation based classifier [1] and the nearest-subspace classifier become special cases under different regularization parameters. We show that classification performance can be improved by optimally tuning the regularization parameter, which can be done at almost no extra computational cost. We give extensive numerical examples for digit identification and face recognition, comparing the performance of different choices of collaborative representation, in particular when only a partial observation of the test sample is available via compressive sensing measurements.
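The two residuals described in the abstract can be illustrated with a minimal NumPy sketch. It is not the authors' implementation and rests on several assumptions that the abstract does not confirm: the "principal projection" is taken to be the least-squares projection onto the span of a class's training samples, the collaborative code is computed with a ridge (ℓ2) penalty rather than a sparse one, and the two residuals are blended as a weighted sum. The names `class_residuals`, `classify`, `lam`, and `ridge` are hypothetical.

```python
import numpy as np

def class_residuals(X, labels, y, ridge=1e-3):
    """Per-class nearest-subspace (ns) and collaborative (cr) residuals.

    X      : (d, n) matrix whose columns are training samples
    labels : (n,) class label of each column
    y      : (d,) test sample
    ridge  : assumed l2 penalty for the collaborative code (hypothetical)
    """
    classes = np.unique(labels)
    # Collaborative code: ridge-regularized least squares over ALL classes.
    gram = X.T @ X + ridge * np.eye(X.shape[1])
    alpha = np.linalg.solve(gram, X.T @ y)
    y_collab = X @ alpha  # collaborative component of the test sample

    ns = np.empty(len(classes))
    cr = np.empty(len(classes))
    for k, c in enumerate(classes):
        mask = labels == c
        Xc = X[:, mask]
        # Nearest subspace: distance from y to its projection onto span(Xc).
        coef, *_ = np.linalg.lstsq(Xc, y, rcond=None)
        ns[k] = np.linalg.norm(y - Xc @ coef)
        # Collaborative: distance between the collaborative component
        # and the part of it contributed by class c alone.
        cr[k] = np.linalg.norm(y_collab - Xc @ alpha[mask])
    return classes, ns, cr

def classify(X, labels, y, lam=0.5, ridge=1e-3):
    """Pick the class minimizing ns + lam * cr (assumed blending rule)."""
    classes, ns, cr = class_residuals(X, labels, y, ridge)
    return classes[np.argmin(ns + lam * cr)]
```

In this sketch, `lam=0` reduces the rule to the nearest-subspace classifier, and larger values give more weight to the collaborative residual. Because both residual vectors are computed once per test sample, sweeping `lam` only repeats the final `argmin`, which is one plausible reading of the "almost no extra computational cost" remark. For the compressive sensing setting, one could pass `Phi @ X` and `Phi @ y` for some measurement matrix `Phi` (hypothetical here); the abstract does not say how the paper handles this.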

Related News & Events

NEWS: CVPR 2012: 4 publications by Yuichi Taguchi, Srikumar Ramalingam and Amit K. Agrawal

Date: June 16, 2012

Where: IEEE Conference on Computer Vision and Pattern Recognition (CVPR)

Research Area: Computer Vision

Brief: The papers "Connecting the Dots in Multi-Class Classification: From Nearest Subspace to Collaborative Representation" by Chi, Y. and Porikli, F., "Decomposing Global Light Transport using Time of Flight Imaging" by Wu, D., O'Toole, M., Velten, A., Agrawal, A. and Raskar, R., "Changedetection.net: A New Change Detection Benchmark Dataset" by Goyette, N., Jodoin, P.-M., Porikli, F., Konrad, J. and Ishwar, P., and "A Theory of Multi-Layer Flat Refractive Geometry" by Agrawal, A., Ramalingam, S., Taguchi, Y. and Chari, V. were presented at the IEEE Conference on Computer Vision and Pattern Recognition (CVPR).