TR2008-062

Incremental Exemplar Learning Schemes for Classification on Embedded Devices

- Jain, A., Nikovski, D., "Incremental Exemplar Learning Schemes for Classification on Embedded Devices", European Conference on Machine Learning and Principles and Practice of Knowledge Discovery in Databases (ECML PKDD), September 2008.

@inproceedings{Jain2008sep,
  author = {Jain, A. and Nikovski, D.},
  title = {{Incremental Exemplar Learning Schemes for Classification on Embedded Devices}},
  booktitle = {European Conference on Machine Learning and Principles and Practice of Knowledge Discovery in Databases (ECML PKDD)},
  year = 2008,
  month = sep,
  url = {https://www.merl.com/publications/TR2008-062}
}



Abstract:

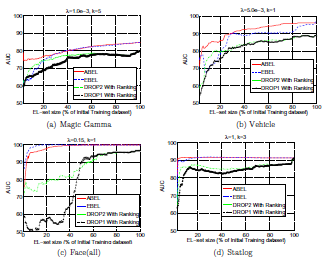

Although memory-based classifiers offer robust classification performance, their widespread use on embedded devices is hindered by the devices' limited memory resources. Moreover, embedded devices often operate in environments where the data changes over time, which entails frequent updates of the in-memory training data. A viable option for dealing with the memory constraint is to use Exemplar Learning (EL) schemes, which learn a small set of training instances (called the exemplar set) that carries high functional information and fits in memory. However, traditional EL schemes have several drawbacks that make them inapplicable to embedded devices: (1) they have high memory overheads and are unable to handle incremental updates to the exemplar set, (2) they cannot be customized to obtain exemplar sets of a user-defined size that fits in memory, and (3) they learn exemplar sets based on local neighborhood structure, which does not offer robust classification performance. In this paper, we propose two novel EL schemes, EBEL (Entropy-Based Exemplar Learning) and ABEL (AUC-Based Exemplar Learning), that overcome these shortcomings of traditional EL algorithms. We show that our schemes efficiently incorporate new training datasets while maintaining high-quality exemplar sets of any user-defined size. We present a comprehensive experimental analysis showing excellent tradeoffs between classification accuracy and memory usage for the proposed methods.
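To make the setting in the abstract concrete, the following is a minimal Python sketch of a memory-based (1-nearest-neighbor) classifier that keeps an exemplar set within a user-defined size and accepts incremental updates. It is not EBEL or ABEL: the abstract does not describe their selection criteria, so this sketch assumes a simple redundancy heuristic (evict the exemplar whose nearest neighbor is the closest one with the same label). The class name `BudgetedExemplarSet`, its methods, and the `budget` parameter are all hypothetical names chosen for illustration.

```python
import math


class BudgetedExemplarSet:
    """Illustrative 1-NN classifier whose exemplar set never exceeds `budget`.

    Assumption: the eviction rule below is a generic redundancy heuristic,
    not the entropy-based or AUC-based criteria of the paper.
    """

    def __init__(self, budget):
        if budget < 1:
            raise ValueError("budget must be at least 1")
        self.budget = budget
        self.exemplars = []  # list of (feature_tuple, label)

    def _nearest(self, x, skip=None):
        """Return (index, distance) of the stored exemplar closest to x."""
        best_i, best_d = None, math.inf
        for i, (p, _) in enumerate(self.exemplars):
            if i == skip:
                continue
            d = math.dist(x, p)
            if d < best_d:
                best_i, best_d = i, d
        return best_i, best_d

    def predict(self, x):
        """Label of the nearest exemplar, or None if the set is empty."""
        i, _ = self._nearest(x)
        return None if i is None else self.exemplars[i][1]

    def update(self, x, label):
        """Incrementally add one training instance, then enforce the budget."""
        self.exemplars.append((tuple(x), label))
        if len(self.exemplars) > self.budget:
            self._evict_most_redundant()

    def _evict_most_redundant(self):
        """Drop the exemplar whose same-label nearest neighbor is closest."""
        victim, victim_d = None, math.inf
        for i, (p, lab) in enumerate(self.exemplars):
            j, d = self._nearest(p, skip=i)
            if j is not None and self.exemplars[j][1] == lab and d < victim_d:
                victim, victim_d = i, d
        if victim is None:
            victim = 0  # no same-label neighbor found: fall back to the oldest
        self.exemplars.pop(victim)


# Usage: stream six labeled points through a set limited to four exemplars.
model = BudgetedExemplarSet(budget=4)
stream = [
    ((0.0, 0.0), "a"), ((0.1, 0.0), "a"),
    ((5.0, 5.0), "b"), ((5.1, 5.0), "b"),
    ((0.05, 0.0), "a"), ((5.05, 5.0), "b"),
]
for point, label in stream:
    model.update(point, label)
```

The sketch only illustrates the two constraints the abstract emphasizes, a fixed memory budget and incremental updates. Each eviction scans all pairs of exemplars, which is acceptable for small budgets but would need an index or cached distances for larger ones.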

Related News & Events

NEWS: ECML PKDD 2008: publication by Daniel Nikovski and others

Date: September 15, 2008

Where: European Conference on Machine Learning and Principles and Practice of Knowledge Discovery in Databases (ECML PKDD)

MERL Contact: Daniel N. Nikovski

Research Area: Data Analytics

Brief: The paper "Incremental Exemplar Learning Schemes for Classification on Embedded Devices" by Jain, A. and Nikovski, D. was presented at the European Conference on Machine Learning and Principles and Practice of Knowledge Discovery in Databases (ECML PKDD).